XLM-ROBERTA-BASE-XNLI-EN

Model description

This model builds on the XLM-RoBERTa-base model, whose pre-training was continued on a large corpus of multilingual tweets.

It was developed following a strategy similar to the one introduced with the TweetEval framework.

The model was then fine-tuned on the English part of the XNLI training dataset.

Intended Usage

This model was developed for zero-shot text classification in the domain of hate speech detection. It focuses on English, because it was fine-tuned on English data. Since the base model was pre-trained on 100 different languages, it has also shown some effectiveness in other languages. Please refer to the list of languages in the XLM-RoBERTa paper.

Usage with Zero-Shot Classification pipeline

from transformers import pipeline

classifier = pipeline("zero-shot-classification",
                      model="morit/english_xlm_xnli")

After loading the model, you can classify sequences in the languages mentioned above. Provide the sequence to classify, the candidate labels, and a hypothesis template; each candidate label is inserted into the template to form the hypothesis that the model tests against the sequence.

sequence_to_classify = "I think Rishi Sunak is going to win the elections"

# we can specify candidate labels and hypothesis:
candidate_labels = ["politics", "football"]
hypothesis_template = "This example is {}"

# classify using the information provided
classifier(sequence_to_classify, candidate_labels, hypothesis_template=hypothesis_template)

# Output
# {'sequence': 'I think Rishi Sunak is going to win the elections',
#  'labels': ['politics', 'football'],
#  'scores': [0.7982912659645081, 0.20170868933200836]}
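To make the role of the hypothesis template more concrete, the following is a minimal sketch of how a zero-shot pipeline can turn candidate labels into NLI inputs and scores. It does not load the model. The helper names `build_nli_pairs` and `softmax` are hypothetical, and the entailment logits are placeholder values chosen for illustration, not real model outputs. The sketch assumes the single-label setting, in which the entailment logits are normalized across all candidate labels.

```python
import math

def build_nli_pairs(sequence, candidate_labels, hypothesis_template):
    """Pair the sequence (premise) with one hypothesis per candidate label."""
    return [(sequence, hypothesis_template.format(label))
            for label in candidate_labels]

def softmax(values):
    """Numerically stable softmax over a list of floats."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

pairs = build_nli_pairs(
    "I think Rishi Sunak is going to win the elections",
    ["politics", "football"],
    "This example is {}",
)
# pairs[0] is (premise, "This example is politics"), and so on for each label.

# Placeholder entailment logits, one per pair (assumption for illustration);
# in practice the NLI model produces one entailment logit per pair.
entailment_logits = [2.1, 0.7]

# Single-label setting: normalize entailment logits across the labels.
scores = softmax(entailment_logits)
```

Because the scores are normalized across labels in this setting, they sum to 1, which matches the shape of the output shown above.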

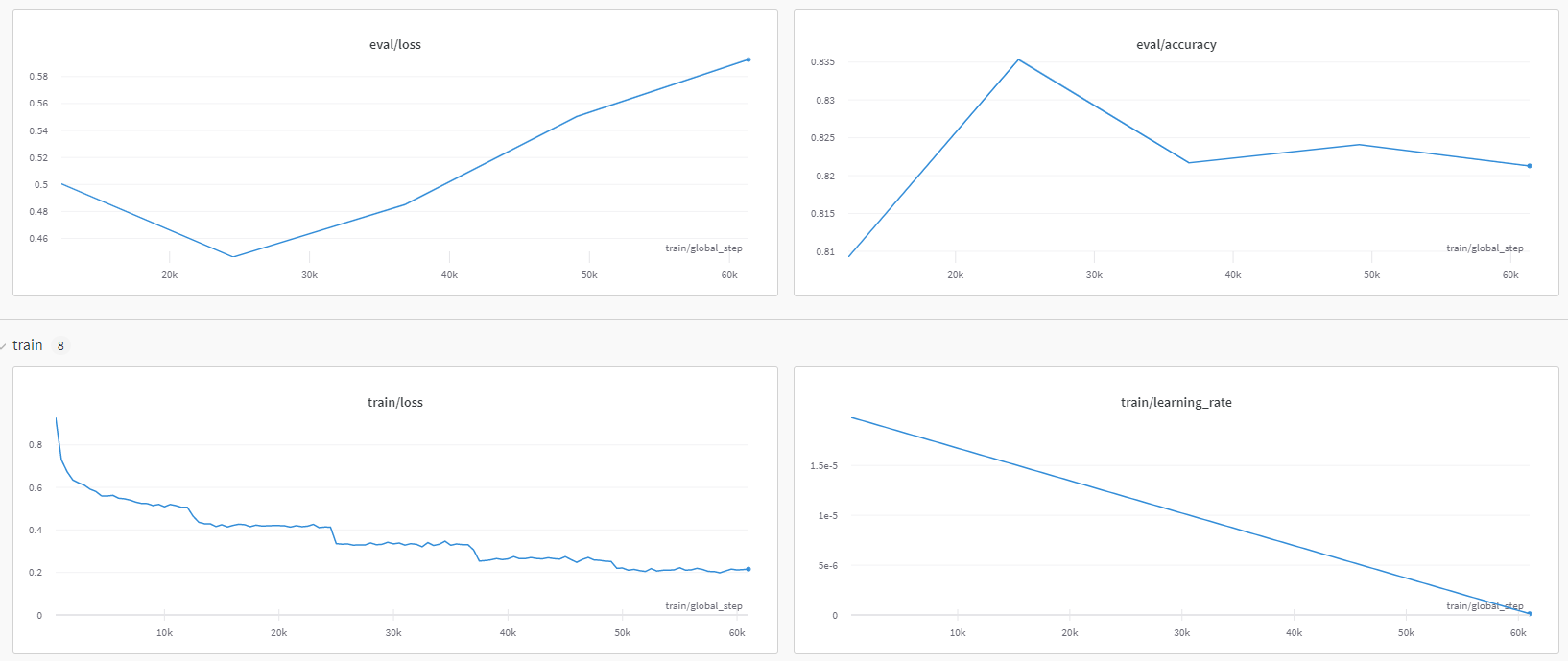

Training

This model was pre-trained on a set of 100 languages and then further trained on 198M multilingual tweets, as described in the original paper. It was then fine-tuned on the English part of the XNLI training set; the XNLI training data is derived from the MNLI dataset, with the non-English parts produced by machine translation. Fine-tuning ran for 5 epochs, with evaluation on the XNLI eval dataset at the end of every epoch. The model with the highest accuracy on the eval set was selected as the final model.

- learning rate: 2e-5

- batch size: 32

- max sequence length: 128

Training ran on a GPU (NVIDIA GeForce RTX 3090) and took 1h 47min.
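The selection procedure described above (evaluate after every epoch, keep the most accurate model) can be sketched as follows. This is not the original training script: `HYPERPARAMS` simply restates the values listed in this section, and `select_best_epoch`, `train_one_epoch`, and `evaluate` are hypothetical names standing in for whatever training and evaluation code was actually used.

```python
from typing import Callable, Tuple

# Values restated from this section; the dictionary layout is an assumption.
HYPERPARAMS = {
    "learning_rate": 2e-5,
    "batch_size": 32,
    "max_seq_length": 128,
    "num_epochs": 5,
}

def select_best_epoch(
    train_one_epoch: Callable[[int], None],
    evaluate: Callable[[], float],
    num_epochs: int,
) -> Tuple[int, float]:
    """Train for num_epochs and return (best_epoch, best_accuracy).

    train_one_epoch and evaluate are hypothetical callables: the first runs
    one pass over the training set, the second returns eval-set accuracy.
    """
    best_epoch, best_accuracy = 0, float("-inf")
    for epoch in range(1, num_epochs + 1):
        train_one_epoch(epoch)
        accuracy = evaluate()
        # Strict comparison: on a tie, the earlier epoch is kept.
        if accuracy > best_accuracy:
            best_epoch, best_accuracy = epoch, accuracy
    return best_epoch, best_accuracy
```

In a real run, the model weights would also be saved whenever a new best accuracy is reached, so that the selected model can be restored afterwards.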

Evaluation

The best-performing model was evaluated on the XNLI test set to obtain a comparable result:

predict_accuracy = 82.89%
