Donut (base-sized model, fine-tuned on CORD)

Donut model fine-tuned on CORD. It was introduced in the paper "OCR-free Document Understanding Transformer" by Geewook Kim et al. and first released in this repository.

Disclaimer: The team releasing Donut did not write a model card for this model, so this model card was written by the Hugging Face team.

Model description

Donut consists of a vision encoder (Swin Transformer) and a text decoder (BART). Given an image, the encoder first encodes it into a tensor of embeddings of shape (batch_size, seq_len, hidden_size). The decoder then generates text autoregressively, conditioned on the encoder's output.
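To make the encoder output shape concrete, here is a minimal sketch of the arithmetic. It rests on assumptions that this card does not state: a Swin patch size of 4, four stages (so three 2x patch-merging steps), a base embedding width of 128, and a 1280x960 input. The function name `encoder_output_shape` is hypothetical, and the real values should be read from the checkpoint's configuration.

```python
def encoder_output_shape(batch_size, height, width,
                         patch_size=4, num_stages=4, embed_dim=128):
    """Estimate (batch_size, seq_len, hidden_size) for a Swin-style encoder.

    All default values are assumptions for illustration, not values taken
    from this model card.
    """
    # The patch embedding shrinks each side by patch_size; every stage
    # after the first halves the resolution again via patch merging.
    reduction = patch_size * 2 ** (num_stages - 1)
    if height % reduction or width % reduction:
        # Simplification: this sketch only handles sizes divisible by
        # the total reduction factor.
        raise ValueError(f"height and width must be multiples of {reduction}")
    seq_len = (height // reduction) * (width // reduction)
    # The channel width doubles at every patch-merging step.
    hidden_size = embed_dim * 2 ** (num_stages - 1)
    return (batch_size, seq_len, hidden_size)


print(encoder_output_shape(1, 1280, 960))  # (1, 1200, 1024)
```

Under these assumptions, a single 1280x960 image yields 1200 embedding vectors of width 1024, which the decoder attends to at each generation step.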

Intended uses & limitations

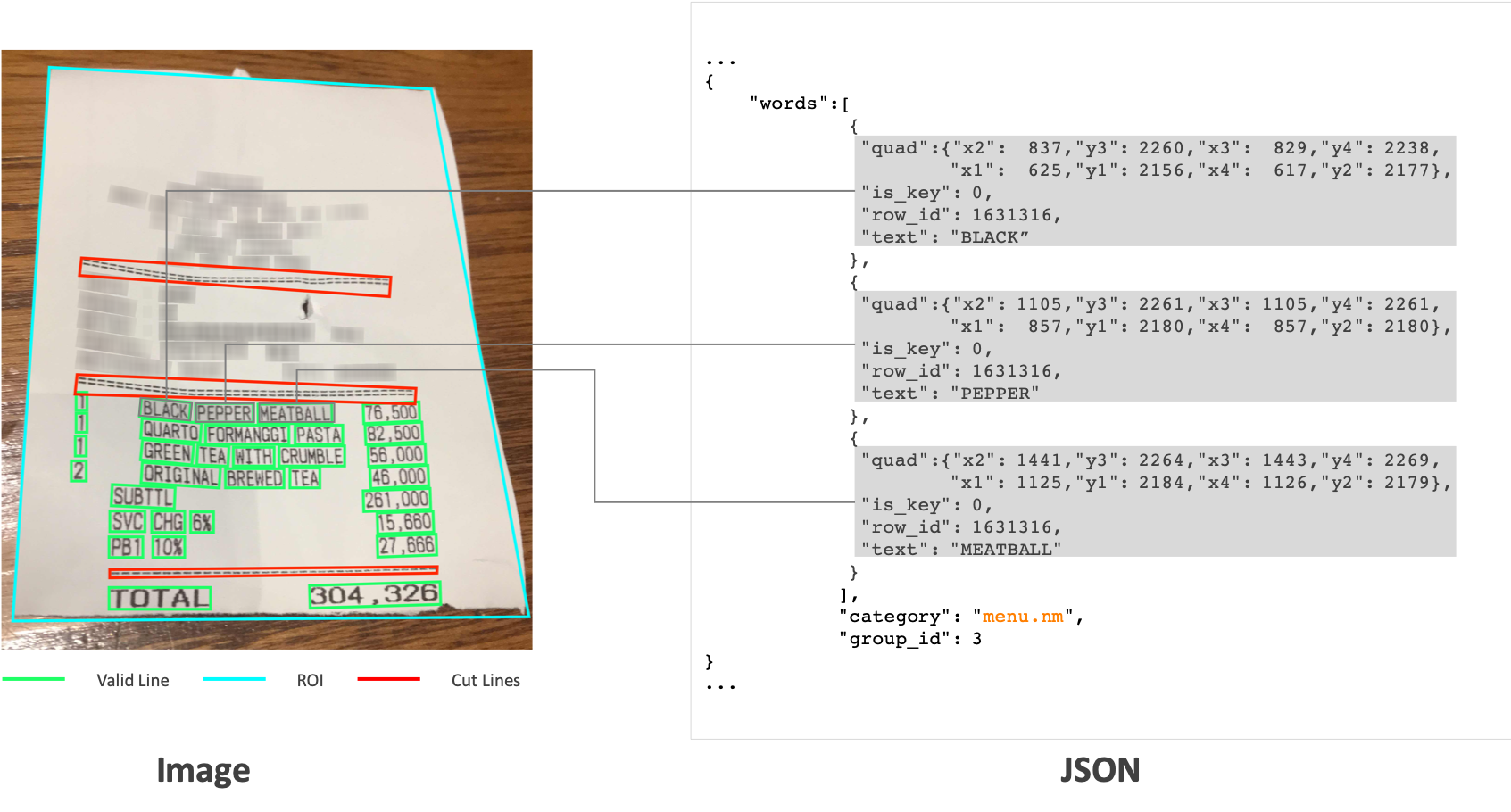

This model is fine-tuned on CORD, a document parsing dataset.

See the documentation, which includes code examples.
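As a rough illustration of document parsing with this model, the sketch below uses the `DonutProcessor` and `VisionEncoderDecoderModel` classes from the `transformers` library. The checkpoint name, the task prompt `<s_cord-v2>`, and the helper names `strip_special` and `parse_receipt` are assumptions that do not appear in this card, so verify them against the model repository before relying on them.

```python
import re

# Assumed values: neither string is given in this model card.
CHECKPOINT = "naver-clova-ix/donut-base-finetuned-cord-v2"
TASK_PROMPT = "<s_cord-v2>"


def strip_special(sequence, eos_token, pad_token):
    """Remove EOS/PAD tokens and the leading task-prompt tag."""
    sequence = sequence.replace(eos_token, "").replace(pad_token, "")
    # Drop only the first tag, which is the task prompt itself.
    return re.sub(r"<.*?>", "", sequence, count=1).strip()


def parse_receipt(image):
    """Parse a PIL image of a receipt into a nested dict (sketch)."""
    # Imported lazily so this module loads without the heavy dependencies.
    from transformers import DonutProcessor, VisionEncoderDecoderModel

    processor = DonutProcessor.from_pretrained(CHECKPOINT)
    model = VisionEncoderDecoderModel.from_pretrained(CHECKPOINT)

    # Encoder input: the preprocessed image as a PyTorch tensor.
    pixel_values = processor(image, return_tensors="pt").pixel_values
    # Decoder input: the task prompt that starts generation.
    decoder_input_ids = processor.tokenizer(
        TASK_PROMPT, add_special_tokens=False, return_tensors="pt"
    ).input_ids

    outputs = model.generate(
        pixel_values,
        decoder_input_ids=decoder_input_ids,
        max_length=model.decoder.config.max_position_embeddings,
        pad_token_id=processor.tokenizer.pad_token_id,
        eos_token_id=processor.tokenizer.eos_token_id,
        bad_words_ids=[[processor.tokenizer.unk_token_id]],
    )

    sequence = processor.batch_decode(outputs)[0]
    sequence = strip_special(
        sequence, processor.tokenizer.eos_token, processor.tokenizer.pad_token
    )
    # Convert the tagged token sequence into a nested dict.
    return processor.token2json(sequence)
```

A call such as `parse_receipt(Image.open("receipt.png").convert("RGB"))`, where the file name is a placeholder, would download the checkpoint on first use and return the parsed fields as a dictionary.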
