---
license: apache-2.0
base_model: microsoft/swin-tiny-patch4-window7-224
tags:
- generated_from_trainer
datasets:
- imagefolder
metrics:
- accuracy
model-index:
- name: swin-tiny-patch4-window7-224-finetuned-lungs-disease
  results:
  - task:
      name: Image Classification
      type: image-classification
    dataset:
      name: imagefolder
      type: imagefolder
      config: default
      split: test
      args: default
    metrics:
    - name: Accuracy
      type: accuracy
      value: 0.8745874587458746
---

swin-tiny-patch4-window7-224-finetuned-lungs-disease

This model is a fine-tuned version of microsoft/swin-tiny-patch4-window7-224 on the imagefolder dataset. It achieves the following results on the evaluation set:

- Loss: 0.2817

- Accuracy: 0.8746

Model description

This model was created by importing a chest X-ray image dataset from Kaggle into Google Colab:

https://www.kaggle.com/datasets/omkarmanohardalvi/lungs-disease-dataset-4-types

I then followed the image classification tutorial, which produced the following notebook:

https://colab.research.google.com/drive/1rNKeA25BR05iMUvKFvRD8SkySBOlO4AC?usp=sharing

The model assigns each image to one of the following classes:

- Viral Pneumonia

- Corona Virus Disease

- Normal

- Tuberculosis

- Bacterial Pneumonia
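A minimal sketch of how raw classifier outputs map to these class names. The label order below is an assumption (the checkpoint's own `id2label` config is authoritative), and the logits are made up for illustration:

```python
import math

# Assumed label order; check the checkpoint's `id2label` config for the real mapping.
LABELS = [
    "Viral Pneumonia",
    "Corona Virus Disease",
    "Normal",
    "Tuberculosis",
    "Bacterial Pneumonia",
]


def softmax(logits):
    """Convert raw logits to probabilities (shifted by the max for stability)."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict_label(logits, labels=LABELS):
    """Return (label, probability) for the highest-scoring class."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return labels[best], probs[best]


# Illustrative logits only, not real model output.
example_logits = [0.1, -1.2, 2.3, 0.0, 0.4]
label, prob = predict_label(example_logits)
print(label, round(prob, 3))
```

With a real checkpoint, the logits would come from the model's forward pass on a preprocessed image rather than from a hard-coded list.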

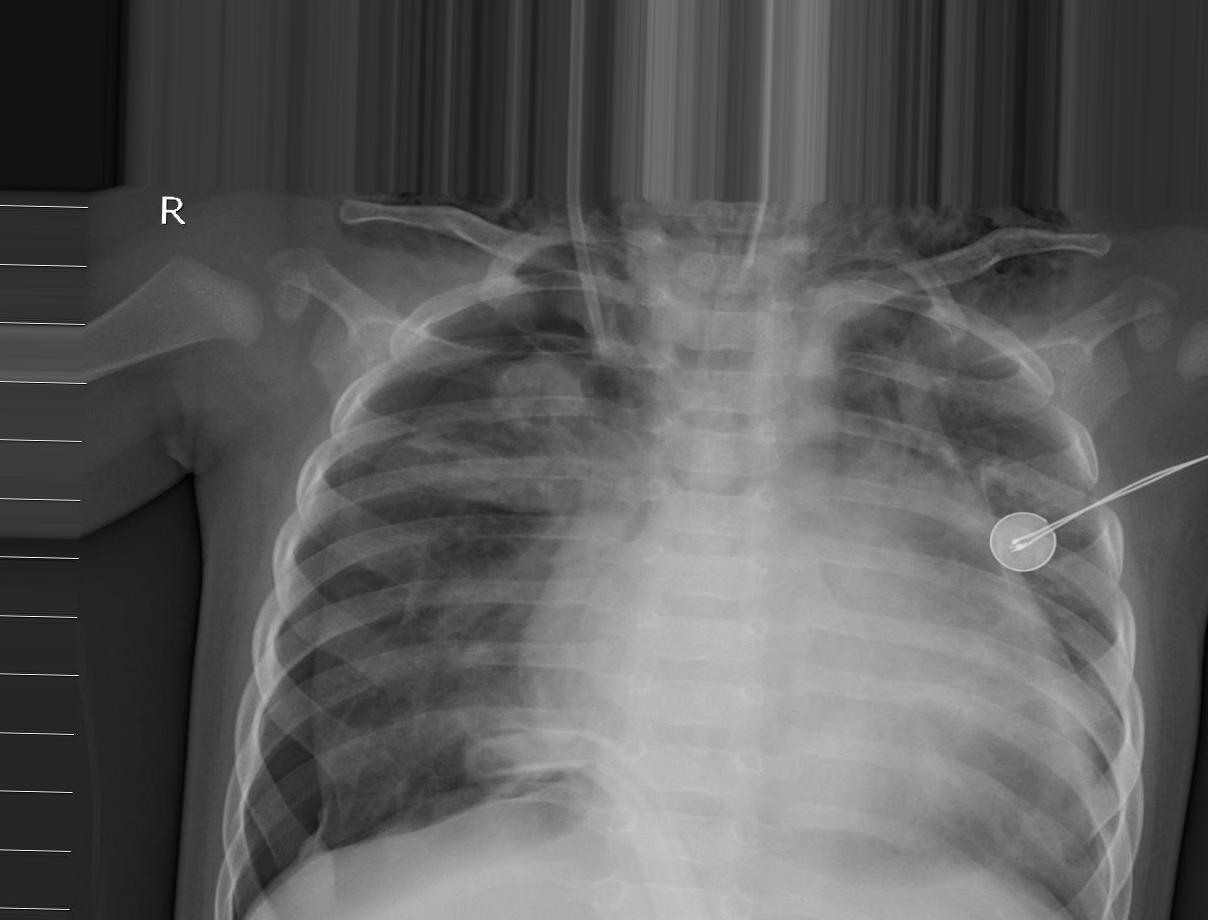

X-rays image example:

Intended uses & limitations

More information needed

Training and evaluation data

More information needed

Training procedure

Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- gradient_accumulation_steps: 4

- total_train_batch_size: 128

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 5
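Two of these values follow from the others, which a short sketch can make concrete. It assumes the usual linear-warmup-then-linear-decay shape for the `linear` scheduler and a total of 105 optimizer steps (the last step listed in the training results table); the function name `linear_warmup_lr` is hypothetical:

```python
import math

# Effective batch size: per-device batch size times gradient accumulation steps.
train_batch_size = 32
gradient_accumulation_steps = 4
effective_batch = train_batch_size * gradient_accumulation_steps  # 128


def linear_warmup_lr(step, base_lr=5e-5, total_steps=105, warmup_ratio=0.1):
    """Learning rate at a given optimizer step (hypothetical helper).

    Ramps linearly from 0 to base_lr during warmup, then decays
    linearly back to 0 at total_steps.
    """
    warmup_steps = math.ceil(total_steps * warmup_ratio)
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


for s in (0, 5, 11, 58, 105):
    print(s, linear_warmup_lr(s))
```

Under these assumptions the learning rate peaks at 5e-05 after 11 warmup steps and reaches zero at step 105.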

Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |
|---------------|-------|------|-----------------|----------|
| 0.7851        | 0.98  | 21   | 0.4674          | 0.8152   |
| 0.4335        | 2.0   | 43   | 0.3662          | 0.8515   |
| 0.3231        | 2.98  | 64   | 0.3361          | 0.8581   |
| 0.3014        | 4.0   | 86   | 0.2817          | 0.8746   |
| 0.252         | 4.88  | 105  | 0.3071          | 0.8713   |
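The headline metrics (loss 0.2817, accuracy 0.8746) match the step-86 row rather than the final one. The sketch below picks that row from the table values; whether the training run actually selected its checkpoint this way is an assumption, and the `EvalRow` type is introduced only for illustration:

```python
from typing import NamedTuple


class EvalRow(NamedTuple):
    """One row of the training results table (hypothetical helper type)."""
    train_loss: float
    epoch: float
    step: int
    val_loss: float
    accuracy: float


# Values copied from the training results table.
rows = [
    EvalRow(0.7851, 0.98, 21, 0.4674, 0.8152),
    EvalRow(0.4335, 2.0, 43, 0.3662, 0.8515),
    EvalRow(0.3231, 2.98, 64, 0.3361, 0.8581),
    EvalRow(0.3014, 4.0, 86, 0.2817, 0.8746),
    EvalRow(0.252, 4.88, 105, 0.3071, 0.8713),
]

# Best evaluation by validation loss and by accuracy.
best_by_loss = min(rows, key=lambda r: r.val_loss)
best_by_acc = max(rows, key=lambda r: r.accuracy)

print(best_by_loss.step, best_by_loss.val_loss, best_by_loss.accuracy)
print(best_by_acc.step == best_by_loss.step)
```

Both criteria agree on the same row here, and the last epoch shows slightly higher validation loss despite lower training loss.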

Framework versions

- Transformers 4.38.2

- Pytorch 2.1.0+cu121

- Datasets 2.18.0

- Tokenizers 0.15.2