url

stringlengths 58

61

| repository_url

stringclasses 1

value | labels_url

stringlengths 72

75

| comments_url

stringlengths 67

70

| events_url

stringlengths 65

68

| html_url

stringlengths 46

51

| id

int64 599M

1.12B

| node_id

stringlengths 18

32

| number

int64 1

3.68k

| title

stringlengths 1

276

| user

dict | labels

list | state

stringclasses 2

values | locked

bool 1

class | assignee

dict | assignees

list | milestone

dict | comments

sequence | created_at

timestamp[s] | updated_at

timestamp[s] | closed_at

timestamp[s] | author_association

stringclasses 3

values | active_lock_reason

null | draft

bool 2

classes | pull_request

dict | body

stringlengths 0

228k

⌀ | reactions

dict | timeline_url

stringlengths 67

70

| performed_via_github_app

null | state_reason

stringclasses 2

values | is_pull_request

bool 2

classes |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

https://api.github.com/repos/huggingface/datasets/issues/3678 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3678/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3678/comments | https://api.github.com/repos/huggingface/datasets/issues/3678/events | https://github.com/huggingface/datasets/pull/3678 | 1,123,402,426 | PR_kwDODunzps4yCt91 | 3,678 | Add code example in wikipedia card | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [] | 2022-02-03T18:09:02 | 2022-02-21T09:14:56 | 2022-02-04T13:21:39 | MEMBER | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3678",

"html_url": "https://github.com/huggingface/datasets/pull/3678",

"diff_url": "https://github.com/huggingface/datasets/pull/3678.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3678.patch",

"merged_at": "2022-02-04T13:21:39"

} | Close #3292. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3678/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3678/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3677 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3677/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3677/comments | https://api.github.com/repos/huggingface/datasets/issues/3677/events | https://github.com/huggingface/datasets/issues/3677 | 1,123,192,866 | I_kwDODunzps5C8pAi | 3,677 | Discovery cannot be streamed anymore | {

"login": "severo",

"id": 1676121,

"node_id": "MDQ6VXNlcjE2NzYxMjE=",

"avatar_url": "https://avatars.githubusercontent.com/u/1676121?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/severo",

"html_url": "https://github.com/severo",

"followers_url": "https://api.github.com/users/severo/followers",

"following_url": "https://api.github.com/users/severo/following{/other_user}",

"gists_url": "https://api.github.com/users/severo/gists{/gist_id}",

"starred_url": "https://api.github.com/users/severo/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/severo/subscriptions",

"organizations_url": "https://api.github.com/users/severo/orgs",

"repos_url": "https://api.github.com/users/severo/repos",

"events_url": "https://api.github.com/users/severo/events{/privacy}",

"received_events_url": "https://api.github.com/users/severo/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

}

] | closed | false | {

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

} | [

{

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

}

] | null | [

"Seems like a regression from https://github.com/huggingface/datasets/pull/2843\r\n\r\nOr maybe it's an issue with the hosting. I don't think so, though, because https://www.dropbox.com/s/aox84z90nyyuikz/discovery.zip seems to work as expected\r\n\r\n",

"Hi @severo, thanks for reporting.\r\n\r\nSome servers do not support HTTP range requests, and those are required to stream some file formats (like ZIP in this case).\r\n\r\nLet me try to propose a workaround. "

] | 2022-02-03T15:02:03 | 2022-02-10T16:51:24 | 2022-02-10T16:51:24 | CONTRIBUTOR | null | null | null | ## Describe the bug

A clear and concise description of what the bug is.

## Steps to reproduce the bug

```python

from datasets import load_dataset

iterable_dataset = load_dataset("discovery", name="discovery", split="train", streaming=True)

list(iterable_dataset.take(1))

```

## Expected results

The first row of the train split.

## Actual results

```

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "/home/slesage/hf/datasets-preview-backend/.venv/lib/python3.9/site-packages/datasets/iterable_dataset.py", line 365, in __iter__

for key, example in self._iter():

File "/home/slesage/hf/datasets-preview-backend/.venv/lib/python3.9/site-packages/datasets/iterable_dataset.py", line 362, in _iter

yield from ex_iterable

File "/home/slesage/hf/datasets-preview-backend/.venv/lib/python3.9/site-packages/datasets/iterable_dataset.py", line 272, in __iter__

yield from islice(self.ex_iterable, self.n)

File "/home/slesage/hf/datasets-preview-backend/.venv/lib/python3.9/site-packages/datasets/iterable_dataset.py", line 79, in __iter__

yield from self.generate_examples_fn(**self.kwargs)

File "/home/slesage/.cache/huggingface/modules/datasets_modules/datasets/discovery/542fab7a9ddc1d9726160355f7baa06a1ccc44c40bc8e12c09e9bc743aca43a2/discovery.py", line 333, in _generate_examples

with open(data_file, encoding="utf8") as f:

File "/home/slesage/hf/datasets-preview-backend/.venv/lib/python3.9/site-packages/datasets/streaming.py", line 64, in wrapper

return function(*args, use_auth_token=use_auth_token, **kwargs)

File "/home/slesage/hf/datasets-preview-backend/.venv/lib/python3.9/site-packages/datasets/utils/streaming_download_manager.py", line 369, in xopen

file_obj = fsspec.open(file, mode=mode, *args, **kwargs).open()

File "/home/slesage/hf/datasets-preview-backend/.venv/lib/python3.9/site-packages/fsspec/core.py", line 456, in open

return open_files(

File "/home/slesage/hf/datasets-preview-backend/.venv/lib/python3.9/site-packages/fsspec/core.py", line 288, in open_files

fs, fs_token, paths = get_fs_token_paths(

File "/home/slesage/hf/datasets-preview-backend/.venv/lib/python3.9/site-packages/fsspec/core.py", line 611, in get_fs_token_paths

fs = filesystem(protocol, **inkwargs)

File "/home/slesage/hf/datasets-preview-backend/.venv/lib/python3.9/site-packages/fsspec/registry.py", line 253, in filesystem

return cls(**storage_options)

File "/home/slesage/hf/datasets-preview-backend/.venv/lib/python3.9/site-packages/fsspec/spec.py", line 68, in __call__

obj = super().__call__(*args, **kwargs)

File "/home/slesage/hf/datasets-preview-backend/.venv/lib/python3.9/site-packages/fsspec/implementations/zip.py", line 57, in __init__

self.zip = zipfile.ZipFile(self.fo)

File "/home/slesage/.pyenv/versions/3.9.6/lib/python3.9/zipfile.py", line 1257, in __init__

self._RealGetContents()

File "/home/slesage/.pyenv/versions/3.9.6/lib/python3.9/zipfile.py", line 1320, in _RealGetContents

endrec = _EndRecData(fp)

File "/home/slesage/.pyenv/versions/3.9.6/lib/python3.9/zipfile.py", line 263, in _EndRecData

fpin.seek(0, 2)

File "/home/slesage/hf/datasets-preview-backend/.venv/lib/python3.9/site-packages/fsspec/implementations/http.py", line 676, in seek

raise ValueError("Cannot seek streaming HTTP file")

ValueError: Cannot seek streaming HTTP file

```

## Environment info

- `datasets` version: 1.18.3

- Platform: Linux-5.11.0-1027-aws-x86_64-with-glibc2.31

- Python version: 3.9.6

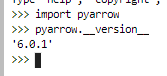

- PyArrow version: 6.0.1

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3677/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3677/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/3676 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3676/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3676/comments | https://api.github.com/repos/huggingface/datasets/issues/3676/events | https://github.com/huggingface/datasets/issues/3676 | 1,123,096,362 | I_kwDODunzps5C8Rcq | 3,676 | `None` replaced by `[]` after first batch in map | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [

{

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

}

] | null | [

"It looks like this is because of this behavior in pyarrow:\r\n```python\r\nimport pyarrow as pa\r\n\r\narr = pa.array([None, [0]])\r\nreconstructed_arr = pa.ListArray.from_arrays(arr.offsets, arr.values)\r\nprint(reconstructed_arr.to_pylist())\r\n# [[], [0]]\r\n```\r\n\r\nIt seems that `arr.offsets` can reconstruct the array properly, but an offsets array with null values can:\r\n```python\r\nfixed_offsets = pa.array([None, 0, 1])\r\nfixed_arr = pa.ListArray.from_arrays(fixed_offsets, arr.values)\r\nprint(fixed_arr.to_pylist())\r\n# [None, [0]]\r\n\r\nprint(arr.offsets.to_pylist())\r\n# [0, 0, 1]\r\nprint(fixed_offsets.to_pylist())\r\n# [None, 0, 1]\r\n```\r\nEDIT: this is because `arr.offsets` is not enough to reconstruct the array, we also need the validity bitmap",

"The offsets don't have nulls because they don't include the validity bitmap from `arr.buffers()[0]`, which is used to say which values are null and which values are non-null.\r\n\r\nThough the validity bitmap also seems to be wrong:\r\n```python\r\nbin(int(arr.buffers()[0].hex(), 16))\r\n# '0b10'\r\n# it should be 0b110 - 1 corresponds to non-null and 0 corresponds to null, if you take the bits in reverse order\r\n```\r\n\r\nSo apparently I can't even create the fixed offsets array using this.\r\n\r\nIf I understand correctly it's always missing the 1 on the left, so I can add it manually as a hack to fix the issue until this is fixed in pyarrow EDIT: actually it may be more complicated than that\r\n\r\nEDIT2: actuall it's right, it corresponds to the validity bitmap of the array of logical length 2. So if we use the offsets array, the values array, and this validity bitmap it should be possible to reconstruct the array properly",

"I created an issue on Apache Arrow's JIRA: https://issues.apache.org/jira/browse/ARROW-15837",

"And another one: https://issues.apache.org/jira/browse/ARROW-15839",

"FYI the behavior is the same with:\r\n- `datasets` version: 1.18.3\r\n- Platform: Linux-5.8.0-50-generic-x86_64-with-debian-bullseye-sid\r\n- Python version: 3.7.11\r\n- PyArrow version: 6.0.1\r\n\r\n\r\nbut not with:\r\n- `datasets` version: 1.8.0\r\n- Platform: Linux-4.18.0-305.40.2.el8_4.x86_64-x86_64-with-redhat-8.4-Ootpa\r\n- Python version: 3.7.11\r\n- PyArrow version: 3.0.0\r\n\r\ni.e. it outputs:\r\n```py\r\n0 [None, [0]]\r\n1 [None, [0]]\r\n2 [None, [0]]\r\n3 [None, [0]]\r\n```\r\n",

"Thanks for the insights @PaulLerner !\r\n\r\nI found a way to workaround this issue for the code example presented in this issue.\r\n\r\nNote that empty lists will still appear when you explicitly `cast` a list of lists that contain None values like [None, [0]] to a new feature type (e.g. to change the integer precision). In this case it will show a warning that it happened. If you don't cast anything, then the None values will be kept as expected.\r\n\r\nLet me know what you think !",

"Hi! I feel like I’m missing something in your answer, *what* is the workaround? Is it fixed in some `datasets` version?",

"`pa.ListArray.from_arrays` returns empty lists instead of None values. The workaround I added inside `datasets` simply consists in not using `pa.ListArray.from_arrays` :)\r\n\r\nOnce this PR [here ](https://github.com/huggingface/datasets/pull/4282)is merged, we'll release a new version of `datasets` that currectly returns the None values in the case described in this issue\r\n\r\nEDIT: released :) but let's keep this issue open because it might happen again if users change the integer precision for example"

] | 2022-02-03T13:36:48 | 2022-10-28T13:13:20 | 2022-10-28T13:13:20 | MEMBER | null | null | null | Sometimes `None` can be replaced by `[]` when running map:

```python

from datasets import Dataset

ds = Dataset.from_dict({"a": range(4)})

ds = ds.map(lambda x: {"b": [[None, [0]]]}, batched=True, batch_size=1, remove_columns=["a"])

print(ds.to_pandas())

# b

# 0 [None, [0]]

# 1 [[], [0]]

# 2 [[], [0]]

# 3 [[], [0]]

```

This issue has been experienced when running the `run_qa.py` example from `transformers` (see issue https://github.com/huggingface/transformers/issues/15401)

This can be due to a bug in when casting `None` in nested lists. Casting only happens after the first batch, since the first batch is used to infer the feature types.

cc @sgugger | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3676/reactions",

"total_count": 3,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 1,

"rocket": 0,

"eyes": 2

} | https://api.github.com/repos/huggingface/datasets/issues/3676/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/3675 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3675/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3675/comments | https://api.github.com/repos/huggingface/datasets/issues/3675/events | https://github.com/huggingface/datasets/issues/3675 | 1,123,078,408 | I_kwDODunzps5C8NEI | 3,675 | Add CodeContests dataset | {

"login": "mariosasko",

"id": 47462742,

"node_id": "MDQ6VXNlcjQ3NDYyNzQy",

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/mariosasko",

"html_url": "https://github.com/mariosasko",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url": "https://api.github.com/users/mariosasko/gists{/gist_id}",

"starred_url": "https://api.github.com/users/mariosasko/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/mariosasko/subscriptions",

"organizations_url": "https://api.github.com/users/mariosasko/orgs",

"repos_url": "https://api.github.com/users/mariosasko/repos",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"received_events_url": "https://api.github.com/users/mariosasko/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 2067376369,

"node_id": "MDU6TGFiZWwyMDY3Mzc2MzY5",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset%20request",

"name": "dataset request",

"color": "e99695",

"default": false,

"description": "Requesting to add a new dataset"

}

] | closed | false | null | [] | null | [

"@mariosasko Can I take this up?",

"This dataset is now available here: https://huggingface.co./datasets/deepmind/code_contests."

] | 2022-02-03T13:20:00 | 2022-07-20T11:07:05 | 2022-07-20T11:07:05 | CONTRIBUTOR | null | null | null | ## Adding a Dataset

- **Name:** CodeContests

- **Description:** CodeContests is a competitive programming dataset for machine-learning.

- **Paper:**

- **Data:** https://github.com/deepmind/code_contests

- **Motivation:** This dataset was used when training [AlphaCode](https://deepmind.com/blog/article/Competitive-programming-with-AlphaCode).

Instructions to add a new dataset can be found [here](https://github.com/huggingface/datasets/blob/master/ADD_NEW_DATASET.md).

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3675/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3675/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/3674 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3674/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3674/comments | https://api.github.com/repos/huggingface/datasets/issues/3674/events | https://github.com/huggingface/datasets/pull/3674 | 1,123,027,874 | PR_kwDODunzps4yBe17 | 3,674 | Add FrugalScore metric | {

"login": "moussaKam",

"id": 28675016,

"node_id": "MDQ6VXNlcjI4Njc1MDE2",

"avatar_url": "https://avatars.githubusercontent.com/u/28675016?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/moussaKam",

"html_url": "https://github.com/moussaKam",

"followers_url": "https://api.github.com/users/moussaKam/followers",

"following_url": "https://api.github.com/users/moussaKam/following{/other_user}",

"gists_url": "https://api.github.com/users/moussaKam/gists{/gist_id}",

"starred_url": "https://api.github.com/users/moussaKam/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/moussaKam/subscriptions",

"organizations_url": "https://api.github.com/users/moussaKam/orgs",

"repos_url": "https://api.github.com/users/moussaKam/repos",

"events_url": "https://api.github.com/users/moussaKam/events{/privacy}",

"received_events_url": "https://api.github.com/users/moussaKam/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"@lhoestq \r\n\r\nThe model used by default (`moussaKam/frugalscore_tiny_bert-base_bert-score`) is a tiny model.\r\n\r\nI still want to make one modification before merging.\r\nI would like to load the model checkpoint once. Do you think it's a good idea if I load it in `_download_and_prepare`? In this case should the model name be the `self.config_name` or another variable say `self.model_name` ? ",

"OK, I added a commit that loads the checkpoint in `_download_and_prepare`. Please let me know if it looks good. ",

"@lhoestq is everything OK to merge? ",

"I triggered the CI and it's failing, can you merge the `master` branch into yours ? It should fix the issues.\r\n\r\nAlso the doctest apparently raises an error because it outputs `{'scores': [0.6307542, 0.6449357]}` instead of `{'scores': [0.631, 0.645]}` - feel free to edit the code example in the docstring to round the scores, that should fix it",

"@lhoestq hope it's OK now"

] | 2022-02-03T12:28:52 | 2022-02-21T15:58:44 | 2022-02-21T15:58:44 | CONTRIBUTOR | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3674",

"html_url": "https://github.com/huggingface/datasets/pull/3674",

"diff_url": "https://github.com/huggingface/datasets/pull/3674.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3674.patch",

"merged_at": "2022-02-21T15:58:44"

} | This pull request add FrugalScore metric for NLG systems evaluation.

FrugalScore is a reference-based metric for NLG models evaluation. It is based on a distillation approach that allows to learn a fixed, low cost version of any expensive NLG metric, while retaining most of its original performance.

Paper: https://arxiv.org/abs/2110.08559?context=cs

Github: https://github.com/moussaKam/FrugalScore

@lhoestq | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3674/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3674/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3673 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3673/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3673/comments | https://api.github.com/repos/huggingface/datasets/issues/3673/events | https://github.com/huggingface/datasets/issues/3673 | 1,123,010,520 | I_kwDODunzps5C78fY | 3,673 | `load_dataset("snli")` is different from dataset viewer | {

"login": "pietrolesci",

"id": 61748653,

"node_id": "MDQ6VXNlcjYxNzQ4NjUz",

"avatar_url": "https://avatars.githubusercontent.com/u/61748653?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/pietrolesci",

"html_url": "https://github.com/pietrolesci",

"followers_url": "https://api.github.com/users/pietrolesci/followers",

"following_url": "https://api.github.com/users/pietrolesci/following{/other_user}",

"gists_url": "https://api.github.com/users/pietrolesci/gists{/gist_id}",

"starred_url": "https://api.github.com/users/pietrolesci/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/pietrolesci/subscriptions",

"organizations_url": "https://api.github.com/users/pietrolesci/orgs",

"repos_url": "https://api.github.com/users/pietrolesci/repos",

"events_url": "https://api.github.com/users/pietrolesci/events{/privacy}",

"received_events_url": "https://api.github.com/users/pietrolesci/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

},

{

"id": 3470211881,

"node_id": "LA_kwDODunzps7O1zsp",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset-viewer",

"name": "dataset-viewer",

"color": "E5583E",

"default": false,

"description": "Related to the dataset viewer on huggingface.co"

}

] | closed | false | {

"login": "severo",

"id": 1676121,

"node_id": "MDQ6VXNlcjE2NzYxMjE=",

"avatar_url": "https://avatars.githubusercontent.com/u/1676121?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/severo",

"html_url": "https://github.com/severo",

"followers_url": "https://api.github.com/users/severo/followers",

"following_url": "https://api.github.com/users/severo/following{/other_user}",

"gists_url": "https://api.github.com/users/severo/gists{/gist_id}",

"starred_url": "https://api.github.com/users/severo/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/severo/subscriptions",

"organizations_url": "https://api.github.com/users/severo/orgs",

"repos_url": "https://api.github.com/users/severo/repos",

"events_url": "https://api.github.com/users/severo/events{/privacy}",

"received_events_url": "https://api.github.com/users/severo/received_events",

"type": "User",

"site_admin": false

} | [

{

"login": "severo",

"id": 1676121,

"node_id": "MDQ6VXNlcjE2NzYxMjE=",

"avatar_url": "https://avatars.githubusercontent.com/u/1676121?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/severo",

"html_url": "https://github.com/severo",

"followers_url": "https://api.github.com/users/severo/followers",

"following_url": "https://api.github.com/users/severo/following{/other_user}",

"gists_url": "https://api.github.com/users/severo/gists{/gist_id}",

"starred_url": "https://api.github.com/users/severo/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/severo/subscriptions",

"organizations_url": "https://api.github.com/users/severo/orgs",

"repos_url": "https://api.github.com/users/severo/repos",

"events_url": "https://api.github.com/users/severo/events{/privacy}",

"received_events_url": "https://api.github.com/users/severo/received_events",

"type": "User",

"site_admin": false

}

] | null | [

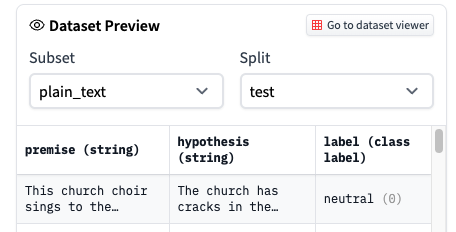

"Yes, we decided to replace the encoded label with the corresponding label when possible in the dataset viewer. But\r\n1. maybe it's the wrong default\r\n2. we could find a way to show both (with a switch, or showing both ie. `0 (neutral)`).\r\n",

"Hi @severo,\r\n\r\nThanks for clarifying. \r\n\r\nI think this default is a bit counterintuitive for the user. However, this is a personal opinion that might not be general. I think it is nice to have the actual (non-encoded) labels in the viewer. On the other hand, it would be nice to match what the user sees with what they get when they download a dataset. I don't know - I can see the difficulty of choosing a default :)\r\nMaybe having non-encoded labels as a default can be useful?\r\n\r\nAnyway, I think the issue has been addressed. Thanks a lot for your super-quick answer!\r\n\r\n ",

"Thanks for the 👍 in https://github.com/huggingface/datasets/issues/3673#issuecomment-1029008349 @mariosasko @gary149 @pietrolesci, but as I proposed various solutions, it's not clear to me which you prefer. Could you write your preferences as a comment?\r\n\r\n_(note for myself: one idea per comment in the future)_",

"As I am working with seq2seq, I prefer having the label in string form rather than numeric. So the viewer is fine and the underlying dataset should be \"decoded\" (from int to str). In this way, the user does not have to search for a mapping `int -> original name` (even though is trivial to find, I reckon). Also, encoding labels is rather easy.\r\n\r\nI hope this is useful",

"I like the idea of \"0 (neutral)\". The label name can even be greyed to make it clear that it's not part of the actual item in the dataset, it's just the meaning.",

"I like @lhoestq's idea of having grayed-out labels.",

"Proposals by @gary149. Which one do you prefer? Please vote with the thumbs\r\n\r\n- 👍 \r\n\r\n \r\n\r\n- 👎 \r\n\r\n \r\n\r\n",

"I like Option 1 better as it shows clearly what the user is downloading",

"Thanks! ",

"It's [live](https://huggingface.co./datasets/glue/viewer/cola/train):\r\n\r\n<img width=\"1126\" alt=\"Capture d’écran 2022-02-14 à 10 26 03\" src=\"https://user-images.githubusercontent.com/1676121/153836716-25f6205b-96af-42d8-880a-7c09cb24c420.png\">\r\n\r\nThanks all for the help to improve the UI!",

"Love it ! thanks :)"

] | 2022-02-03T12:10:43 | 2022-02-16T11:22:31 | 2022-02-11T17:01:21 | NONE | null | null | null | ## Describe the bug

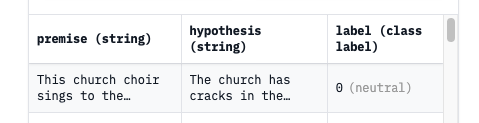

The dataset that is downloaded from the Hub via `load_dataset("snli")` is different from what is available in the dataset viewer. In the viewer the labels are not encoded (i.e., "neutral", "entailment", "contradiction"), while the downloaded dataset shows the encoded labels (i.e., 0, 1, 2).

Is this expected?

## Environment info

<!-- You can run the command `datasets-cli env` and copy-and-paste its output below. -->

- `datasets` version:

- Platform: Ubuntu 20.4

- Python version: 3.7

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3673/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3673/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/3672 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3672/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3672/comments | https://api.github.com/repos/huggingface/datasets/issues/3672/events | https://github.com/huggingface/datasets/pull/3672 | 1,122,980,556 | PR_kwDODunzps4yBUrZ | 3,672 | Prioritize `module.builder_kwargs` over defaults in `TestCommand` | {

"login": "lvwerra",

"id": 8264887,

"node_id": "MDQ6VXNlcjgyNjQ4ODc=",

"avatar_url": "https://avatars.githubusercontent.com/u/8264887?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lvwerra",

"html_url": "https://github.com/lvwerra",

"followers_url": "https://api.github.com/users/lvwerra/followers",

"following_url": "https://api.github.com/users/lvwerra/following{/other_user}",

"gists_url": "https://api.github.com/users/lvwerra/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lvwerra/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lvwerra/subscriptions",

"organizations_url": "https://api.github.com/users/lvwerra/orgs",

"repos_url": "https://api.github.com/users/lvwerra/repos",

"events_url": "https://api.github.com/users/lvwerra/events{/privacy}",

"received_events_url": "https://api.github.com/users/lvwerra/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [] | 2022-02-03T11:38:42 | 2022-02-04T12:37:20 | 2022-02-04T12:37:19 | MEMBER | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3672",

"html_url": "https://github.com/huggingface/datasets/pull/3672",

"diff_url": "https://github.com/huggingface/datasets/pull/3672.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3672.patch",

"merged_at": "2022-02-04T12:37:19"

} | This fixes a bug in the `TestCommand` where multiple kwargs for `name` were passed if it was set in both default and `module.builder_kwargs`. Example error:

```Python

Traceback (most recent call last):

File "create_metadata.py", line 96, in <module>

main(**vars(args))

File "create_metadata.py", line 86, in main

metadata_command.run()

File "/opt/conda/lib/python3.7/site-packages/datasets/commands/test.py", line 144, in run

for j, builder in enumerate(get_builders()):

File "/opt/conda/lib/python3.7/site-packages/datasets/commands/test.py", line 141, in get_builders

name=name, cache_dir=self._cache_dir, data_dir=self._data_dir, **module.builder_kwargs

TypeError: type object got multiple values for keyword argument 'name'

```

Let me know what you think. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3672/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3672/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3671 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3671/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3671/comments | https://api.github.com/repos/huggingface/datasets/issues/3671/events | https://github.com/huggingface/datasets/issues/3671 | 1,122,864,253 | I_kwDODunzps5C7Yx9 | 3,671 | Give an estimate of the dataset size in DatasetInfo | {

"login": "severo",

"id": 1676121,

"node_id": "MDQ6VXNlcjE2NzYxMjE=",

"avatar_url": "https://avatars.githubusercontent.com/u/1676121?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/severo",

"html_url": "https://github.com/severo",

"followers_url": "https://api.github.com/users/severo/followers",

"following_url": "https://api.github.com/users/severo/following{/other_user}",

"gists_url": "https://api.github.com/users/severo/gists{/gist_id}",

"starred_url": "https://api.github.com/users/severo/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/severo/subscriptions",

"organizations_url": "https://api.github.com/users/severo/orgs",

"repos_url": "https://api.github.com/users/severo/repos",

"events_url": "https://api.github.com/users/severo/events{/privacy}",

"received_events_url": "https://api.github.com/users/severo/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892871,

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement",

"name": "enhancement",

"color": "a2eeef",

"default": true,

"description": "New feature or request"

}

] | open | false | null | [] | null | [] | 2022-02-03T09:47:10 | 2022-02-03T09:47:10 | null | CONTRIBUTOR | null | null | null | **Is your feature request related to a problem? Please describe.**

Currently, only part of the datasets provide `dataset_size`, `download_size`, `size_in_bytes` (and `num_bytes` and `num_examples` inside `splits`). I would want to get this information, or an estimation, for all the datasets.

**Describe the solution you'd like**

- get access to the git information for the dataset files hosted on the hub

- look at the [`Content-Length`](https://developer.mozilla.org/en-US/docs/Web/HTTP/Headers/Content-Length) for the files served by HTTP

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3671/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3671/timeline | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/3670 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3670/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3670/comments | https://api.github.com/repos/huggingface/datasets/issues/3670/events | https://github.com/huggingface/datasets/pull/3670 | 1,122,439,827 | PR_kwDODunzps4x_kBx | 3,670 | feat: 🎸 generate info if dataset_infos.json does not exist | {

"login": "severo",

"id": 1676121,

"node_id": "MDQ6VXNlcjE2NzYxMjE=",

"avatar_url": "https://avatars.githubusercontent.com/u/1676121?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/severo",

"html_url": "https://github.com/severo",

"followers_url": "https://api.github.com/users/severo/followers",

"following_url": "https://api.github.com/users/severo/following{/other_user}",

"gists_url": "https://api.github.com/users/severo/gists{/gist_id}",

"starred_url": "https://api.github.com/users/severo/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/severo/subscriptions",

"organizations_url": "https://api.github.com/users/severo/orgs",

"repos_url": "https://api.github.com/users/severo/repos",

"events_url": "https://api.github.com/users/severo/events{/privacy}",

"received_events_url": "https://api.github.com/users/severo/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"It's a first attempt at solving https://github.com/huggingface/datasets/issues/3013.",

"I only kept these ones:\r\n```\r\n path: str,\r\n data_files: Optional[Union[Dict, List, str]] = None,\r\n download_config: Optional[DownloadConfig] = None,\r\n download_mode: Optional[GenerateMode] = None,\r\n revision: Optional[Union[str, Version]] = None,\r\n use_auth_token: Optional[Union[bool, str]] = None,\r\n **config_kwargs,\r\n```\r\n\r\nLet me know if it's better for you now !\r\n\r\n(note that there's no breaking change since the ones that are removed can be passed as config_kwargs if you really want)",

"(https://github.com/huggingface/datasets/pull/3670/commits/5636911880ea4306c27c7f5825fa3f9427ccc2b6 and https://github.com/huggingface/datasets/pull/3670/commits/07c3f0800dd34dfebb9674ad46c67a907b08ded8 -> I has forgotten to update black in my venv)"

] | 2022-02-02T22:11:56 | 2022-02-21T15:57:11 | 2022-02-21T15:57:10 | CONTRIBUTOR | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3670",

"html_url": "https://github.com/huggingface/datasets/pull/3670",

"diff_url": "https://github.com/huggingface/datasets/pull/3670.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3670.patch",

"merged_at": "2022-02-21T15:57:10"

} | in get_dataset_infos(). Also: add the `use_auth_token` parameter, and create get_dataset_config_info()

✅ Closes: #3013 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3670/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3670/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3669 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3669/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3669/comments | https://api.github.com/repos/huggingface/datasets/issues/3669/events | https://github.com/huggingface/datasets/pull/3669 | 1,122,335,622 | PR_kwDODunzps4x_OTI | 3,669 | Common voice validated partition | {

"login": "shalymin-amzn",

"id": 98762373,

"node_id": "U_kgDOBeL-hQ",

"avatar_url": "https://avatars.githubusercontent.com/u/98762373?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/shalymin-amzn",

"html_url": "https://github.com/shalymin-amzn",

"followers_url": "https://api.github.com/users/shalymin-amzn/followers",

"following_url": "https://api.github.com/users/shalymin-amzn/following{/other_user}",

"gists_url": "https://api.github.com/users/shalymin-amzn/gists{/gist_id}",

"starred_url": "https://api.github.com/users/shalymin-amzn/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/shalymin-amzn/subscriptions",

"organizations_url": "https://api.github.com/users/shalymin-amzn/orgs",

"repos_url": "https://api.github.com/users/shalymin-amzn/repos",

"events_url": "https://api.github.com/users/shalymin-amzn/events{/privacy}",

"received_events_url": "https://api.github.com/users/shalymin-amzn/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"Hi @patrickvonplaten - could you please advise whether this would be a welcomed change, and if so, who I consult regarding the unit-tests?",

"I'd be happy with adding this change. @anton-l @lhoestq - what do you think?",

"Cool ! I just fixed the tests by adding a dummy `validated.tsv` file in the dummy data archive of common_voice\r\n\r\nI wonder if you should separate the train/valid/test configuration from the validated/invalidated configuration of the splits ? \r\nIn particular having `validated` along with the train/valid/test splits could be a bit weird since it comprises them. We can do that if you think it makes more sense. Otherwise it's also good as it is right now :)\r\n",

"Thanks! I think that there are 2 cases for using the validated partition: 1) trainset = {validated - dev - test}, dev and test as they come; 2) train, dev, and test sampled from validated manually with the desired ratios.\r\nIn either case, I think that it's quite a big change on the HF interface part, so could as well be taken care of in the client code. Or is it not? (In which case, what's the most compact way to implement this?)",

"What's important IMO is to let the users as much flexibility as they need - so we try to not do too much regarding splits to not constrain users. So I guess the way it is right now is ok. Can you confirm that it's ok @patrickvonplaten and that it won't break some speech training script out there ?",

"@lhoestq all split names are explicit in our example scripts, so this shouldn't break anything, feel free to merge :)\r\nI'll go ahead and add this to the official `mozilla-foundation` datasets as well ",

"Good for me! This has no real down-sides IMO and surely won't break any training scripts."

] | 2022-02-02T20:04:43 | 2022-02-08T17:26:52 | 2022-02-08T17:23:12 | CONTRIBUTOR | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3669",

"html_url": "https://github.com/huggingface/datasets/pull/3669",

"diff_url": "https://github.com/huggingface/datasets/pull/3669.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3669.patch",

"merged_at": "2022-02-08T17:23:12"

} | This patch adds access to the 'validated' partitions of CommonVoice datasets (provided by the dataset creators but not available in the HuggingFace interface yet).

As 'validated' contains significantly more data than 'train' (although it contains both test and validation, so one needs to be careful there), it can be useful to train better models where no strict comparison with the previous work is intended. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3669/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3669/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3668 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3668/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3668/comments | https://api.github.com/repos/huggingface/datasets/issues/3668/events | https://github.com/huggingface/datasets/issues/3668 | 1,122,261,736 | I_kwDODunzps5C5Fro | 3,668 | Couldn't cast array of type string error with cast_column | {

"login": "R4ZZ3",

"id": 25264037,

"node_id": "MDQ6VXNlcjI1MjY0MDM3",

"avatar_url": "https://avatars.githubusercontent.com/u/25264037?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/R4ZZ3",

"html_url": "https://github.com/R4ZZ3",

"followers_url": "https://api.github.com/users/R4ZZ3/followers",

"following_url": "https://api.github.com/users/R4ZZ3/following{/other_user}",

"gists_url": "https://api.github.com/users/R4ZZ3/gists{/gist_id}",

"starred_url": "https://api.github.com/users/R4ZZ3/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/R4ZZ3/subscriptions",

"organizations_url": "https://api.github.com/users/R4ZZ3/orgs",

"repos_url": "https://api.github.com/users/R4ZZ3/repos",

"events_url": "https://api.github.com/users/R4ZZ3/events{/privacy}",

"received_events_url": "https://api.github.com/users/R4ZZ3/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

}

] | closed | false | null | [] | null | [

"Hi ! I wasn't able to reproduce the error, are you still experiencing this ? I tried calling `cast_column` on a string column containing paths.\r\n\r\nIf you manage to share a reproducible code example that would be perfect",

"Hi,\r\n\r\nI think my team mate got this solved. Clolsing it for now and will reopen if I experience this again.\r\nThanks :) ",

"Hi @R4ZZ3,\r\n\r\nIf it is not too much of a bother, can you please help me how to resolve this error? I am exactly getting the same error where I am going as per the documentation guideline:\r\n\r\n`my_audio_dataset = my_audio_dataset.cast_column(\"audio_paths\", Audio())`\r\n\r\nwhere `\"audio_paths\"` is a dataset column (feature) having strings of absolute paths to mp3 files of the dataset.\r\n\r\n",

"I was having the same issue with this code:\r\n\r\n```\r\ndataset = dataset.map(\r\n lambda batch: {\"full_path\" : os.path.join(self.data_path, batch[\"path\"])},\r\n num_procs = 4\r\n)\r\nmy_audio_dataset = dataset.cast_column(\"full_path\", Audio(sampling_rate=16_000))\r\n```\r\n\r\nRemoving the \"num_procs\" argument fixed it somehow.\r\nUsing a mac with m1 chip",

"Hi @Hubert-Bonisseur, I think this will be fixed by https://github.com/huggingface/datasets/pull/4614"

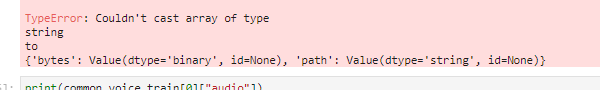

] | 2022-02-02T18:33:29 | 2022-07-19T13:36:24 | 2022-07-19T13:36:24 | NONE | null | null | null | ## Describe the bug

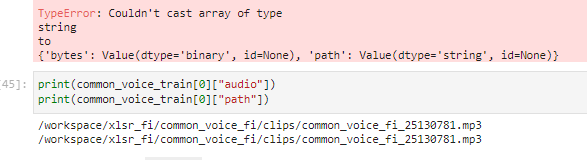

In OVH cloud during Huggingface Robust-speech-recognition event on a AI training notebook instance using jupyter lab and running jupyter notebook When using the dataset.cast_column("audio",Audio(sampling_rate=16_000))

method I get error

This was working with datasets version 1.17.1.dev0

but now with version 1.18.3 produces the error above.

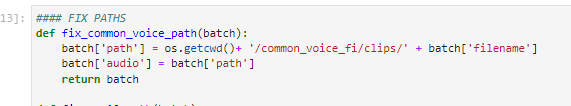

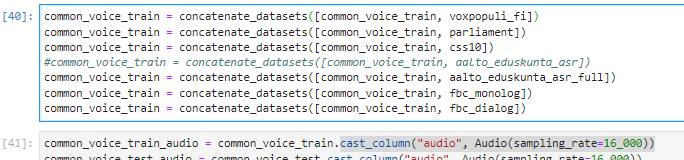

## Steps to reproduce the bug

load dataset:

remove columns:

run my fix_path function.

This also creates the audio column that is referring to the absolute file path of the audio

Then I concatenate few other datasets and finally try the cast_column method

but get error:

## Expected results

A clear and concise description of the expected results.

## Actual results

Specify the actual results or traceback.

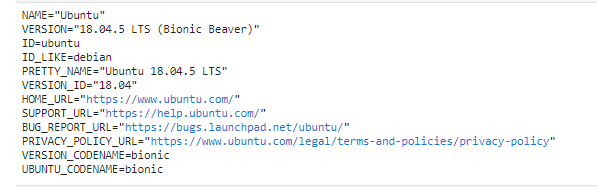

## Environment info

<!-- You can run the command `datasets-cli env` and copy-and-paste its output below. -->

- `datasets` version: 1.18.3

- Platform:

OVH Cloud, AI Training section, container for Huggingface Robust Speech Recognition event image(baaastijn/ovh_huggingface)

- Python version: 3.8.8

- PyArrow version:

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3668/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3668/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/3667 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3667/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3667/comments | https://api.github.com/repos/huggingface/datasets/issues/3667/events | https://github.com/huggingface/datasets/pull/3667 | 1,122,060,630 | PR_kwDODunzps4x-Ujt | 3,667 | Process .opus files with torchaudio | {

"login": "polinaeterna",

"id": 16348744,

"node_id": "MDQ6VXNlcjE2MzQ4NzQ0",

"avatar_url": "https://avatars.githubusercontent.com/u/16348744?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/polinaeterna",

"html_url": "https://github.com/polinaeterna",

"followers_url": "https://api.github.com/users/polinaeterna/followers",

"following_url": "https://api.github.com/users/polinaeterna/following{/other_user}",

"gists_url": "https://api.github.com/users/polinaeterna/gists{/gist_id}",

"starred_url": "https://api.github.com/users/polinaeterna/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/polinaeterna/subscriptions",

"organizations_url": "https://api.github.com/users/polinaeterna/orgs",

"repos_url": "https://api.github.com/users/polinaeterna/repos",

"events_url": "https://api.github.com/users/polinaeterna/events{/privacy}",

"received_events_url": "https://api.github.com/users/polinaeterna/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | {

"login": "polinaeterna",

"id": 16348744,

"node_id": "MDQ6VXNlcjE2MzQ4NzQ0",

"avatar_url": "https://avatars.githubusercontent.com/u/16348744?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/polinaeterna",

"html_url": "https://github.com/polinaeterna",

"followers_url": "https://api.github.com/users/polinaeterna/followers",

"following_url": "https://api.github.com/users/polinaeterna/following{/other_user}",

"gists_url": "https://api.github.com/users/polinaeterna/gists{/gist_id}",

"starred_url": "https://api.github.com/users/polinaeterna/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/polinaeterna/subscriptions",

"organizations_url": "https://api.github.com/users/polinaeterna/orgs",

"repos_url": "https://api.github.com/users/polinaeterna/repos",

"events_url": "https://api.github.com/users/polinaeterna/events{/privacy}",

"received_events_url": "https://api.github.com/users/polinaeterna/received_events",

"type": "User",

"site_admin": false

} | [

{

"login": "polinaeterna",

"id": 16348744,

"node_id": "MDQ6VXNlcjE2MzQ4NzQ0",

"avatar_url": "https://avatars.githubusercontent.com/u/16348744?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/polinaeterna",

"html_url": "https://github.com/polinaeterna",

"followers_url": "https://api.github.com/users/polinaeterna/followers",

"following_url": "https://api.github.com/users/polinaeterna/following{/other_user}",

"gists_url": "https://api.github.com/users/polinaeterna/gists{/gist_id}",

"starred_url": "https://api.github.com/users/polinaeterna/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/polinaeterna/subscriptions",

"organizations_url": "https://api.github.com/users/polinaeterna/orgs",

"repos_url": "https://api.github.com/users/polinaeterna/repos",

"events_url": "https://api.github.com/users/polinaeterna/events{/privacy}",

"received_events_url": "https://api.github.com/users/polinaeterna/received_events",

"type": "User",

"site_admin": false

}

] | null | [

"Note that torchaudio is maybe less practical to use for TF or JAX users.\r\nThis is not in the scope of this PR, but in the future if we manage to find a way to let the user control the decoding it would be nice",

"> Note that torchaudio is maybe less practical to use for TF or JAX users. This is not in the scope of this PR, but in the future if we manage to find a way to let the user control the decoding it would be nice\r\n\r\n@lhoestq so maybe don't do this PR? :) if it doesn't work anyway with an opened file, only with path",

"Yes as discussed offline there seems to be issues with torchaudio on opened files. Feel free to close this PR if it's better to stick with soundfile because of that",

"We should be able to remove torchaudio, which has torch as a hard dependency, soon and use only soundfile for decoding: https://github.com/bastibe/python-soundfile/issues/252#issuecomment-1000246773 (opus + mp3 support is on the way)."

] | 2022-02-02T15:23:14 | 2022-02-04T15:29:38 | 2022-02-04T15:29:38 | CONTRIBUTOR | null | true | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3667",

"html_url": "https://github.com/huggingface/datasets/pull/3667",

"diff_url": "https://github.com/huggingface/datasets/pull/3667.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3667.patch",

"merged_at": null

} | @anton-l suggested to proccess .opus files with `torchaudio` instead of `soundfile` as it's faster:

(moreover, I didn't manage to load .opus files with `soundfile` / `librosa` locally on any my machine anyway for some reason, even with `ffmpeg` installed).

For now my current changes work with locally stored file:

```python

# download sample opus file (from MultilingualSpokenWords dataset)

!wget https://huggingface.co./datasets/polinaeterna/test_opus/resolve/main/common_voice_tt_17737010.opus

from datasets import Dataset, Audio

audio_path = "common_voice_tt_17737010.opus"

dataset = Dataset.from_dict({"audio": [audio_path]}).cast_column("audio", Audio(48000))

dataset[0]

# {'audio': {'path': 'common_voice_tt_17737010.opus',

# 'array': array([ 0.0000000e+00, 0.0000000e+00, 3.0517578e-05, ...,

# -6.1035156e-05, 6.1035156e-05, 0.0000000e+00], dtype=float32),

# 'sampling_rate': 48000}}

```

But it doesn't work when loading inside s dataset from bytes (I checked on [MultilingualSpokenWords](https://github.com/huggingface/datasets/pull/3666), the PR is a draft now, maybe the bug is somewhere there )

```python

import torchaudio

with open(audio_path, "rb") as b:

print(torchaudio.load(b))

# RuntimeError: Error loading audio file: failed to open file <in memory buffer>

``` | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3667/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3667/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3666 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3666/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3666/comments | https://api.github.com/repos/huggingface/datasets/issues/3666/events | https://github.com/huggingface/datasets/pull/3666 | 1,122,058,894 | PR_kwDODunzps4x-ULz | 3,666 | process .opus files (for Multilingual Spoken Words) | {

"login": "polinaeterna",

"id": 16348744,

"node_id": "MDQ6VXNlcjE2MzQ4NzQ0",

"avatar_url": "https://avatars.githubusercontent.com/u/16348744?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/polinaeterna",

"html_url": "https://github.com/polinaeterna",

"followers_url": "https://api.github.com/users/polinaeterna/followers",

"following_url": "https://api.github.com/users/polinaeterna/following{/other_user}",

"gists_url": "https://api.github.com/users/polinaeterna/gists{/gist_id}",

"starred_url": "https://api.github.com/users/polinaeterna/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/polinaeterna/subscriptions",

"organizations_url": "https://api.github.com/users/polinaeterna/orgs",

"repos_url": "https://api.github.com/users/polinaeterna/repos",

"events_url": "https://api.github.com/users/polinaeterna/events{/privacy}",

"received_events_url": "https://api.github.com/users/polinaeterna/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"@lhoestq I still have problems with processing `.opus` files with `soundfile` so I actually cannot fully check that it works but it should... Maybe this should be investigated in case of someone else would also have problems with that.\r\n\r\nAlso, as the data is in a private repo on the hub (before we come to a decision about audio data privacy), the needed checks cannot be done right now.",

"@lhoestq I check the data redownloading for configs sharing the same languages, you were right: the data is downloaded once for each language. But samples are generated from scratch each time. Is it a supposed behavior? ",

"> But samples are generated from scratch each time. Is it a supposed behavior?\r\n\r\nYea that's the way it works right now, because we generate one arrow file per configuration. Since changing the languages creates a new configuration, then it generates a new arrow file."

] | 2022-02-02T15:21:48 | 2022-02-22T10:04:03 | 2022-02-22T10:03:53 | CONTRIBUTOR | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3666",

"html_url": "https://github.com/huggingface/datasets/pull/3666",

"diff_url": "https://github.com/huggingface/datasets/pull/3666.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3666.patch",

"merged_at": "2022-02-22T10:03:53"

} | Opus files requires `libsndfile>=1.0.30`. Add check for this version and tests.

**outdated:**

Add [Multillingual Spoken Words dataset](https://mlcommons.org/en/multilingual-spoken-words/)

You can specify multiple languages for downloading 😌:

```python

ds = load_dataset("datasets/ml_spoken_words", languages=["ar", "tt"])

```

1. I didn't take into account that each time you pass a set of languages the data for a specific language is downloaded even if it was downloaded before (since these are custom configs like `ar+tt` and `ar+tt+br`. Maybe that wasn't a good idea?

2. The script will have to be slightly changed after merge of https://github.com/huggingface/datasets/pull/3664

2. Just can't figure out what wrong with dummy files... 😞 Maybe we should get rid of them at some point 😁 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3666/reactions",

"total_count": 1,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 1,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3666/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3665 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3665/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3665/comments | https://api.github.com/repos/huggingface/datasets/issues/3665/events | https://github.com/huggingface/datasets/pull/3665 | 1,121,753,385 | PR_kwDODunzps4x9TnU | 3,665 | Fix MP3 resampling when a dataset's audio files have different sampling rates | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [] | 2022-02-02T10:31:45 | 2022-02-02T10:52:26 | 2022-02-02T10:52:26 | MEMBER | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3665",

"html_url": "https://github.com/huggingface/datasets/pull/3665",

"diff_url": "https://github.com/huggingface/datasets/pull/3665.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3665.patch",

"merged_at": "2022-02-02T10:52:25"

} | The resampler needs to be updated if the `orig_freq` doesn't match the audio file sampling rate

Fix https://github.com/huggingface/datasets/issues/3662 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3665/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3665/timeline | null | null | true |

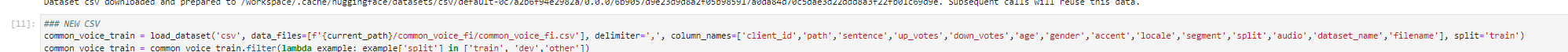

https://api.github.com/repos/huggingface/datasets/issues/3664 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3664/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3664/comments | https://api.github.com/repos/huggingface/datasets/issues/3664/events | https://github.com/huggingface/datasets/pull/3664 | 1,121,233,301 | PR_kwDODunzps4x7mg_ | 3,664 | [WIP] Return local paths to Common Voice | {

"login": "anton-l",

"id": 26864830,

"node_id": "MDQ6VXNlcjI2ODY0ODMw",

"avatar_url": "https://avatars.githubusercontent.com/u/26864830?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/anton-l",

"html_url": "https://github.com/anton-l",

"followers_url": "https://api.github.com/users/anton-l/followers",

"following_url": "https://api.github.com/users/anton-l/following{/other_user}",

"gists_url": "https://api.github.com/users/anton-l/gists{/gist_id}",

"starred_url": "https://api.github.com/users/anton-l/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/anton-l/subscriptions",

"organizations_url": "https://api.github.com/users/anton-l/orgs",

"repos_url": "https://api.github.com/users/anton-l/repos",

"events_url": "https://api.github.com/users/anton-l/events{/privacy}",

"received_events_url": "https://api.github.com/users/anton-l/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"Cool thanks for giving it a try @anton-l ! \r\n\r\nWould be very much in favor of having \"real\" paths to the audio files again for non-streaming use cases. At the same time it would be nice to make the audio data loading script as understandable as possible so that the community can easily add audio datasets in the future by looking at this one as an example. Think if it's clear for a contributor how to add an audio datasets script that works for the standard non-streaming case while it is easy to extend it afterwards to a streaming dataset script, then this would be perfect",

"@anton-l @patrickvonplaten @lhoestq Is it possible somehow to provide this logic inside the library instead of a loading script so that we don't need to completely rewrite all the scripts for audio datasets and users don't have to care about two different loading approaches in the same script? 🤔 ",

"> @anton-l @patrickvonplaten @lhoestq Is it possible somehow to provide this logic inside the library instead of a loading script so that we don't need to completely rewrite all the scripts for audio datasets and users don't have to care about two different loading approaches in the same script? thinking\r\n\r\nNot sure @lhoestq - what do you think? \r\n\r\nNow that we've corrected the previous resampling bug, think this one here is of high importance. @lhoestq - what do you think how we should proceed here? ",

"> @anton-l @patrickvonplaten @lhoestq Is it possible somehow to provide this logic inside the library instead of a loading script so that we don't need to completely rewrite all the scripts for audio datasets and users don't have to care about two different loading approaches in the same script? 🤔\r\n\r\nYes let's do this :)\r\n\r\nMaybe we can change the behavior of `DownloadManager.iter_archive` back to extracting the TAR archive locally, and return an iterable of (local path, file obj). And the `StreamingDownloadManager.iter_archive` can return an iterable of (relative path inside the archive, file obj) ?\r\n\r\nIn this case, a dataset would need to have something like this:\r\n```python\r\nfor path, f in files:\r\n yield id_, {\"audio\": {\"path\": path, \"bytes\": f.read() if not is_local_file(path) else None}}\r\n```\r\n\r\nAlternatively, we can allow this if we consider that `Audio.encode_example` sets the \"bytes\" field to `None` automatically if `path` is a local path:\r\n```python\r\nfor path, f in files:\r\n yield id_, {\"audio\": {\"path\": path, \"bytes\": f.read()}}\r\n```\r\nNote that in this case the file is read for nothing though (maybe it's not a big deal ?)\r\n\r\nLet me know if it sounds good to you and what you'd prefer !",

"@lhoestq I'm very much in favor of your first aproach! With the full paths returned I think we won't even need to mess with `os.path.join` vs `\"/\".join()\"` and other local/streaming differences 👍 ",

"@lhoestq I also like the idea and favor your first approach to avoid an unnecessary read and make yielding faster.",

"Looks cool - thanks for working on this. I just feel strongly about `path` being an absolute `path` that exist and can be inspected in the non-streaming case :-) For streaming=True IMO it's absolutely fine if we only have access to the bytes",

"Hi ! I started implementing this but I noticed that returning an absolute path is breaking for many datasets that do things like\r\n```python\r\nfor path, f in files:\r\n if path.startswith(data_dir):\r\n ...\r\n```\r\nso I think I will have to add a parameter to `iter_archive` like `extract_locally=True` to avoid the breaking change, does that sound good to you ?\r\n\r\nThis makes me also think that in streaming mode it could also return a local path too, if we think that writing and deleting temporary files on-the-fly while iterating over the streaming dataset is ok.",

"@lhoestq I think it is a good idea to rollback to extracting the archives locally in non-streaming mode, as far as (as you mentioned) we do not store the bytes in the Arrow file for those cases to avoid \"doubling\" the disk space usage.\r\n\r\nOn the other hand, I don't like:\r\n- neither the possibility to avoid extracting locally in non-streaming: the behavior should be consistent; thus we always extract in non-streaming\r\n - which could be the criterium to decide whether an archive should or should not be extracted? Just because I want to make a condition on path.startswith?\r\n- nor the option to download/delete temporary files in streaming (see discussion here: https://github.com/huggingface/datasets/pull/3689#issuecomment-1032858345)\r\n\r\nUnfortunately, in order to fix the datasets that are breaking after the rollback, I would suggest fixing their scripts so that the paths are handled more robustly (considering that they can be absolute or relative).",

"I agree with Albert, fixing all of the audio datasets isn't too big of a deal (yet). I can help with those if needed :)",

"Ok cool ! I'm completely rolling it back then",

"Alright I did the rollback and now you can get local paths :)\r\nFeel free to try it out and let me know if it's good for you",

"I'll fix the CI tomorrow x)",

"Ok according to the CI there around 60+ datasets to fix",

"> fixing all of the audio datasets isn't too big of a deal (yet). I can help with those if needed :)\r\n\r\nI can help with them too :)\r\n",

"Here is the full list to keep track of things:\r\n\r\n- [x] air_dialogue\r\n- [x] id_nergrit_corpus\r\n- [ ] id_newspapers_2018\r\n- [x] imdb\r\n- [ ] indic_glue\r\n- [ ] inquisitive_qg\r\n- [x] klue\r\n- [x] lama\r\n- [x] lex_glue\r\n- [ ] lm1b\r\n- [x] amazon_polarity\r\n- [ ] mac_morpho\r\n- [ ] math_dataset\r\n- [ ] md_gender_bias\r\n- [ ] mdd\r\n- [ ] assin\r\n- [ ] atomic\r\n- [ ] babi_qa\r\n- [ ] mlqa\r\n- [ ] mocha\r\n- [ ] blended_skill_talk\r\n- [ ] capes\r\n- [ ] cbt\r\n- [ ] newsgroup\r\n- [ ] cifar10\r\n- [ ] cifar100\r\n- [ ] norec\r\n- [ ] ohsumed\r\n- [ ] code_x_glue_cc_clone_detection_poj104\r\n- [x] openslr\r\n- [ ] orange_sum\r\n- [ ] paws\r\n- [ ] paws-x\r\n- [ ] cppe-5\r\n- [ ] polyglot_ner\r\n- [ ] dbrd\r\n- [ ] empathetic_dialogues\r\n- [ ] eraser_multi_rc\r\n- [ ] flores\r\n- [ ] flue\r\n- [ ] food101\r\n- [ ] py_ast\r\n- [ ] qasc\r\n- [ ] qasper\r\n- [ ] race\r\n- [ ] reuters21578\r\n- [ ] ropes\r\n- [ ] rotten_tomatoes\r\n- [x] vivos\r\n- [ ] wi_locness\r\n- [ ] wiki_movies\r\n- [ ] wikiann\r\n- [ ] wmt20_mlqe_task1\r\n- [ ] wmt20_mlqe_task2\r\n- [ ] wmt20_mlqe_task3\r\n- [ ] scicite\r\n- [ ] xsum\r\n- [ ] scielo\r\n- [ ] scifact\r\n- [ ] setimes\r\n- [ ] social_bias_frames\r\n- [ ] sogou_news\r\n- [x] speech_commands\r\n- [ ] ted_hrlr\r\n- [ ] ted_multi\r\n- [ ] tlc\r\n- [ ] turku_ner_corpus\r\n\r\n",

"I'll do my best to fix as many as possible tomorrow :)",

"the audio datasets are fixed if I didn't forget anything :)\r\n\r\nbtw what are we gonna do with the community ones that would be broken after the fix?",

"Closing in favor of https://github.com/huggingface/datasets/pull/3736"

] | 2022-02-01T21:48:27 | 2022-02-22T09:14:06 | 2022-02-22T09:14:06 | MEMBER | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3664",

"html_url": "https://github.com/huggingface/datasets/pull/3664",

"diff_url": "https://github.com/huggingface/datasets/pull/3664.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3664.patch",

"merged_at": null

} | Fixes https://github.com/huggingface/datasets/issues/3663

This is a proposed way of returning the old local file-based generator while keeping the new streaming generator intact.

TODO:

- [ ] brainstorm a bit more on https://github.com/huggingface/datasets/issues/3663 to see if we can do better

- [ ] refactor the heck out of this PR to avoid completely copying the logic between the two generators | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3664/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3664/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3663 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3663/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3663/comments | https://api.github.com/repos/huggingface/datasets/issues/3663/events | https://github.com/huggingface/datasets/issues/3663 | 1,121,067,647 | I_kwDODunzps5C0iJ_ | 3,663 | [Audio] Path of Common Voice cannot be used for audio loading anymore | {

"login": "patrickvonplaten",

"id": 23423619,

"node_id": "MDQ6VXNlcjIzNDIzNjE5",

"avatar_url": "https://avatars.githubusercontent.com/u/23423619?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/patrickvonplaten",

"html_url": "https://github.com/patrickvonplaten",

"followers_url": "https://api.github.com/users/patrickvonplaten/followers",

"following_url": "https://api.github.com/users/patrickvonplaten/following{/other_user}",

"gists_url": "https://api.github.com/users/patrickvonplaten/gists{/gist_id}",

"starred_url": "https://api.github.com/users/patrickvonplaten/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/patrickvonplaten/subscriptions",

"organizations_url": "https://api.github.com/users/patrickvonplaten/orgs",

"repos_url": "https://api.github.com/users/patrickvonplaten/repos",

"events_url": "https://api.github.com/users/patrickvonplaten/events{/privacy}",

"received_events_url": "https://api.github.com/users/patrickvonplaten/received_events",

"type": "User",

"site_admin": false

} | [