Model Card for Musterdatenkatalog Classifier

Model Description

- Developed by: and-effect

- Project by: Bertelsmann Stiftung

- Model type: Text Classification

- Language(s) (NLP): de

- Finetuned from model: bert-base-german-cased. For more information on the base model, see its model card.

- License: cc-by-4.0

Model Sources

- Repository: https://github.com/bertelsmannstift/Musterdatenkatalog-V4

- Demo: Spaces App

This model is based on bert-base-german-cased and fine-tuned on and-effect/mdk_gov_data_titles_clf. It was created as part of the Bertelsmann Foundation's Musterdatenkatalog (MDK) project. The model classifies the titles of open datasets published by German municipalities. This helps municipalities in Germany, as well as data analysts and journalists, to see which cities have already published which datasets and what might be missing. The model is tailored to this task and to a specific taxonomy. It therefore has a clear intended downstream task and should be used with that taxonomy.

Information about the underlying taxonomy: The taxonomy used, 'Musterdatenkatalog', has two levels: 'Thema' and 'Bezeichnung', which roughly translate to topic and label. The top level has 25 entries, ranging from topics such as 'Finanzen' (finance) to 'Gesundheit' (health). The second level, 'Bezeichnung' (label), goes into more detail. For the topic health, for example, it contains 'Krankenhaus' (hospital). The second level contains 241 labels. The combination of topic and label (Thema + Bezeichnung) forms a 'Musterdatensatz'. Data can be classified into either the topics or the labels, and results for both are presented below. The published RDF version of the taxonomy provides mappings to other taxonomies, but the model is tailored to this taxonomy. You can find the taxonomy in RDF format here. Also have a look at our visualization of the taxonomy here.
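The two-level structure can be illustrated with a small data structure. This is a hypothetical sketch. The dictionary holds only the two topic/label pairs named in this card, while the real taxonomy has 25 topics and 241 labels. The `' - '` separator is an assumption based on how 'Raumplanung - Bebauungsplan' is written in the Metrics section.

```python
# Hypothetical excerpt of the two-level taxonomy: Thema (topic) -> list of Bezeichnungen (labels).
# Only pairs mentioned in this model card are included.
TAXONOMY = {
    "Gesundheit": ["Krankenhaus"],
    "Raumplanung": ["Bebauungsplan"],
}


def musterdatensaetze(taxonomy):
    """Combine each topic with each of its labels into a 'Thema - Bezeichnung' string."""
    return [
        f"{thema} - {bezeichnung}"
        for thema, bezeichnungen in taxonomy.items()
        for bezeichnung in bezeichnungen
    ]


def thema_of(musterdatensatz):
    """Recover the topic (first level) from a combined 'Thema - Bezeichnung' string."""
    thema, _, _ = musterdatensatz.partition(" - ")
    return thema
```

If label-level results use this combined format, each label prediction also fixes a topic. `thema_of` shows that a label-level result can be collapsed to the coarser 'Thema' level.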

Use model for classification

Please make sure that you have installed the following packages:

```bash
pip install sentence-transformers huggingface_hub
```

To run the classifier, use the following code:

```python
import sys

from huggingface_hub import snapshot_download

# Download a snapshot of the model repository; it contains the `pipeline` module imported below.
path = snapshot_download(
    cache_dir="tmp/",
    repo_id="and-effect/musterdatenkatalog_clf",
    revision="main",
)

# Make the downloaded repository importable as a module search path.
sys.path.append(path)

from pipeline import PipelineWrapper

pipeline = PipelineWrapper(path=path)

# Each query needs an "id" and the dataset "title" to classify.
queries = [
    {"id": "1", "title": "Spielplätze"},
    {"id": "2", "title": "Berliner Weihnachtsmärkte 2022"},
    {"id": "3", "title": "Hochschulwechslerquoten zum Masterstudium nach Bundesländern"},
]

output = pipeline(queries)
print(output)
```

The input must be a list of dictionaries, and each dictionary must contain the keys 'id' and 'title'. The value of 'title' is the text the pipeline classifies. The output is again a list of dictionaries. Each contains the id, the title and a 'prediction' key that holds the model's prediction.
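For longer lists of titles, it can help to generate the query dictionaries programmatically and to index the results by id. The two helpers below are hypothetical conveniences. They rely only on the keys described above ('id', 'title', 'prediction'). The internal structure of the 'prediction' value is not documented here, so it is passed through unchanged.

```python
def make_queries(titles):
    """Wrap plain dataset titles in the {'id', 'title'} dictionaries the pipeline expects."""
    return [{"id": str(i), "title": title} for i, title in enumerate(titles, start=1)]


def predictions_by_id(output):
    """Map each query id to its prediction, using the 'id' and 'prediction' keys of the output."""
    return {item["id"]: item["prediction"] for item in output}
```

Usage with the pipeline from the example above: `output = pipeline(make_queries(["Spielplätze", "Berliner Weihnachtsmärkte 2022"]))`, then `predictions_by_id(output)["1"]`.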

If you want to predict only a few titles or test the model, you can also take a look at our algorithm demo here.

Classification Process

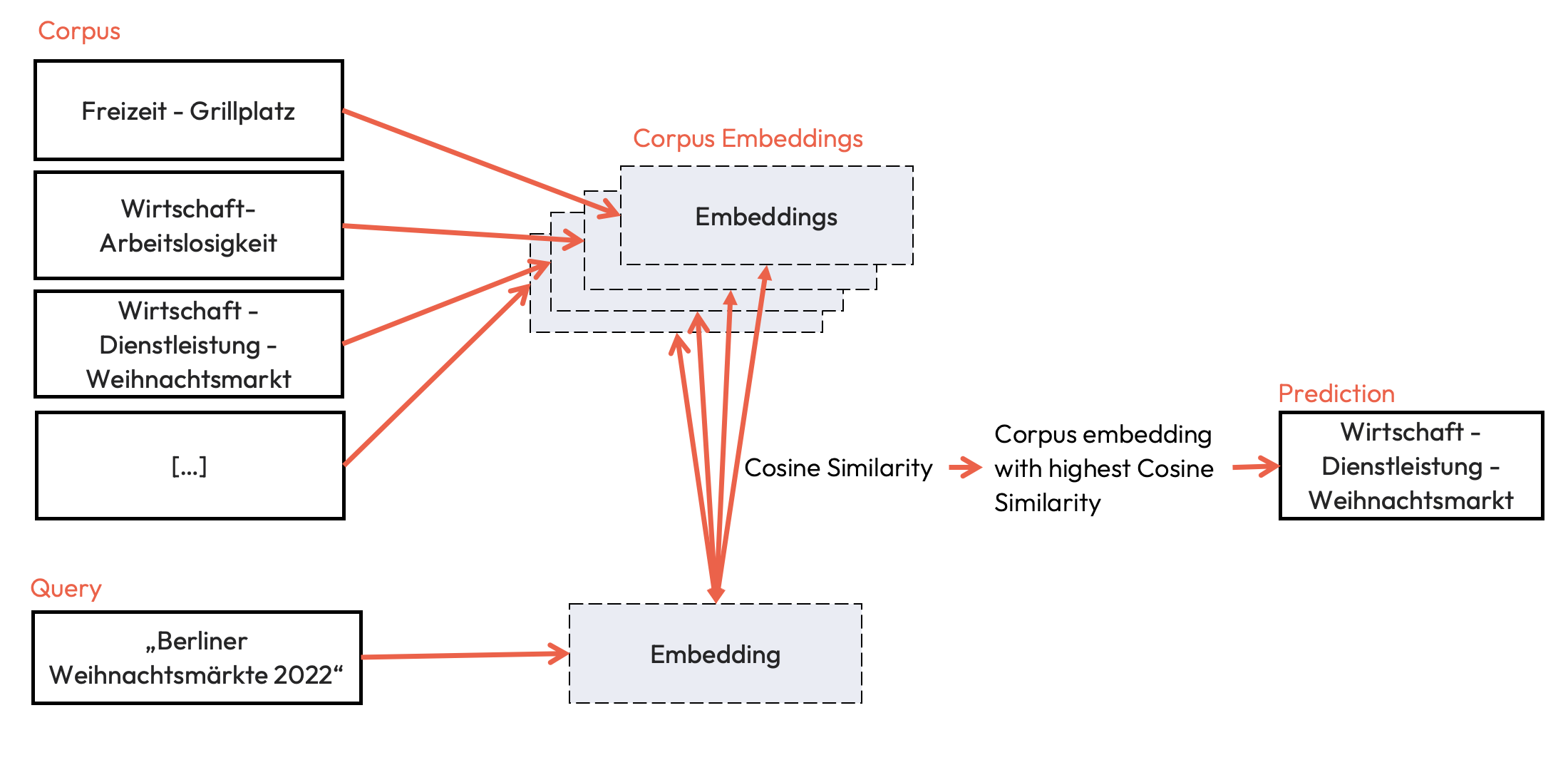

The classification is realized using semantic search. For this purpose, both the taxonomy and the queries, in this case dataset titles, are embedded with the model. Using cosine similarity, the label with the highest similarity to the query is determined.
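The semantic-search step amounts to an arg-max over cosine similarities. The sketch below assumes that titles and taxonomy entries have already been embedded. One possible route is `SentenceTransformer.encode`, assuming the fine-tuned model loads that way; exactly which taxonomy text is embedded for each label is also an assumption. The sketch uses NumPy. `classify_by_similarity` is a hypothetical name and not part of the repository's `PipelineWrapper`.

```python
import numpy as np


def classify_by_similarity(query_embeddings, label_embeddings, labels):
    """Return (label, similarity) for each query: the label with the highest cosine similarity."""
    q = np.asarray(query_embeddings, dtype=float)
    l = np.asarray(label_embeddings, dtype=float)
    # Normalise rows to unit length so that the dot product equals cosine similarity.
    q = q / np.linalg.norm(q, axis=1, keepdims=True)
    l = l / np.linalg.norm(l, axis=1, keepdims=True)
    similarities = q @ l.T  # shape: (n_queries, n_labels)
    best = similarities.argmax(axis=1)
    return [(labels[int(i)], float(similarities[row, i])) for row, i in enumerate(best)]
```

The label embeddings alone define the candidate classes, so appending an entry to `labels` and `label_embeddings` makes it selectable without retraining. This is why the model can in principle assign classes it was not trained on (see the limitations below).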

Direct Use

Direct use of the model is possible with Sentence Transformers or Hugging Face Transformers. This model was developed solely to classify dataset titles from GovData into the taxonomy described above. We therefore do not recommend using it as an embedding model for other domains.

Bias, Risks, and Limitations

The model has some limitations with respect to its downstream task.

- Distribution of classes: The training dataset is small, while the number of classes is very high, so some classes have only a few examples (more information about the class distribution of the training data can be found here). Consequently, performance on smaller classes may be worse than on the majority classes, and the evaluation of these classes is also limited.

- Systematic problems: Some subjects are systematically misclassified. One example is titles related to 'migration': in none of the evaluation cases were these titles embedded in a way that matched their true labels.

- Generalization of the model: Because classification is based on semantic search, the model can assign titles to new categories that were not part of training. However, the model is not tuned for this, so its performance on unseen classes is likely to be limited.
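To see how unevenly the classes are distributed, one can count the training examples per class. This is a minimal sketch, assuming the class labels of the training data are available as a list of strings. `rare_classes` and the threshold of five examples are hypothetical choices for illustration.

```python
from collections import Counter


def rare_classes(labels, min_examples=5):
    """Return (class, count) for classes with fewer than `min_examples` examples, rarest first."""
    counts = Counter(labels)
    rare = [(label, n) for label, n in counts.items() if n < min_examples]
    # Sort by count, then alphabetically, so the output is deterministic.
    return sorted(rare, key=lambda item: (item[1], item[0]))
```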

Training Details

Training Data

You can find all information about the training data here. For fine-tuning we used revision 172e61bb1dd20e43903f4c51e5cbec61ec9ae6e6 of the data, because the model performed better on this earlier version. We additionally applied AugmentedSBERT to extend the dataset and improve performance.

Preprocessing

This section describes how the input data for the model was generated. More information on the preprocessing of the data itself can be found here.

The model is fine-tuned on similar and dissimilar pairs. Similar pairs consist of each title and its true label. Dissimilar pairs are pairs of a title and any label other than its true label. There are far more possible dissimilar combinations than similar ones, so a sample of two pairs per title is selected.

| pair set | number of pairs |
|---|---|
| train_similar_pairs | 1964 |
| train_unsimilar_pairs | 982 |
| test_similar_pairs | 498 |
| test_unsimilar_pairs | 249 |
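The pair construction described above can be sketched in plain Python. This sketch makes several assumptions. Examples are (title, true_label) tuples. Similar pairs get the target score 1.0 and dissimilar pairs 0.0, which suits a cosine-similarity objective. `build_pairs` is a hypothetical name. `negatives_per_title` defaults to the two pairs per title stated above. It stays a parameter because the exact sampling scheme behind the counts in the table is not documented.

```python
import random


def build_pairs(examples, all_labels, negatives_per_title=2, seed=0):
    """Build (title, label, score) triples: 1.0 for the true label, 0.0 for sampled wrong labels."""
    rng = random.Random(seed)  # fixed seed so the sample is reproducible
    pairs = []
    for title, true_label in examples:
        pairs.append((title, true_label, 1.0))
        candidates = [label for label in all_labels if label != true_label]
        k = min(negatives_per_title, len(candidates))
        for wrong_label in rng.sample(candidates, k):
            pairs.append((title, wrong_label, 0.0))
    return pairs
```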

We trained a CrossEncoder on this data and then used it to label newly sampled pairs built from the dataset titles (silver data). Using both the original and the silver data, we fine-tuned a bi-encoder, which is the resulting model.
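The AugmentedSBERT step can be sketched as follows. The trained cross-encoder scores additional (title, label) pairs, and these scores serve as silver labels alongside the original (gold) pairs when training the bi-encoder. The sketch assumes a cross-encoder object with a `predict` method that takes a list of text pairs and returns one score per pair, as `sentence_transformers.CrossEncoder` does. How the additional pairs were sampled is not specified here, so `unlabeled_pairs` and both function names are hypothetical.

```python
def label_silver_pairs(cross_encoder, unlabeled_pairs):
    """Score (title, label) pairs with a cross-encoder and return (title, label, score) triples."""
    # predict() returns one score per input pair, in the same order.
    scores = cross_encoder.predict([[title, label] for title, label in unlabeled_pairs])
    return [
        (title, label, float(score))
        for (title, label), score in zip(unlabeled_pairs, scores)
    ]


def build_training_set(gold_pairs, silver_pairs):
    """Concatenate gold (human-labelled) and silver (cross-encoder-labelled) triples."""
    return list(gold_pairs) + list(silver_pairs)
```

With Sentence Transformers, `cross_encoder` could be a `CrossEncoder` loaded from the trained cross-encoder's directory (the path is not given in this card).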

Training Parameters

The model was trained with the following parameters:

DataLoader: `torch.utils.data.dataloader.DataLoader`

Loss: `sentence_transformers.losses.CosineSimilarityLoss.CosineSimilarityLoss`

Hyperparameters:

```json
{
  "epochs": 3,
  "warmup_steps": 100
}
```
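The loss named above, `CosineSimilarityLoss`, computes the cosine similarity of the two embeddings in each pair and by default penalises the squared difference from the pair's target score. The NumPy function below re-implements that objective for illustration only. It is not the library's code, it works on precomputed embedding arrays, and `cosine_similarity_loss` is a hypothetical name.

```python
import numpy as np


def cosine_similarity_loss(u, v, targets):
    """Mean squared error between cos(u_i, v_i) and the target score of each pair."""
    u = np.asarray(u, dtype=float)
    v = np.asarray(v, dtype=float)
    cos = (u * v).sum(axis=1) / (np.linalg.norm(u, axis=1) * np.linalg.norm(v, axis=1))
    return float(np.mean((cos - np.asarray(targets, dtype=float)) ** 2))
```

With targets of 1.0 for similar and 0.0 for dissimilar pairs, minimising this loss pulls a title's embedding towards its true label and pushes it away from the sampled wrong labels.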

Evaluation

All metrics express the model's ability to classify dataset titles from GovData into the taxonomy described here. For more information, see the MDK project.

Testing Data, Factors & Metrics

Testing Data

The evaluation data can be found here. Since the model was trained on revision 172e61bb1dd20e43903f4c51e5cbec61ec9ae6e6, the evaluation metrics are computed on the same revision.

Metrics

The model's performance is measured with four metrics: accuracy, precision, recall and F1 score. The data is imbalanced, but accuracy was still used because the imbalance reflects the real tendency of some classes to have more entries, for example 'Raumplanung - Bebauungsplan'.

Many classes were never predicted, so their precision, recall and F1 score are set to zero. For these metrics, additional calculations were performed. They are denoted with 'II' in the table and exclude the classes with fewer than two predictions at the 'Bezeichnung' level. These results must be interpreted with care, because they give no information about the classes left out.

The tasks denoted with 'I' include all classes.

The tasks are split not only by whether all classes are included ('I') or not ('II'), but also into a task on 'Bezeichnung' and a task on 'Thema'. As mentioned above, this reflects the two levels of the underlying taxonomy. The 'Thema' task is performed on the first level of the taxonomy, which has 25 classes. The 'Bezeichnung' task is performed on the second level, which has 241 classes.
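The 'I' and 'II' variants of the macro metrics can be sketched in plain Python. This illustration rests on several assumptions:

- "All classes" is taken to be the union of the classes in the true and predicted labels.
- A class that is never predicted gets a precision of zero, as described above.
- 'II' drops the classes with fewer than two predictions.

`accuracy` and `macro_scores` are hypothetical names. The project's actual evaluation code is not part of this card.

```python
from collections import Counter


def accuracy(y_true, y_pred):
    """Share of titles whose predicted class equals the true class."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def macro_scores(y_true, y_pred, min_predictions=0):
    """Macro-averaged (precision, recall, F1).

    min_predictions=0 keeps every class ('I'); min_predictions=2 drops classes
    that were predicted fewer than two times ('II').
    """
    pred_counts = Counter(y_pred)
    true_counts = Counter(y_true)
    hits = Counter(t for t, p in zip(y_true, y_pred) if t == p)
    classes = [c for c in sorted(set(y_true) | set(y_pred)) if pred_counts[c] >= min_predictions]
    if not classes:
        return 0.0, 0.0, 0.0
    precision_sum = recall_sum = f1_sum = 0.0
    for c in classes:
        # A class that is never predicted gets precision 0 instead of an undefined value.
        p = hits[c] / pred_counts[c] if pred_counts[c] else 0.0
        r = hits[c] / true_counts[c] if true_counts[c] else 0.0
        f = 2 * p * r / (p + r) if p + r else 0.0
        precision_sum += p
        recall_sum += r
        f1_sum += f
    n = len(classes)
    return precision_sum / n, recall_sum / n, f1_sum / n
```

Excluding rarely predicted classes removes many zero-precision entries from the average. This is consistent with the higher 'II' precision on the validation 'Bezeichnung' task (0.58 vs. 0.35) and with the caveat that 'II' says nothing about the excluded classes.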

Results

| task | accuracy | precision (macro) | recall (macro) | f1 (macro) |
|---|---|---|---|---|
| Test dataset 'Bezeichnung' I | 0.73 (.82)* | 0.66 | 0.71 | 0.67 |
| Test dataset 'Thema' I | 0.89 (.92)* | 0.90 | 0.89 | 0.88 |
| Test dataset 'Bezeichnung' II | 0.73 | 0.58 | 0.82 | 0.65 |
| Validation dataset 'Bezeichnung' I | 0.51 | 0.35 | 0.36 | 0.33 |
| Validation dataset 'Thema' I | 0.77 | 0.59 | 0.68 | 0.60 |
| Validation dataset 'Bezeichnung' II | 0.51 | 0.58 | 0.69 | 0.59 |

\* The accuracy in brackets was determined by manual analysis. This analysis checked for entries that could belong to more than one class and were therefore actually classified correctly by the algorithm. In this step, the labels of the test data were also rechecked for mistakes, which resulted in better performance.

The validation dataset was created manually to check certain classes.


Evaluation results (self-reported, on the test set of and-effect/mdk_gov_data_titles_clf)

- Accuracy 'Bezeichnung': 0.730
- Precision 'Bezeichnung' (macro): 0.660
- Recall 'Bezeichnung' (macro): 0.710
- F1 'Bezeichnung' (macro): 0.670
- Accuracy 'Thema': 0.890
- Precision 'Thema' (macro): 0.900
- Recall 'Thema' (macro): 0.890
- F1 'Thema' (macro): 0.880