Upload folder using huggingface_hub

- README.md +108 -0

- assets/got_logo.png +0 -0

- assets/got_support.jpg +0 -0

- assets/train_sample.jpg +0 -0

- config.json +38 -0

- generation_config.json +6 -0

- got_vision_b.py +468 -0

- model.safetensors +3 -0

- modeling_GOT.py +881 -0

- qwen.tiktoken +0 -0

- qwen_original.tiktoken +0 -0

- render_tools.py +96 -0

- special_tokens_map.json +9 -0

- tokenisation.ipynb +571 -0

- tokenization_qwen.py +283 -0

- tokenization_qwen_original.py +283 -0

- tokenizer_config.json +14 -0

README.md

ADDED

@@ -0,0 +1,108 @@

| 1 |

+

---

|

| 2 |

+

pipeline_tag: image-text-to-text

|

| 3 |

+

library_name: transformers

|

| 4 |

+

language:

|

| 5 |

+

- multilingual

|

| 6 |

+

tags:

|

| 7 |

+

- got

|

| 8 |

+

- vision-language

|

| 9 |

+

- ocr2.0

|

| 10 |

+

- custom_code

|

| 11 |

+

license: apache-2.0

|

| 12 |

+

---

|

| 13 |

+

|

| 14 |

+

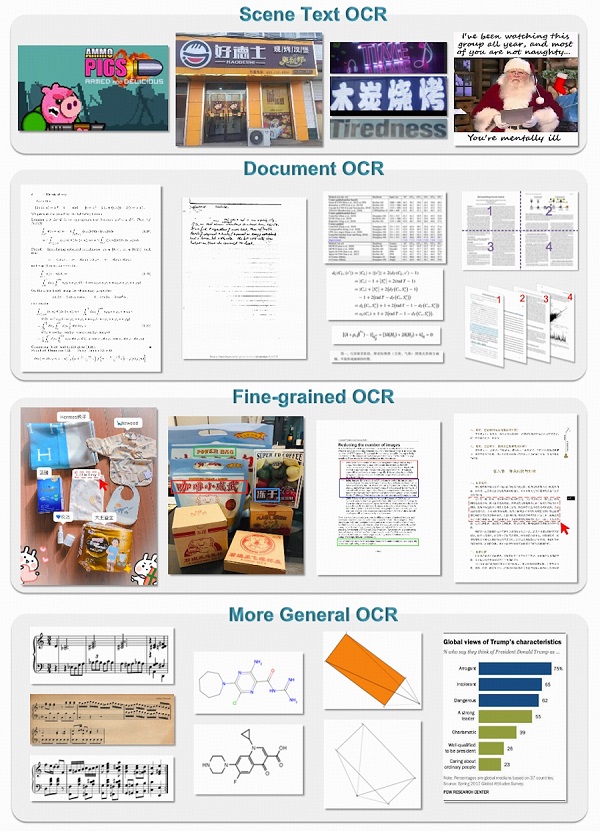

<h1>General OCR Theory: Towards OCR-2.0 via a Unified End-to-end Model

|

| 15 |

+

</h1>

|

| 16 |

+

|

| 17 |

+

[🔋Online Demo](https://huggingface.co/spaces/ucaslcl/GOT_online) | [🌟GitHub](https://github.com/Ucas-HaoranWei/GOT-OCR2.0/) | [📜Paper](https://arxiv.org/abs/2409.01704)</a>

|

| 18 |

+

|

| 19 |

+

|

| 20 |

+

[Haoran Wei*](https://scholar.google.com/citations?user=J4naK0MAAAAJ&hl=en), Chenglong Liu*, Jinyue Chen, Jia Wang, Lingyu Kong, Yanming Xu, [Zheng Ge](https://joker316701882.github.io/), Liang Zhao, [Jianjian Sun](https://scholar.google.com/citations?user=MVZrGkYAAAAJ&hl=en), [Yuang Peng](https://scholar.google.com.hk/citations?user=J0ko04IAAAAJ&hl=zh-CN&oi=ao), Chunrui Han, [Xiangyu Zhang](https://scholar.google.com/citations?user=yuB-cfoAAAAJ&hl=en)

|

| 21 |

+

|

| 22 |

+

|

| 23 |

+

|

| 24 |

+

|

| 25 |

+

|

| 26 |

+

|

| 27 |

+

|

| 28 |

+

## Usage

|

| 29 |

+

Inference using Huggingface transformers on NVIDIA GPUs. Requirements tested on python 3.10:

|

| 30 |

+

```

|

| 31 |

+

torch==2.0.1

|

| 32 |

+

torchvision==0.15.2

|

| 33 |

+

transformers==4.37.2

|

| 34 |

+

tiktoken==0.6.0

|

| 35 |

+

verovio==4.3.1

|

| 36 |

+

accelerate==0.28.0

|

| 37 |

+

```

|

| 38 |

+

|

| 39 |

+

|

| 40 |

+

```python

|

| 41 |

+

from transformers import AutoModel, AutoTokenizer

|

| 42 |

+

|

| 43 |

+

tokenizer = AutoTokenizer.from_pretrained('ucaslcl/GOT-OCR2_0', trust_remote_code=True)

|

| 44 |

+

model = AutoModel.from_pretrained('ucaslcl/GOT-OCR2_0', trust_remote_code=True, low_cpu_mem_usage=True, device_map='cuda', use_safetensors=True, pad_token_id=tokenizer.eos_token_id)

|

| 45 |

+

model = model.eval().cuda()

|

| 46 |

+

|

| 47 |

+

|

| 48 |

+

# input your test image

|

| 49 |

+

image_file = 'xxx.jpg'

|

| 50 |

+

|

| 51 |

+

# plain texts OCR

|

| 52 |

+

res = model.chat(tokenizer, image_file, ocr_type='ocr')

|

| 53 |

+

|

| 54 |

+

# format texts OCR:

|

| 55 |

+

# res = model.chat(tokenizer, image_file, ocr_type='format')

|

| 56 |

+

|

| 57 |

+

# fine-grained OCR:

|

| 58 |

+

# res = model.chat(tokenizer, image_file, ocr_type='ocr', ocr_box='')

|

| 59 |

+

# res = model.chat(tokenizer, image_file, ocr_type='format', ocr_box='')

|

| 60 |

+

# res = model.chat(tokenizer, image_file, ocr_type='ocr', ocr_color='')

|

| 61 |

+

# res = model.chat(tokenizer, image_file, ocr_type='format', ocr_color='')

|

| 62 |

+

|

| 63 |

+

# multi-crop OCR:

|

| 64 |

+

# res = model.chat_crop(tokenizer, image_file, ocr_type='ocr')

|

| 65 |

+

# res = model.chat_crop(tokenizer, image_file, ocr_type='format')

|

| 66 |

+

|

| 67 |

+

# render the formatted OCR results:

|

| 68 |

+

# res = model.chat(tokenizer, image_file, ocr_type='format', render=True, save_render_file = './demo.html')

|

| 69 |

+

|

| 70 |

+

print(res)

|

| 71 |

+

|

| 72 |

+

|

| 73 |

+

```

|

| 74 |

+

More details about 'ocr_type', 'ocr_box', 'ocr_color', and 'render' can be found at our GitHub.

|

| 75 |

+

Our training codes are available at our [GitHub](https://github.com/Ucas-HaoranWei/GOT-OCR2.0/).

+
+
+
+## More Multimodal Projects
+
+👏 You are welcome to explore more multimodal projects from our team:
+
+[Vary](https://github.com/Ucas-HaoranWei/Vary) | [Fox](https://github.com/ucaslcl/Fox) | [OneChart](https://github.com/LingyvKong/OneChart)
+
+## Citation
+
+If you find our work helpful, please consider citing our papers 📝 and liking this project ❤️!
+
+```bib
+@article{wei2024general,
+  title={General OCR Theory: Towards OCR-2.0 via a Unified End-to-end Model},
+  author={Wei, Haoran and Liu, Chenglong and Chen, Jinyue and Wang, Jia and Kong, Lingyu and Xu, Yanming and Ge, Zheng and Zhao, Liang and Sun, Jianjian and Peng, Yuang and others},
+  journal={arXiv preprint arXiv:2409.01704},
+  year={2024}
+}
+@article{liu2024focus,
+  title={Focus Anywhere for Fine-grained Multi-page Document Understanding},
+  author={Liu, Chenglong and Wei, Haoran and Chen, Jinyue and Kong, Lingyu and Ge, Zheng and Zhu, Zining and Zhao, Liang and Sun, Jianjian and Han, Chunrui and Zhang, Xiangyu},
+  journal={arXiv preprint arXiv:2405.14295},
+  year={2024}
+}
+@article{wei2023vary,
+  title={Vary: Scaling up the Vision Vocabulary for Large Vision-Language Models},
+  author={Wei, Haoran and Kong, Lingyu and Chen, Jinyue and Zhao, Liang and Ge, Zheng and Yang, Jinrong and Sun, Jianjian and Han, Chunrui and Zhang, Xiangyu},
+  journal={arXiv preprint arXiv:2312.06109},
+  year={2023}
+}
+```
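The README's Usage section pins exact package versions, so it can help to compare an environment against them before loading the model. The following is a minimal sketch, not part of the repository: `PINS` copies the versions from the README, while `installed_version`, `find_mismatches` and the `lookup` parameter are hypothetical names introduced here for illustration.

```python
from importlib import metadata

# Pins copied from the requirements block in the README.
PINS = {
    "torch": "2.0.1",
    "torchvision": "0.15.2",
    "transformers": "4.37.2",
    "tiktoken": "0.6.0",
    "verovio": "4.3.1",
    "accelerate": "0.28.0",
}


def installed_version(name):
    """Return the installed version of a distribution, or None if it is absent."""
    try:
        return metadata.version(name)
    except metadata.PackageNotFoundError:
        return None


def find_mismatches(pins, lookup=installed_version):
    """Map each package whose installed version differs from its pin to (pinned, found)."""
    mismatches = {}
    for name, pinned in pins.items():
        found = lookup(name)
        # Compare only the part before a local build tag such as "+cu118".
        if found is None or found.split("+")[0] != pinned:
            mismatches[name] = (pinned, found)
    return mismatches


if __name__ == "__main__":
    for name, (pinned, found) in find_mismatches(PINS).items():
        print(f"{name}: pinned {pinned}, found {found}")
```

An empty result only means the versions match the README; it says nothing about the CUDA setup, which the README also assumes.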

assets/got_logo.png

ADDED

assets/got_support.jpg

ADDED

assets/train_sample.jpg

ADDED

config.json

ADDED

@@ -0,0 +1,38 @@

+{
+  "_name_or_path": "ucaslcl/GOT-OCR2_0",
+  "architectures": [
+    "GOTQwenForCausalLM"
+  ],
+  "auto_map": {
+    "AutoConfig": "modeling_GOT.GOTConfig",
+    "AutoModel": "modeling_GOT.GOTQwenForCausalLM"
+  },
+  "attention_dropout": 0.0,
+  "bos_token_id": 151643,
+  "eos_token_id": 151643,
+  "freeze_vision_tower": false,
+  "hidden_act": "silu",
+  "hidden_size": 1024,
+  "im_end_token": 151858,
+  "im_patch_token": 151859,
+  "im_start_token": 151857,
+  "image_token_len": 256,
+  "initializer_range": 0.02,
+  "intermediate_size": 2816,
+  "max_position_embeddings": 32768,
+  "max_window_layers": 21,
+  "model_type": "GOT",
+  "num_attention_heads": 16,
+  "num_hidden_layers": 24,
+  "num_key_value_heads": 16,
+  "rms_norm_eps": 1e-06,
+  "rope_theta": 1000000.0,
+  "sliding_window": 32768,
+  "tie_word_embeddings": true,
+  "torch_dtype": "bfloat16",
+  "transformers_version": "4.37.2",
+  "use_cache": true,
+  "use_im_start_end": true,
+  "use_sliding_window": false,
+  "vocab_size": 151860
+}
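A few values in `config.json` can be cross-checked with simple arithmetic. The sketch below uses only fields copied from the hunk above; the variable names (`head_dim`, `uses_gqa`, and so on) are illustrative, and reading equal head counts as plain multi-head attention follows the usual Hugging Face convention, which is assumed here and not stated in the commit.

```python
# Selected fields copied from config.json in this commit.
config = {
    "hidden_size": 1024,
    "num_attention_heads": 16,
    "num_key_value_heads": 16,
    "vocab_size": 151860,
    "im_start_token": 151857,
    "im_end_token": 151858,
    "im_patch_token": 151859,
}

# Width of each attention head, assuming the heads split hidden_size evenly.
head_dim = config["hidden_size"] // config["num_attention_heads"]

# Equal query and key/value head counts are read as multi-head attention
# (no grouped-query sharing), assuming the usual Hugging Face convention.
uses_gqa = config["num_key_value_heads"] != config["num_attention_heads"]

# The three image marker tokens occupy the last three ids of the vocabulary.
image_token_ids = sorted(
    config[key] for key in ("im_start_token", "im_end_token", "im_patch_token")
)
last_three_ids = list(range(config["vocab_size"] - 3, config["vocab_size"]))

print(head_dim, uses_gqa, image_token_ids == last_three_ids)  # 64 False True
```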

generation_config.json

ADDED

@@ -0,0 +1,6 @@

+{
+  "bos_token_id": 151643,
+  "eos_token_id": 151643,
+  "max_new_tokens": 2048,
+  "transformers_version": "4.37.2"
+}

got_vision_b.py

ADDED

@@ -0,0 +1,468 @@

+import torch
+import torch.nn.functional as F
+from typing import Optional, Tuple, Type
+from functools import partial
+import torch.nn as nn
+from typing import Type
+
+
+
+class MLPBlock(nn.Module):
+    def __init__(
+        self,
+        embedding_dim: int,
+        mlp_dim: int,
+        act: Type[nn.Module] = nn.GELU,
+    ) -> None:
+        super().__init__()
+        self.lin1 = nn.Linear(embedding_dim, mlp_dim)
+        self.lin2 = nn.Linear(mlp_dim, embedding_dim)
+        self.act = act()
+
+    def forward(self, x: torch.Tensor) -> torch.Tensor:
+        return self.lin2(self.act(self.lin1(x)))

+
+
+
+class LayerNorm2d(nn.Module):
+    def __init__(self, num_channels: int, eps: float = 1e-6) -> None:
+        super().__init__()
+        self.weight = nn.Parameter(torch.ones(num_channels))
+        self.bias = nn.Parameter(torch.zeros(num_channels))
+        self.eps = eps
+
+    def forward(self, x: torch.Tensor) -> torch.Tensor:
+        u = x.mean(1, keepdim=True)
+        s = (x - u).pow(2).mean(1, keepdim=True)
+        x = (x - u) / torch.sqrt(s + self.eps)
+        x = self.weight[:, None, None] * x + self.bias[:, None, None]
+        return x
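`LayerNorm2d.forward` normalizes over the channel axis (dimension 1) independently at every spatial position, then applies a per-channel scale and shift. A plain-Python sketch of the same computation for a single pixel may make that concrete; `layer_norm_channels` is a hypothetical helper written for this note, not code from the repository.

```python
import math


def layer_norm_channels(pixel, weight, bias, eps=1e-6):
    """Normalize one pixel's channel vector the way LayerNorm2d.forward does."""
    mean = sum(pixel) / len(pixel)
    # Biased variance (divide by N), matching (x - u).pow(2).mean(1).
    var = sum((c - mean) ** 2 for c in pixel) / len(pixel)
    return [
        w * (c - mean) / math.sqrt(var + eps) + b
        for c, w, b in zip(pixel, weight, bias)
    ]


# One pixel with four channels, identity scale and zero shift.
out = layer_norm_channels([1.0, 2.0, 3.0, 4.0], [1.0] * 4, [0.0] * 4)
print([round(v, 3) for v in out])
```

With identity `weight` and zero `bias`, the output channels have zero mean and approximately unit variance, which is the property the two `LayerNorm2d` layers in the encoder neck rely on.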

+
+
+
+class ImageEncoderViT(nn.Module):
+    def __init__(
+        self,
+        img_size: int = 1024,
+        patch_size: int = 16,
+        in_chans: int = 3,
+        embed_dim: int = 768,
+        depth: int = 12,
+        num_heads: int = 12,
+        mlp_ratio: float = 4.0,
+        out_chans: int = 256,
+        qkv_bias: bool = True,
+        norm_layer: Type[nn.Module] = nn.LayerNorm,
+        act_layer: Type[nn.Module] = nn.GELU,
+        use_abs_pos: bool = True,
+        use_rel_pos: bool = False,
+        rel_pos_zero_init: bool = True,
+        window_size: int = 0,
+        global_attn_indexes: Tuple[int, ...] = (),
+    ) -> None:
+        """
+        Args:
+            img_size (int): Input image size.
+            patch_size (int): Patch size.
+            in_chans (int): Number of input image channels.
+            embed_dim (int): Patch embedding dimension.
+            depth (int): Depth of ViT.
+            num_heads (int): Number of attention heads in each ViT block.
+            mlp_ratio (float): Ratio of mlp hidden dim to embedding dim.
+            qkv_bias (bool): If True, add a learnable bias to query, key, value.
+            norm_layer (nn.Module): Normalization layer.
+            act_layer (nn.Module): Activation layer.
+            use_abs_pos (bool): If True, use absolute positional embeddings.
+            use_rel_pos (bool): If True, add relative positional embeddings to the attention map.
+            rel_pos_zero_init (bool): If True, zero initialize relative positional parameters.
+            window_size (int): Window size for window attention blocks.
+            global_attn_indexes (list): Indexes for blocks using global attention.
+        """
+        super().__init__()
+        self.img_size = img_size
+
+        self.patch_embed = PatchEmbed(
+            kernel_size=(patch_size, patch_size),
+            stride=(patch_size, patch_size),
+            in_chans=in_chans,
+            embed_dim=embed_dim,
+        )
+
+        self.pos_embed: Optional[nn.Parameter] = None
+        if use_abs_pos:
+            # Initialize absolute positional embedding with pretrain image size.
+            self.pos_embed = nn.Parameter(
+                torch.zeros(1, img_size // patch_size, img_size // patch_size, embed_dim)
+            )
+
+        self.blocks = nn.ModuleList()
+        for i in range(depth):
+            block = Block(
+                dim=embed_dim,
+                num_heads=num_heads,
+                mlp_ratio=mlp_ratio,
+                qkv_bias=qkv_bias,
+                norm_layer=norm_layer,
+                act_layer=act_layer,
+                use_rel_pos=use_rel_pos,
+                rel_pos_zero_init=rel_pos_zero_init,
+                window_size=window_size if i not in global_attn_indexes else 0,
+                input_size=(img_size // patch_size, img_size // patch_size),
+            )
+            self.blocks.append(block)
+
+        self.neck = nn.Sequential(
+            nn.Conv2d(
+                embed_dim,
+                out_chans,
+                kernel_size=1,
+                bias=False,
+            ),
+            LayerNorm2d(out_chans),
+            nn.Conv2d(
+                out_chans,
+                out_chans,
+                kernel_size=3,
+                padding=1,
+                bias=False,
+            ),
+            LayerNorm2d(out_chans),
+        )
+
+
+        self.net_2 = nn.Conv2d(256, 512, kernel_size=3, stride=2, padding=1, bias=False)
+        self.net_3 = nn.Conv2d(512, 1024, kernel_size=3, stride=2, padding=1, bias=False)
+
+    def forward(self, x: torch.Tensor) -> torch.Tensor:
+        x = self.patch_embed(x)
+        if self.pos_embed is not None:
+            x = x + self.pos_embed
+
+        for blk in self.blocks:
+            x = blk(x)
+
+        x = self.neck(x.permute(0, 3, 1, 2))
+        x = self.net_2(x)
+        x = self.net_3(x)
+
+
+        return x
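The forward pass of `ImageEncoderViT` shrinks the spatial grid in stages: the patch embedding, a neck that keeps the grid size, and the two stride-2 convolutions `net_2` and `net_3`. The sketch below traces that arithmetic for the default `img_size=1024` and `patch_size=16`, using the standard convolution output-size formula; `conv_out` is a hypothetical helper. The resulting 256 positions match `image_token_len` in `config.json`, although the commit itself does not state that this is where the number comes from.

```python
def conv_out(size: int, kernel: int, stride: int, padding: int) -> int:
    """Output length of a convolution along one axis (dilation 1)."""
    return (size + 2 * padding - kernel) // stride + 1


img_size, patch_size = 1024, 16

# PatchEmbed: 16x16 kernel, stride 16, no padding.
grid = conv_out(img_size, kernel=patch_size, stride=patch_size, padding=0)
# Neck: a 1x1 conv, then a 3x3 conv with padding 1; neither changes the grid.
grid = conv_out(grid, kernel=1, stride=1, padding=0)
grid = conv_out(grid, kernel=3, stride=1, padding=1)
after_neck = grid
# net_2 and net_3: 3x3 kernel, stride 2, padding 1; each halves the grid.
grid = conv_out(grid, kernel=3, stride=2, padding=1)
grid = conv_out(grid, kernel=3, stride=2, padding=1)

num_tokens = grid * grid
print(after_neck, grid, num_tokens)  # 64 16 256
```

The final feature map has 1024 channels (the output width of `net_3`), the same number as `hidden_size` in `config.json`.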

+
+
+class Block(nn.Module):
+    """Transformer blocks with support of window attention and residual propagation blocks"""
+
+    def __init__(
+        self,
+        dim: int,
+        num_heads: int,
+        mlp_ratio: float = 4.0,
+        qkv_bias: bool = True,
+        norm_layer: Type[nn.Module] = nn.LayerNorm,
+        act_layer: Type[nn.Module] = nn.GELU,
+        use_rel_pos: bool = False,
+        rel_pos_zero_init: bool = True,
+        window_size: int = 0,
+        input_size: Optional[Tuple[int, int]] = None,
+    ) -> None:
+        """
+        Args:
+            dim (int): Number of input channels.
+            num_heads (int): Number of attention heads in each ViT block.
+            mlp_ratio (float): Ratio of mlp hidden dim to embedding dim.
+            qkv_bias (bool): If True, add a learnable bias to query, key, value.
+            norm_layer (nn.Module): Normalization layer.
+            act_layer (nn.Module): Activation layer.
+            use_rel_pos (bool): If True, add relative positional embeddings to the attention map.
+            rel_pos_zero_init (bool): If True, zero initialize relative positional parameters.
+            window_size (int): Window size for window attention blocks. If it equals 0, then
+                use global attention.
+            input_size (tuple(int, int) or None): Input resolution for calculating the relative
+                positional parameter size.
+        """
+        super().__init__()
+        self.norm1 = norm_layer(dim)
+        self.attn = Attention(
+            dim,
+            num_heads=num_heads,
+            qkv_bias=qkv_bias,
+            use_rel_pos=use_rel_pos,
+            rel_pos_zero_init=rel_pos_zero_init,
+            input_size=input_size if window_size == 0 else (window_size, window_size),
+        )
+
+        self.norm2 = norm_layer(dim)
+        self.mlp = MLPBlock(embedding_dim=dim, mlp_dim=int(dim * mlp_ratio), act=act_layer)
+
+        self.window_size = window_size
+
+    def forward(self, x: torch.Tensor) -> torch.Tensor:
+        shortcut = x
+        x = self.norm1(x)
+        # Window partition
+        if self.window_size > 0:
+            H, W = x.shape[1], x.shape[2]
+            x, pad_hw = window_partition(x, self.window_size)
+
+        x = self.attn(x)
+        # Reverse window partition
+        if self.window_size > 0:
+            x = window_unpartition(x, self.window_size, pad_hw, (H, W))
+
+        x = shortcut + x
+        x = x + self.mlp(self.norm2(x))
+
+        return x

+
+
+class Attention(nn.Module):
+    """Multi-head Attention block with relative position embeddings."""
+
+    def __init__(
+        self,
+        dim: int,
+        num_heads: int = 8,
+        qkv_bias: bool = True,
+        use_rel_pos: bool = False,
+        rel_pos_zero_init: bool = True,
+        input_size: Optional[Tuple[int, int]] = None,
+    ) -> None:
+        """
+        Args:
+            dim (int): Number of input channels.
+            num_heads (int): Number of attention heads.
+            qkv_bias (bool): If True, add a learnable bias to query, key, value.
+            rel_pos (bool): If True, add relative positional embeddings to the attention map.
+            rel_pos_zero_init (bool): If True, zero initialize relative positional parameters.
+            input_size (tuple(int, int) or None): Input resolution for calculating the relative
+                positional parameter size.
+        """
+        super().__init__()
+        self.num_heads = num_heads
+        head_dim = dim // num_heads
+        self.scale = head_dim**-0.5
+
+        self.qkv = nn.Linear(dim, dim * 3, bias=qkv_bias)
+        self.proj = nn.Linear(dim, dim)
+
+        self.use_rel_pos = use_rel_pos
+        if self.use_rel_pos:
+            assert (
+                input_size is not None
+            ), "Input size must be provided if using relative positional encoding."
+            # initialize relative positional embeddings
+            self.rel_pos_h = nn.Parameter(torch.zeros(2 * input_size[0] - 1, head_dim))
+            self.rel_pos_w = nn.Parameter(torch.zeros(2 * input_size[1] - 1, head_dim))
+
+    def forward(self, x: torch.Tensor) -> torch.Tensor:
+        B, H, W, _ = x.shape
+        # qkv with shape (3, B, nHead, H * W, C)
+        qkv = self.qkv(x).reshape(B, H * W, 3, self.num_heads, -1).permute(2, 0, 3, 1, 4)
+        # q, k, v with shape (B * nHead, H * W, C)
+        q, k, v = qkv.reshape(3, B * self.num_heads, H * W, -1).unbind(0)
+
+        attn = (q * self.scale) @ k.transpose(-2, -1)
+
+        if self.use_rel_pos:
+            attn = add_decomposed_rel_pos(attn, q, self.rel_pos_h, self.rel_pos_w, (H, W), (H, W))
+
+        attn = attn.softmax(dim=-1)
+        x = (attn @ v).view(B, self.num_heads, H, W, -1).permute(0, 2, 3, 1, 4).reshape(B, H, W, -1)
+        x = self.proj(x)
+
+        return x
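`Attention.forward` builds a full query-key score matrix per head, so the number of tokens it sees drives its cost. As a rough sketch, the numbers below compare a global-attention block with a windowed one, using the values that `build_GOT_vit_b` passes further down in this file (`embed_dim=768`, 12 heads, `window_size=14`, a 64x64 patch grid). The counting scheme is an illustration added here, not a measurement from the repository.

```python
embed_dim, num_heads = 768, 12          # values passed by build_GOT_vit_b
head_dim = embed_dim // num_heads
scale = head_dim ** -0.5                # same expression as in Attention.__init__

grid, window = 64, 14                   # 1024 // 16 patches per side, window_size=14
padded = -(-grid // window) * window    # round the grid up to a multiple of the window
num_windows = (padded // window) ** 2

# Number of attention scores (query-key pairs) per image, summed over heads.
global_scores = num_heads * (grid * grid) ** 2
window_scores = num_windows * num_heads * (window * window) ** 2

print(head_dim, scale, global_scores, window_scores)
# 64 0.125 201326592 11524800
```

Under these assumptions a windowed block computes roughly 17 times fewer scores than a global one, which suggests why only blocks 2, 5, 8 and 11 are configured for global attention.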

+
+
+def window_partition(x: torch.Tensor, window_size: int) -> Tuple[torch.Tensor, Tuple[int, int]]:
+    """
+    Partition into non-overlapping windows with padding if needed.
+    Args:
+        x (tensor): input tokens with [B, H, W, C].
+        window_size (int): window size.
+
+    Returns:
+        windows: windows after partition with [B * num_windows, window_size, window_size, C].
+        (Hp, Wp): padded height and width before partition
+    """
+    B, H, W, C = x.shape
+
+    pad_h = (window_size - H % window_size) % window_size
+    pad_w = (window_size - W % window_size) % window_size
+    if pad_h > 0 or pad_w > 0:
+        x = F.pad(x, (0, 0, 0, pad_w, 0, pad_h))
+    Hp, Wp = H + pad_h, W + pad_w
+
+    x = x.view(B, Hp // window_size, window_size, Wp // window_size, window_size, C)
+    windows = x.permute(0, 1, 3, 2, 4, 5).contiguous().view(-1, window_size, window_size, C)
+    return windows, (Hp, Wp)

+
+
+def window_unpartition(
+    windows: torch.Tensor, window_size: int, pad_hw: Tuple[int, int], hw: Tuple[int, int]
+) -> torch.Tensor:
+    """
+    Window unpartition into original sequences and removing padding.
+    Args:
+        windows (tensor): input tokens with [B * num_windows, window_size, window_size, C].
+        window_size (int): window size.
+        pad_hw (Tuple): padded height and width (Hp, Wp).
+        hw (Tuple): original height and width (H, W) before padding.
+
+    Returns:
+        x: unpartitioned sequences with [B, H, W, C].
+    """
+    Hp, Wp = pad_hw
+    H, W = hw
+    B = windows.shape[0] // (Hp * Wp // window_size // window_size)
+    x = windows.view(B, Hp // window_size, Wp // window_size, window_size, window_size, -1)
+    x = x.permute(0, 1, 3, 2, 4, 5).contiguous().view(B, Hp, Wp, -1)
+
+    if Hp > H or Wp > W:
+        x = x[:, :H, :W, :].contiguous()
+    return x
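`window_partition` pads the token grid up to a multiple of the window size, and `window_unpartition` crops that padding away again. The padding arithmetic can be checked without PyTorch. The sketch below repeats the two `pad_*` expressions for the 64x64 grid and `window_size=14` that `_build_GOT_vision` configures later in this file; `padded_grid` is a hypothetical helper name.

```python
def padded_grid(h: int, w: int, window_size: int):
    """Return (Hp, Wp, windows_per_image) as computed inside window_partition."""
    pad_h = (window_size - h % window_size) % window_size
    pad_w = (window_size - w % window_size) % window_size
    hp, wp = h + pad_h, w + pad_w
    return hp, wp, (hp // window_size) * (wp // window_size)


print(padded_grid(64, 64, 14))  # (70, 70, 25)
print(padded_grid(28, 42, 14))  # (28, 42, 6)
```

The outer `% window_size` is what keeps an already aligned grid (the second call) from receiving a full extra window of padding.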

+
+
+def get_rel_pos(q_size: int, k_size: int, rel_pos: torch.Tensor) -> torch.Tensor:
+    """
+    Get relative positional embeddings according to the relative positions of
+        query and key sizes.
+    Args:
+        q_size (int): size of query q.
+        k_size (int): size of key k.
+        rel_pos (Tensor): relative position embeddings (L, C).
+
+    Returns:
+        Extracted positional embeddings according to relative positions.
+    """
+    max_rel_dist = int(2 * max(q_size, k_size) - 1)
+    # Interpolate rel pos if needed.
+    if rel_pos.shape[0] != max_rel_dist:
+        # Interpolate rel pos.
+        rel_pos_resized = F.interpolate(
+            rel_pos.reshape(1, rel_pos.shape[0], -1).permute(0, 2, 1),
+            size=max_rel_dist,
+            mode="linear",
+        )
+        rel_pos_resized = rel_pos_resized.reshape(-1, max_rel_dist).permute(1, 0)
+    else:
+        rel_pos_resized = rel_pos
+
+    # Scale the coords with short length if shapes for q and k are different.
+    q_coords = torch.arange(q_size)[:, None] * max(k_size / q_size, 1.0)
+    k_coords = torch.arange(k_size)[None, :] * max(q_size / k_size, 1.0)
+    relative_coords = (q_coords - k_coords) + (k_size - 1) * max(q_size / k_size, 1.0)
+
+    return rel_pos_resized[relative_coords.long()]
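The last three statements of `get_rel_pos` build a table of row indices into the relative-position embedding: entry (i, j) says which row to use for query position i and key position j. The table can be reproduced in plain Python. This is a sketch with an illustrative name (`relative_index_table`); `int()` stands in for `.long()`, which behaves the same for the non-negative values that occur here.

```python
def relative_index_table(q_size: int, k_size: int):
    """Row i, column j holds the rel_pos row used for query i and key j."""
    q_scale = max(k_size / q_size, 1.0)
    k_scale = max(q_size / k_size, 1.0)
    offset = (k_size - 1) * k_scale  # shifts the most negative offset to index 0
    return [
        [int(i * q_scale - j * k_scale + offset) for j in range(k_size)]
        for i in range(q_size)
    ]


for row in relative_index_table(3, 3):
    print(row)
# [2, 1, 0]
# [3, 2, 1]
# [4, 3, 2]
```

For equal sizes the indices run from 0 to `2 * size - 2`, which is why `Attention.__init__` allocates `2 * input_size - 1` rows per axis.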

+
+
+def add_decomposed_rel_pos(
+    attn: torch.Tensor,
+    q: torch.Tensor,
+    rel_pos_h: torch.Tensor,
+    rel_pos_w: torch.Tensor,
+    q_size: Tuple[int, int],
+    k_size: Tuple[int, int],
+) -> torch.Tensor:
+    """
+    Args:
+        attn (Tensor): attention map.
+        q (Tensor): query q in the attention layer with shape (B, q_h * q_w, C).
+        rel_pos_h (Tensor): relative position embeddings (Lh, C) for height axis.
+        rel_pos_w (Tensor): relative position embeddings (Lw, C) for width axis.
+        q_size (Tuple): spatial sequence size of query q with (q_h, q_w).
+        k_size (Tuple): spatial sequence size of key k with (k_h, k_w).
+
+    Returns:
+        attn (Tensor): attention map with added relative positional embeddings.
+    """
+    q_h, q_w = q_size
+    k_h, k_w = k_size
+    Rh = get_rel_pos(q_h, k_h, rel_pos_h)
+    Rw = get_rel_pos(q_w, k_w, rel_pos_w)
+
+    B, _, dim = q.shape
+    r_q = q.reshape(B, q_h, q_w, dim)
+    rel_h = torch.einsum("bhwc,hkc->bhwk", r_q, Rh)
+    rel_w = torch.einsum("bhwc,wkc->bhwk", r_q, Rw)
+
+    attn = (
+        attn.view(B, q_h, q_w, k_h, k_w) + rel_h[:, :, :, :, None] + rel_w[:, :, :, None, :]
+    ).view(B, q_h * q_w, k_h * k_w)
+
+    return attn

+
+
+class PatchEmbed(nn.Module):
+    """
+    Image to Patch Embedding.
+    """
+
+    def __init__(
+        self,
+        kernel_size: Tuple[int, int] = (16, 16),
+        stride: Tuple[int, int] = (16, 16),
+        padding: Tuple[int, int] = (0, 0),
+        in_chans: int = 3,
+        embed_dim: int = 768,
+    ) -> None:
+        """
+        Args:
+            kernel_size (Tuple): kernel size of the projection layer.
+            stride (Tuple): stride of the projection layer.
+            padding (Tuple): padding size of the projection layer.
+            in_chans (int): Number of input image channels.
+            embed_dim (int): Patch embedding dimension.
+        """
+        super().__init__()
+
+        self.proj = nn.Conv2d(
+            in_chans, embed_dim, kernel_size=kernel_size, stride=stride, padding=padding
+        )
+
+    def forward(self, x: torch.Tensor) -> torch.Tensor:
+        x = self.proj(x)
+        # B C H W -> B H W C
+        x = x.permute(0, 2, 3, 1)
+        return x

|

| 427 |

+

|

| 428 |

+

|

| 429 |

+

|

| 430 |

+

def build_GOT_vit_b(checkpoint=None):

|

| 431 |

+

return _build_GOT_vision(

|

| 432 |

+

encoder_embed_dim=768,

|

| 433 |

+

encoder_depth=12,

|

| 434 |

+

encoder_num_heads=12,

|

| 435 |

+

encoder_global_attn_indexes=[2, 5, 8, 11],

|

| 436 |

+

checkpoint=checkpoint,

|

| 437 |

+

)

|

| 438 |

+

|

| 439 |

+

|

| 440 |

+

def _build_GOT_vision(

|

| 441 |

+

encoder_embed_dim,

|

| 442 |

+

encoder_depth,

|

| 443 |

+

encoder_num_heads,

|

| 444 |

+

encoder_global_attn_indexes,

|

| 445 |

+

checkpoint=None,

|

| 446 |

+

):

|

| 447 |

+

prompt_embed_dim = 256

|

| 448 |

+

image_size = 1024

|

| 449 |

+

vit_patch_size = 16

|

| 450 |

+

image_embedding_size = image_size // vit_patch_size

|

| 451 |

+

image_encoder = ImageEncoderViT(

|

| 452 |

+

depth=encoder_depth,

|

| 453 |

+

embed_dim=encoder_embed_dim,

|

| 454 |

+

img_size=image_size,

|

| 455 |

+

mlp_ratio=4,

|

| 456 |

+

norm_layer=partial(torch.nn.LayerNorm, eps=1e-6),

|

| 457 |

+

num_heads=encoder_num_heads,

|

| 458 |

+

patch_size=vit_patch_size,

|

| 459 |

+

qkv_bias=True,

|

| 460 |

+

use_rel_pos=True,

|

| 461 |

+

global_attn_indexes=encoder_global_attn_indexes,

|

| 462 |

+

window_size=14,

|

| 463 |

+

out_chans=prompt_embed_dim,

|

| 464 |

+

)

|

| 465 |

+

|

| 466 |

+

|

| 467 |

+

return image_encoder

|

| 468 |

+

|
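
Note on the builder above: `_build_GOT_vision` sets `image_size = 1024` and `vit_patch_size = 16`, and `PatchEmbed` projects the image with a `Conv2d` whose kernel and stride both default to `(16, 16)` with zero padding. The sketch below checks, with plain arithmetic only, that the standard convolution output-size formula gives the same grid size as `image_size // vit_patch_size`. The helper name `conv_out_size` is hypothetical and not part of `got_vision_b.py`; dilation 1 is assumed.

```python
def conv_out_size(n: int, kernel: int, stride: int, padding: int = 0) -> int:
    """Output length along one axis of a convolution (dilation assumed to be 1)."""
    return (n + 2 * padding - kernel) // stride + 1


# Values copied from _build_GOT_vision and the PatchEmbed defaults in the diff.
image_size = 1024
patch = 16

# Patches per side after the Conv2d projection in PatchEmbed.
grid = conv_out_size(image_size, kernel=patch, stride=patch, padding=0)

# Total number of patch tokens produced for one square input image.
tokens = grid * grid

print(grid, tokens)
```

With a non-overlapping projection (kernel equal to stride, no padding) the formula reduces to integer division, which is why the builder can compute `image_embedding_size` as `image_size // vit_patch_size`.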

model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:77d6144039548b14253176b6eb264896bc39eba532f8894700f210a7fd2a5956

|

| 3 |

+

size 1432121416

|
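
Note on the pointer above: the three added lines are a Git LFS pointer, not the weights themselves. A minimal sketch of reading such a pointer follows, assuming the simple `key value` line layout shown in the diff. The function name `parse_lfs_pointer` and the returned dictionary keys are hypothetical, chosen only for illustration.

```python
def parse_lfs_pointer(text: str) -> dict:
    """Parse 'key value' lines of a Git LFS pointer into a small dict."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    # The oid field has the form '<hash algorithm>:<hex digest>'.
    algo, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "hash_algo": algo,
        "digest": digest,
        "size_bytes": int(fields["size"]),
    }


# Pointer text copied from the model.safetensors entry in this commit.
pointer = """version https://git-lfs.github.com/spec/v1
oid sha256:77d6144039548b14253176b6eb264896bc39eba532f8894700f210a7fd2a5956
size 1432121416
"""

info = parse_lfs_pointer(pointer)
size_gib = info["size_bytes"] / 2**30  # bytes to GiB
print(info["hash_algo"], len(info["digest"]), round(size_gib, 2))
```

The `size` field is the byte count of the real file, so the checkpoint referenced here is roughly 1.33 GiB.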

modeling_GOT.py

ADDED

|

@@ -0,0 +1,881 @@

|
