Update README.md

README.md

CHANGED

@@ -12,9 +12,9 @@ language:

---

## Why prune?

-

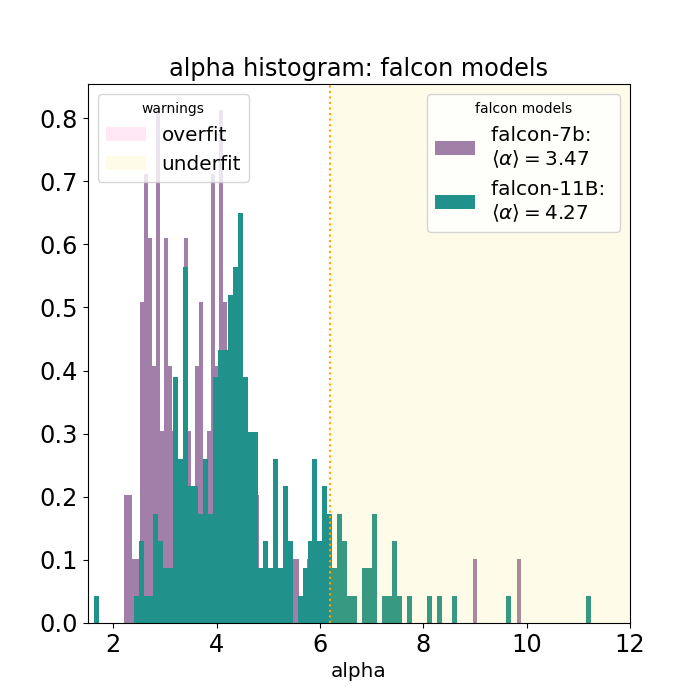

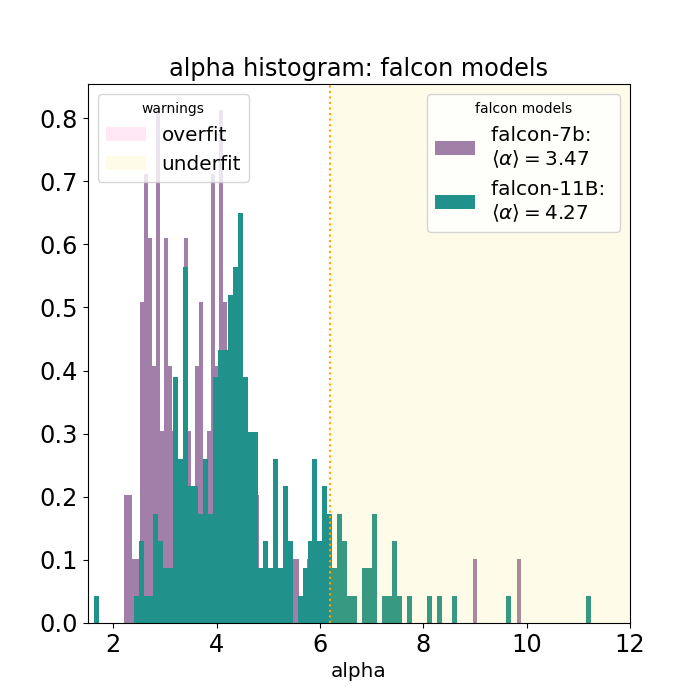

Falcon-11B is still undertrained, as can be seen by this graph:

-This is why the choice is made

Note that \~1B of continued pre-training (\~1M rows of 1k tokens) is still required to restore the perplexity of this model in the desired language.

I'm planning on doing that for certain languages, depending on how much compute will be available.

+Even though [Falcon-11B](https://huggingface.co/tiiuae/falcon-11B) is trained on 5T tokens, it is still undertrained, as can be seen by this graph:

+This is why the choice is made to prune 50% of the layers.
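As a rough illustration of what "prune 50% of the layers" can mean in practice, here is a minimal sketch that picks which layer indices survive when one contiguous block is removed. The README does not say which layers are dropped, so the helper names `layers_to_keep` and `prune_layers`, the `start` parameter, and the default of cutting the block out of the middle of the stack are all assumptions made for illustration, not the author's method.

```python
from typing import List, Optional, Sequence, TypeVar

T = TypeVar("T")


def layers_to_keep(
    num_layers: int,
    prune_fraction: float = 0.5,
    start: Optional[int] = None,
) -> List[int]:
    """Indices of the layers that survive removing one contiguous block.

    Hypothetical helper: the position of the removed block (`start`) is an
    assumption; the README only states that 50% of the layers are pruned.
    """
    if not 0.0 <= prune_fraction < 1.0:
        raise ValueError("prune_fraction must be in [0, 1)")
    n_drop = int(num_layers * prune_fraction)  # number of layers to remove, rounded down
    if start is None:
        # Assumed default: centre the removed block in the stack.
        start = (num_layers - n_drop) // 2
    if start < 0 or start + n_drop > num_layers:
        raise ValueError("pruned block does not fit inside the layer stack")
    dropped = set(range(start, start + n_drop))
    return [i for i in range(num_layers) if i not in dropped]


def prune_layers(layers: Sequence[T], keep: Sequence[int]) -> List[T]:
    """Return only the layers whose indices are listed in `keep`, in order."""
    return [layers[i] for i in keep]


if __name__ == "__main__":
    # Toy stand-in for a stack of decoder layers.
    toy_layers = [f"layer_{i}" for i in range(8)]
    keep = layers_to_keep(len(toy_layers))
    print(keep)
    print(prune_layers(toy_layers, keep))
```

With a PyTorch model, the surviving modules would typically be wrapped back into a `torch.nn.ModuleList` and the layer count in the model config updated to match. The exact attribute names depend on the model implementation.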
