from langchain.prompts import PromptTemplate

from langchain_core.output_parsers import JsonOutputParser, StrOutputParser

from langchain_community.chat_models import ChatOllama

from langchain_huggingface import ChatHuggingFace, HuggingFaceEndpoint

from langchain_core.tools import Tool

from langchain_google_community import GoogleSearchAPIWrapper

from firecrawl import FirecrawlApp

import gradio as gr

import os
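
# A minimal sketch, not part of the original script: the os.environ assignments
# further down raise a TypeError when a secret is unset, because os.getenv()
# returns None. `require_env` is a hypothetical helper that fails with a
# clearer message instead.
def require_env(name):
    """Return the value of environment variable `name`; raise if it is unset or empty."""
    value = os.getenv(name)
    if not value:
        raise RuntimeError(f"Missing required environment variable: {name}")
    return value

# Possible usage (assumption): os.environ["HUGGINGFACEHUB_API_TOKEN"] = require_env("HF_KEY")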

# Using Ollama (only in Local)

# -----------------------------

# local_llm = 'llama3.1'

# llama3 = ChatOllama(model=local_llm, temperature=1)

# llama3_json = ChatOllama(model=local_llm, format='json', temperature=0)

# Hugging Face Initialization

os.environ["HUGGINGFACEHUB_API_TOKEN"] = os.getenv('HF_KEY')

os.environ["GOOGLE_CSE_ID"] = os.getenv('GOOGLE_CSE_ID')

os.environ["GOOGLE_API_KEY"] = os.getenv('GOOGLE_API_KEY')

# Llama Endpoint

llm = HuggingFaceEndpoint(
    repo_id="meta-llama/Meta-Llama-3.1-8B-Instruct",
    task="text-generation",
    max_new_tokens=4000,
    do_sample=False,
    repetition_penalty=1.03,
)

llama3 = ChatHuggingFace(llm=llm, temperature = 1)

llama3_json = ChatHuggingFace(llm=llm, format = 'json', temperature = 0)

# Search & Scrape Initialization

google_search = GoogleSearchAPIWrapper()

firecrawl_app = FirecrawlApp(api_key=os.getenv('FIRECRAWL_KEY'))

# Keyword Generation

query_prompt = PromptTemplate(
    template="""
<|begin_of_text|>
<|start_header_id|>system<|end_header_id|>

You are an expert at crafting web search queries for fact-checking.
Users often provide a piece of information they want to fact-check, but it may not be phrased well for searching.
Reword their input into the most effective web search string possible.
Return JSON with a single key 'query' and no preamble or explanation.

Information to transform: {question}
<|eot_id|>
<|start_header_id|>assistant<|end_header_id|>
""",
    input_variables=["question"],
)

# Keyword Chain

query_chain = query_prompt | llama3_json | JsonOutputParser()

# Google Search and Firecrawl Setup

def search_and_scrape(keyword):
    """Search Google for `keyword` and scrape the top 3 results as markdown."""
    search_results = google_search.results(keyword, 3)
    scraped_data = []
    for result in search_results:
        url = result['link']
        scrape_response = firecrawl_app.scrape_url(url=url, params={'formats': ['markdown']})
        scraped_data.append(scrape_response)
    return scraped_data
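
# A minimal sketch, not part of the original flow: a single failed scrape
# aborts the whole request in search_and_scrape. `scrape_all` is a hypothetical
# helper that skips URLs whose scrape raises; `scrape_fn` stands in for a call
# such as firecrawl_app.scrape_url.
def scrape_all(urls, scrape_fn):
    """Call scrape_fn on each URL and return only the successful responses."""
    scraped = []
    for url in urls:
        try:
            scraped.append(scrape_fn(url))
        except Exception as exc:  # broad on purpose: the failure modes are not known here
            print(f"Skipping {url}: {exc}")
    return scraped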

# Summarizer

summarize_prompt = PromptTemplate(
    template="""
<|begin_of_text|>
<|start_header_id|>system<|end_header_id|>

You are an expert at summarizing web crawling results. The user will give you multiple web search results on different topics. Your task is to summarize all the important information from the articles in a readable paragraph. It is okay if one paragraph contains multiple topics.

Information to transform: {question}
<|eot_id|>
<|start_header_id|>assistant<|end_header_id|>
""",
    input_variables=["question"],
)

# Summarizer Chain

summarize_chain = summarize_prompt | llama3 | StrOutputParser()

# Fact Checker

generate_prompt = PromptTemplate(
    template="""
<|begin_of_text|>
<|start_header_id|>system<|end_header_id|>

You are a fact-checker AI assistant that receives a piece of information from the user, synthesizes web search results for that information, and verifies whether the user's information is a fact or possibly a hoax.
Strictly use the following pieces of web search context to answer the question. If you don't know the answer, just give a "Possibly Hoax" verdict. Only make direct references to material if provided in the context.

Return a JSON output with these keys, with no preamble:
1. user_information: the user's input
2. system_verdict: is the user's information above a fact? Choose only between "Fact" or "Possibly Hoax"
3. explanation: a short explanation of why the verdict was chosen

If the context does not relate to the information provided by the user, you can give a "Possibly Hoax" result and tell the user that, based on the web search, the provided information appears to be false.
<|eot_id|>
<|start_header_id|>user<|end_header_id|>

User Information: {question}
Web Search Context: {context}
JSON Verdict and Explanation:
<|eot_id|>
<|start_header_id|>assistant<|end_header_id|>
""",
    input_variables=["question", "context"],
)

# Fact Checker Chain

generate_chain = generate_prompt | llama3_json | JsonOutputParser()

# Full Flow Function

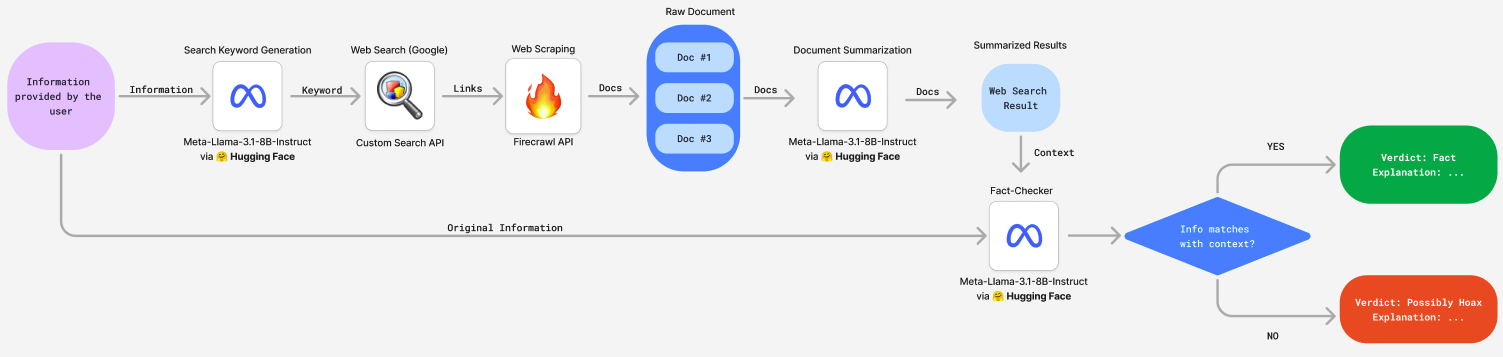

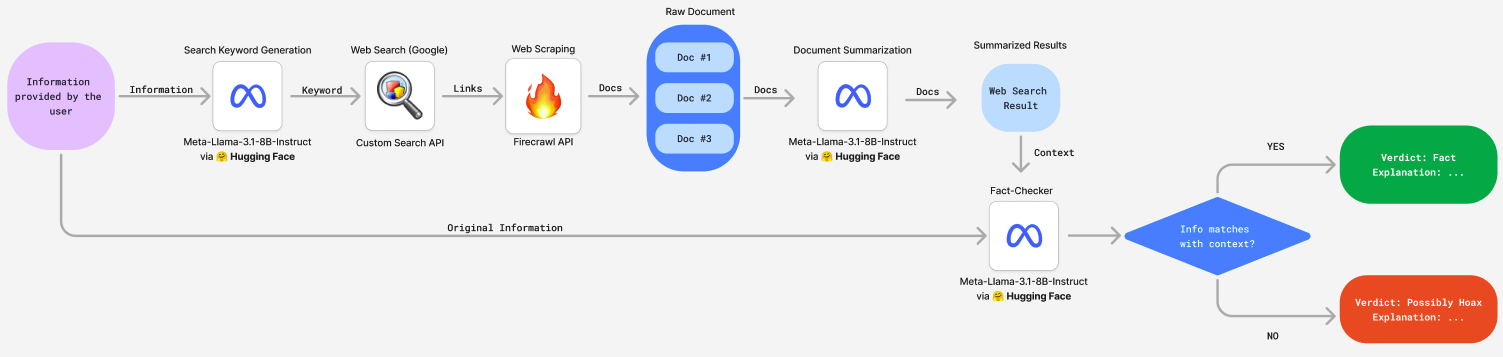

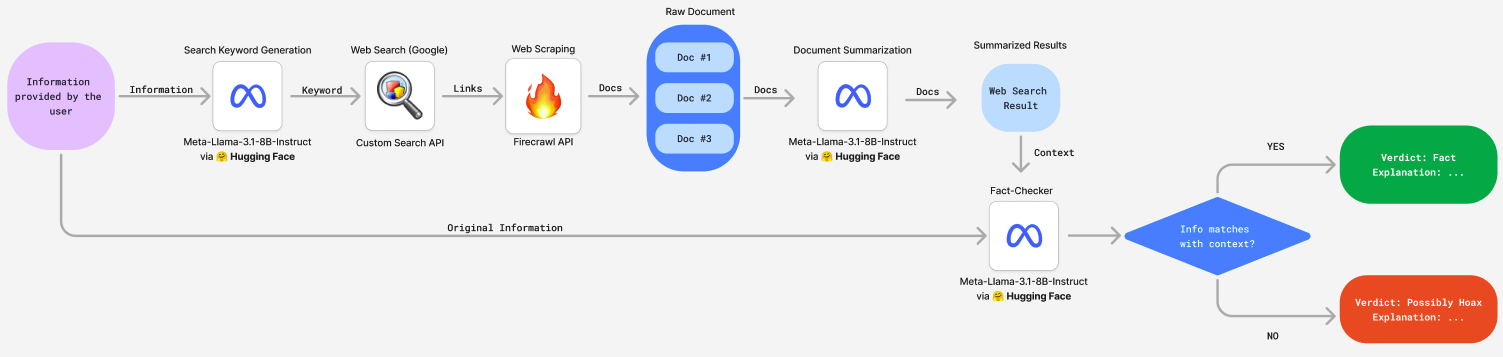

def fact_check_flow(user_question):
    """Run the full pipeline: build a query, search and scrape, summarize, then fact-check."""
    # Generate Keyword
    keyword = query_chain.invoke({"question": user_question})["query"]

    # Search & Scrape Results
    context_data = search_and_scrape(keyword)

    # Join Results
    final_markdown = ' '.join(results['markdown'] for results in context_data)

    # Summarize Results
    context = summarize_chain.invoke({"question": final_markdown})

    # Use Information + Context to Fact Check
    final_response = generate_chain.invoke({"question": user_question, "context": context})

    # Process Output
    verdict = final_response['system_verdict']
    explanation = final_response['explanation']

    # Assumption: the exact HTML markup is not recoverable from the source, so the
    # tags and colours here are illustrative (green for "Fact", red otherwise).
    if verdict == "Fact":
        verdict_html = f"<p style='color: green;'>{verdict}</p>"
    else:
        verdict_html = f"<p style='color: red;'>{verdict}</p>"
    explanation_html = f"<p>{explanation}</p>"

    return verdict_html + explanation_html
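
# A minimal sketch, not part of the original app: the verdict and explanation
# come from the model and are rendered through outputs="html", so escaping them
# first keeps stray markup out of the page. `render_result` and its tag and
# colour choices are hypothetical.
import html

def render_result(verdict, explanation):
    """Build an HTML snippet with both model-produced strings escaped."""
    color = "green" if verdict == "Fact" else "red"
    return (
        f"<p style='color: {color};'>{html.escape(str(verdict))}</p>"
        f"<p>{html.escape(str(explanation))}</p>"
    )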

demo = gr.Interface(
    fn=fact_check_flow,
    inputs=gr.Textbox(label="Input any information you want to fact-check!"),
    outputs="html",
    title="Aletheia: Llama-Powered Fact-Checker AI Agent 🤖",
    description="""
*"Aletheia: Llama-Powered Fact-Checker AI Agent"* is an experimental fact-checking tool designed to help users verify the accuracy of information quickly and easily. It combines Large Language Models (LLMs) with real-time web crawling to assess the validity of user-provided information. The tool is a prototype, submitted by the author to Meta's Llama Hackathon 2024, and demonstrates the potential for Llama to assist in information verification.

Important Note:

Due to current resource constraints, you need to restart the Space each time you use this app to ensure it functions correctly (go to "Settings" > "Restart Space"). This is a known issue and will be improved in future iterations (source: https://huggingface.co./spaces/huggingchat/chat-ui/discussions/430)
"""
)

if __name__ == "__main__":

demo.launch()