Commit f69cd15 by lixiang46 (1 parent: fe70090)

init

Browse files- .gitattributes +3 -0

- README.md +2 -2

- app.py +51 -126

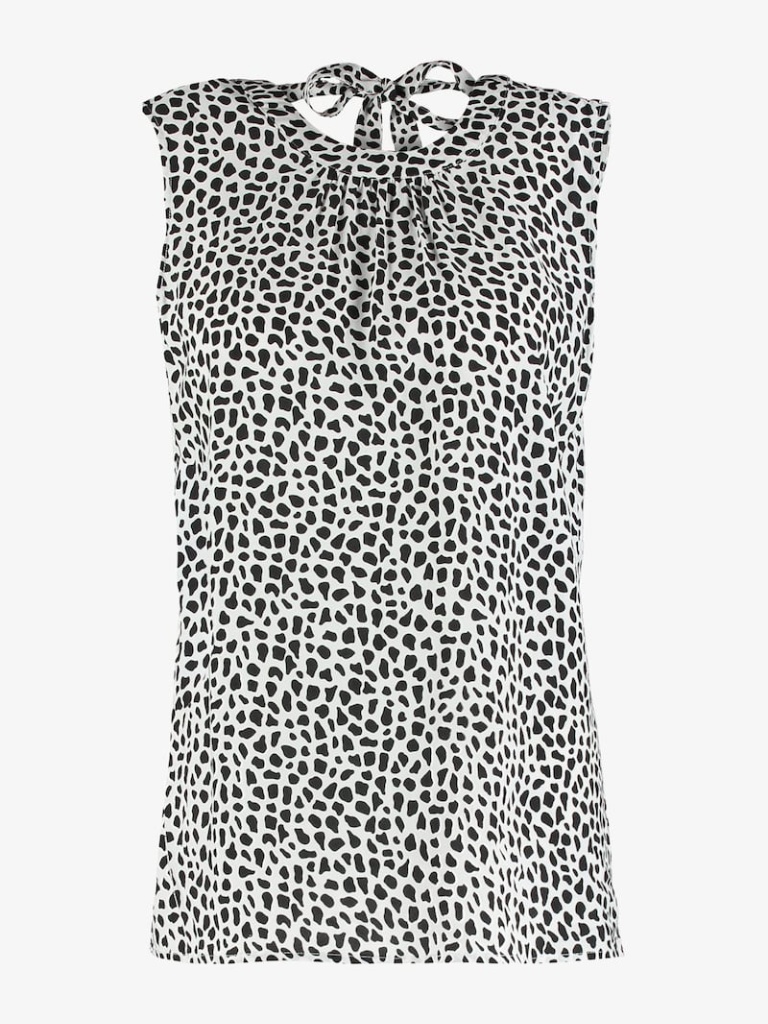

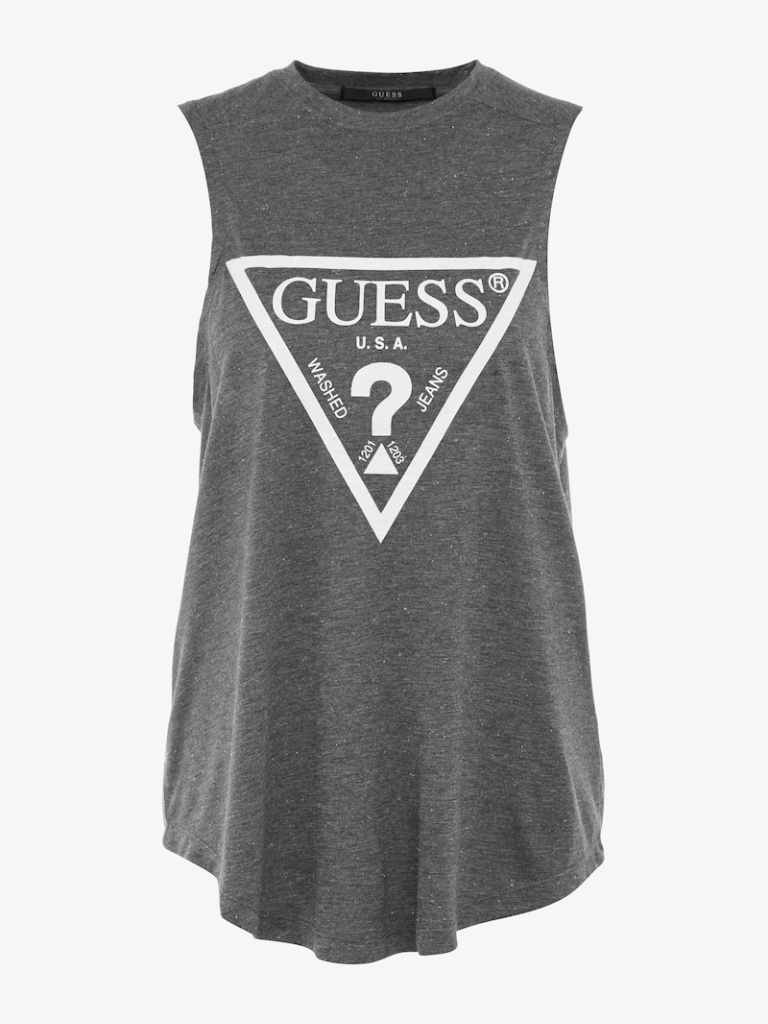

- assets/cloth/04469_00.jpg +0 -0

- assets/cloth/04743_00.jpg +0 -0

- assets/cloth/09133_00.jpg +0 -0

- assets/cloth/09163_00.jpg +0 -0

- assets/cloth/09164_00.jpg +0 -0

- assets/cloth/09166_00.jpg +0 -0

- assets/cloth/09176_00.jpg +0 -0

- assets/cloth/09236_00.jpg +0 -0

- assets/cloth/09256_00.jpg +0 -0

- assets/cloth/09263_00.jpg +0 -0

- assets/cloth/09266_00.jpg +0 -0

- assets/cloth/09290_00.jpg +0 -0

- assets/cloth/09305_00.jpg +0 -0

- assets/cloth/10165_00.jpg +0 -0

- assets/cloth/14627_00.jpg +0 -0

- assets/cloth/14673_00.jpg +0 -0

- assets/human/00034_00.jpg +0 -0

- assets/human/00035_00.jpg +0 -0

- assets/human/00055_00.jpg +0 -0

- assets/human/00121_00.jpg +0 -0

- assets/human/01992_00.jpg +0 -0

- assets/human/Jensen.jpeg +0 -0

- assets/human/sam1 (1).jpg +0 -0

- assets/human/taylor-.jpg +0 -0

- assets/human/will1 (1).jpg +0 -0

- assets/title.md +3 -0

.gitattributes

CHANGED

    @@ -33,3 +33,6 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
     *.zip filter=lfs diff=lfs merge=lfs -text
     *.zst filter=lfs diff=lfs merge=lfs -text
     *tfevents* filter=lfs diff=lfs merge=lfs -text
    +assets/cloth filter=lfs diff=lfs merge=lfs -text
    +assets/human filter=lfs diff=lfs merge=lfs -text
    +assets/title.md filter=lfs diff=lfs merge=lfs -text

README.md

CHANGED

    @@ -2,9 +2,9 @@
     title: Kolors Tryon
     emoji: 🖼
     colorFrom: purple
    -colorTo:
    +colorTo: gray
     sdk: gradio
    -sdk_version: 4.
    +sdk_version: 4.38.1
     app_file: app.py
     pinned: false
     license: apache-2.0

app.py

CHANGED

    @@ -1,146 +1,71 @@
    +import sys
    +import os
    +import io
    +from PIL import Image
     import gradio as gr
     import numpy as np
     import random
    -from diffusers import DiffusionPipeline
    -import torch
     
    -
    +example_path = os.path.join(os.path.dirname(__file__), 'assets')
     
    -
    -    torch.cuda.max_memory_allocated(device=device)
    -    pipe = DiffusionPipeline.from_pretrained("stabilityai/sdxl-turbo", torch_dtype=torch.float16, variant="fp16", use_safetensors=True)
    -    pipe.enable_xformers_memory_efficient_attention()
    -    pipe = pipe.to(device)
    -else:
    -    pipe = DiffusionPipeline.from_pretrained("stabilityai/sdxl-turbo", use_safetensors=True)
    -    pipe = pipe.to(device)
    +MAX_SEED = 999999
     
    -
    -MAX_IMAGE_SIZE = 1024
    -
    -def infer(prompt, negative_prompt, seed, randomize_seed, width, height, guidance_scale, num_inference_steps):
    -
    -    if randomize_seed:
    -        seed = random.randint(0, MAX_SEED)
    -
    -    generator = torch.Generator().manual_seed(seed)
    -
    -    image = pipe(
    -        prompt = prompt,
    -        negative_prompt = negative_prompt,
    -        guidance_scale = guidance_scale,
    -        num_inference_steps = num_inference_steps,
    -        width = width,
    -        height = height,
    -        generator = generator
    -    ).images[0]
    +def start_tryon(imgs, garm_img, garment_des, seed):
     
    -    return
    +    return None
     
    -
    -
    -
    -
    -]
    +garm_list = os.listdir(os.path.join(example_path,"cloth"))
    +garm_list_path = [os.path.join(example_path,"cloth",garm) for garm in garm_list]
    +
    +human_list = os.listdir(os.path.join(example_path,"human"))
    +human_list_path = [os.path.join(example_path,"human",human) for human in human_list]
     
     css="""
    -#col-
    +#col-left {
    +    margin: 0 auto;
    +    max-width: 600px;
    +}
    +#col-right {
         margin: 0 auto;
    -    max-width:
    +    max-width: 750px;
    +}
    +#button {
    +    color: blue;
     }
     """
     
    -
    -
    -
    -
    +def load_description(fp):
    +    with open(fp, 'r', encoding='utf-8') as f:
    +        content = f.read()
    +    return content
     
    -with gr.Blocks(css=css) as
    -
    -    with gr.
    -        gr.
    -
    -
    -
    -
    -
    -
    -            prompt = gr.Text(
    -                label="Prompt",
    -                show_label=False,
    -                max_lines=1,
    -                placeholder="Enter your prompt",
    -                container=False,
    +with gr.Blocks(css=css) as Tryon:
    +    gr.HTML(load_description("assets/title.md"))
    +    with gr.Row():
    +        with gr.Column():
    +            imgs = gr.Image(label="Person image", sources='upload', type="pil")
    +            # category = gr.Dropdown(label="Garment category", choices=['upper_body', 'lower_body', 'dresses'], value="upper_body")
    +            example = gr.Examples(
    +                inputs=imgs,
    +                examples_per_page=10,
    +                examples=human_list_path
                 )
    -
    -
    -
    -
    +        with gr.Column():
    +            garm_img = gr.Image(label="Garment image", sources='upload', type="pil")
    +            example = gr.Examples(
    +                inputs=garm_img,
    +                examples_per_page=8,
    +                examples=garm_list_path)
    +        with gr.Column():
    +            image_out = gr.Image(label="Output", elem_id="output-img",show_share_button=False)
    +            try_button = gr.Button(value="Try-on")
     
    -
    -
    -
    -                label="Negative prompt",
    -                max_lines=1,
    -                placeholder="Enter a negative prompt",
    -                visible=False,
    -            )
    -
    -            seed = gr.Slider(
    -                label="Seed",
    -                minimum=0,
    -                maximum=MAX_SEED,
    -                step=1,
    -                value=0,
    -            )
    -
    -            randomize_seed = gr.Checkbox(label="Randomize seed", value=True)
    -
    -            with gr.Row():
    -
    -                width = gr.Slider(
    -                    label="Width",
    -                    minimum=256,
    -                    maximum=MAX_IMAGE_SIZE,
    -                    step=32,
    -                    value=512,
    -                )
    -
    -                height = gr.Slider(
    -                    label="Height",
    -                    minimum=256,
    -                    maximum=MAX_IMAGE_SIZE,
    -                    step=32,
    -                    value=512,
    -                )
    -
    +
    +    with gr.Column():
    +        with gr.Accordion(label="Advanced Settings", open=False):
                 with gr.Row():
    -
    -                guidance_scale = gr.Slider(
    -                    label="Guidance scale",
    -                    minimum=0.0,
    -                    maximum=10.0,
    -                    step=0.1,
    -                    value=0.0,
    -                )
    -
    -                num_inference_steps = gr.Slider(
    -                    label="Number of inference steps",
    -                    minimum=1,
    -                    maximum=12,
    -                    step=1,
    -                    value=2,
    -                )
    -
    -        gr.Examples(
    -            examples = examples,
    -            inputs = [prompt]
    -        )
    +                seed = gr.Number(label="Seed", minimum=-1, maximum=2147483647, step=1, value=None)
     
    -
    -        fn = infer,
    -        inputs = [prompt, negative_prompt, seed, randomize_seed, width, height, guidance_scale, num_inference_steps],
    -        outputs = [result]
    -    )
    +    try_button.click(fn=start_tryon, inputs=[imgs, garm_img, seed], outputs=[image_out], api_name='tryon')
     
    -
    +Tryon.queue(max_size=10).launch()
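Note on the added app.py: `start_tryon` is declared with four parameters (`imgs`, `garm_img`, `garment_des`, `seed`), while `try_button.click` lists only three input components (`imgs`, `garm_img`, `seed`). Since an event handler is normally called with one positional argument per input component, the click would likely fail with a missing-argument `TypeError`. The following is a minimal sketch, not the author's fix: it assumes `garment_des` is unused and simply drops it, and the helper `accepts_n_positional` is a hypothetical name introduced here to check the arity without launching the UI.

```python
import inspect


def start_tryon(imgs, garm_img, seed):
    """Stub handler: one parameter per component in the click inputs list.

    Assumption: the garment description is not needed, so it is omitted.
    """
    return None


def accepts_n_positional(fn, n):
    """Return True if fn can be called with exactly n positional arguments."""
    try:
        # Signature.bind raises TypeError when the arguments do not fit.
        inspect.signature(fn).bind(*([None] * n))
    except TypeError:
        return False
    return True


# The click handler in the diff passes three inputs: imgs, garm_img, seed.
click_inputs = ["imgs", "garm_img", "seed"]
print(accepts_n_positional(start_tryon, len(click_inputs)))
```

An alternative with the same effect would be to keep the four-parameter signature and add a text component for `garment_des` to the `inputs` list; which of the two matches the intended design is not stated in the commit.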

- assets/cloth/04469_00.jpg ADDED
- assets/cloth/04743_00.jpg ADDED
- assets/cloth/09133_00.jpg ADDED
- assets/cloth/09163_00.jpg ADDED
- assets/cloth/09164_00.jpg ADDED
- assets/cloth/09166_00.jpg ADDED
- assets/cloth/09176_00.jpg ADDED
- assets/cloth/09236_00.jpg ADDED
- assets/cloth/09256_00.jpg ADDED
- assets/cloth/09263_00.jpg ADDED
- assets/cloth/09266_00.jpg ADDED
- assets/cloth/09290_00.jpg ADDED
- assets/cloth/09305_00.jpg ADDED
- assets/cloth/10165_00.jpg ADDED
- assets/cloth/14627_00.jpg ADDED
- assets/cloth/14673_00.jpg ADDED
- assets/human/00034_00.jpg ADDED
- assets/human/00035_00.jpg ADDED
- assets/human/00055_00.jpg ADDED
- assets/human/00121_00.jpg ADDED
- assets/human/01992_00.jpg ADDED
- assets/human/Jensen.jpeg ADDED
- assets/human/sam1 (1).jpg ADDED
- assets/human/taylor-.jpg ADDED
- assets/human/will1 (1).jpg ADDED


assets/title.md

ADDED

    @@ -0,0 +1,3 @@
    +version https://git-lfs.github.com/spec/v1
    +oid sha256:ee7fff5b3874ec80927627cdec9b2e5773cf257952b81fd280c4458993dc1894
    +size 598
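Because this commit adds `assets/title.md` to `.gitattributes` with `filter=lfs`, the diff shows the Git LFS pointer file rather than the Markdown content. A pointer is a short text file of `key value` lines. As a rough sketch of how such a pointer can be read, the helper below (the name `parse_lfs_pointer` is hypothetical, introduced only for illustration) splits the three lines shown above into fields.

```python
def parse_lfs_pointer(text):
    """Parse the 'key value' lines of a Git LFS pointer file into a dict."""
    fields = {}
    for line in text.splitlines():
        if not line.strip():
            continue  # ignore blank lines
        key, _, value = line.partition(" ")
        fields[key] = value
    # The oid field has the form "<hash algorithm>:<hex digest>".
    algo, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "oid_algo": algo,
        "oid": digest,
        "size": int(fields["size"]),  # size of the real file in bytes
    }


# Pointer content copied from the assets/title.md diff in this commit.
pointer = parse_lfs_pointer(
    "version https://git-lfs.github.com/spec/v1\n"
    "oid sha256:ee7fff5b3874ec80927627cdec9b2e5773cf257952b81fd280c4458993dc1894\n"
    "size 598\n"
)
print(pointer["oid_algo"], pointer["size"])
```

The `size` field here says the actual `title.md` is 598 bytes; its text is not part of this diff.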