Upload folder using huggingface_hub

This view is limited to 50 files because it contains too many changes.

- .gitattributes +4 -0

- .gitignore +18 -0

- MIST_logo.png +0 -0

- README.md +238 -7

- assets/MIST_V2_LOGO.png +0 -0

- assets/effect_show.png +0 -0

- assets/output_image.png +0 -0

- assets/output_image_box.png +0 -0

- assets/robustness.png +3 -0

- assets/user_2.jpg +3 -0

- assets/user_case_1.png +3 -0

- assets/user_case_2.png +3 -0

- attacks/mist.py +1156 -0

- attacks/utils.py +113 -0

- data/MIST.png +0 -0

- eval/sample_lora_15.ipynb +0 -0

- eval/train_dreambooth_lora_15.py +1007 -0

- ldm/configs/karlo/decoder_900M_vit_l.yaml +37 -0

- ldm/configs/karlo/improved_sr_64_256_1.4B.yaml +27 -0

- ldm/configs/karlo/prior_1B_vit_l.yaml +21 -0

- ldm/configs/stable-diffusion/intel/v2-inference-bf16.yaml +71 -0

- ldm/configs/stable-diffusion/intel/v2-inference-fp32.yaml +70 -0

- ldm/configs/stable-diffusion/intel/v2-inference-v-bf16.yaml +72 -0

- ldm/configs/stable-diffusion/intel/v2-inference-v-fp32.yaml +71 -0

- ldm/configs/stable-diffusion/v2-1-stable-unclip-h-inference.yaml +80 -0

- ldm/configs/stable-diffusion/v2-1-stable-unclip-l-inference.yaml +83 -0

- ldm/configs/stable-diffusion/v2-inference-v.yaml +68 -0

- ldm/configs/stable-diffusion/v2-inference.yaml +67 -0

- ldm/configs/stable-diffusion/v2-inpainting-inference.yaml +158 -0

- ldm/configs/stable-diffusion/v2-midas-inference.yaml +74 -0

- ldm/configs/stable-diffusion/x4-upscaling.yaml +76 -0

- ldm/data/__init__.py +0 -0

- ldm/data/util.py +24 -0

- ldm/models/autoencoder.py +219 -0

- ldm/models/diffusion/__init__.py +0 -0

- ldm/models/diffusion/ddim.py +337 -0

- ldm/models/diffusion/ddpm.py +1884 -0

- ldm/models/diffusion/dpm_solver/__init__.py +1 -0

- ldm/models/diffusion/dpm_solver/dpm_solver.py +1163 -0

- ldm/models/diffusion/dpm_solver/sampler.py +96 -0

- ldm/models/diffusion/plms.py +245 -0

- ldm/models/diffusion/sampling_util.py +22 -0

- ldm/modules/attention.py +341 -0

- ldm/modules/diffusionmodules/__init__.py +0 -0

- ldm/modules/diffusionmodules/model.py +852 -0

- ldm/modules/diffusionmodules/openaimodel.py +807 -0

- ldm/modules/diffusionmodules/upscaling.py +81 -0

- ldm/modules/diffusionmodules/util.py +278 -0

- ldm/modules/distributions/__init__.py +0 -0

- ldm/modules/distributions/distributions.py +92 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,7 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

assets/robustness.png filter=lfs diff=lfs merge=lfs -text

|

| 37 |

+

assets/user_2.jpg filter=lfs diff=lfs merge=lfs -text

|

| 38 |

+

assets/user_case_1.png filter=lfs diff=lfs merge=lfs -text

|

| 39 |

+

assets/user_case_2.png filter=lfs diff=lfs merge=lfs -text

|

.gitignore

ADDED

|

@@ -0,0 +1,18 @@

|

| 1 |

+

*.bin

|

| 2 |

+

*.ckpt

|

| 3 |

+

*.pt

|

| 4 |

+

logs/

|

| 5 |

+

*.safetensors

|

| 6 |

+

*.jpg

|

| 7 |

+

*.png

|

| 8 |

+

!data/MIST.png

|

| 9 |

+

!/MIST_logo.png

|

| 10 |

+

*.zip

|

| 11 |

+

__pycache__/

|

| 12 |

+

stable-diffusion/*/

|

| 13 |

+

test/

|

| 14 |

+

*.pkl

|

| 15 |

+

data/training/*

|

| 16 |

+

output/lora/*

|

| 17 |

+

output/mist/*

|

| 18 |

+

!assets/*

|

MIST_logo.png

ADDED

|

README.md

CHANGED

|

@@ -1,12 +1,243 @@

|

|

| 1 |

---

|

| 2 |

-

title:

|

| 3 |

-

|

| 4 |

-

colorFrom: blue

|

| 5 |

-

colorTo: gray

|

| 6 |

sdk: gradio

|

| 7 |

sdk_version: 4.11.0

|

| 8 |

-

app_file: app.py

|

| 9 |

-

pinned: false

|

| 10 |

---

|

| 11 |

|

| 12 |

-

Check out the configuration reference at https://huggingface.co/docs/hub/spaces-config-reference

|

|

|

|

| 1 |

---

|

| 2 |

+

title: mist-v2

|

| 3 |

+

app_file: mist-webui.py

|

|

|

|

|

|

|

| 4 |

sdk: gradio

|

| 5 |

sdk_version: 4.11.0

|

|

|

|

|

|

|

| 6 |

---

|

| 7 |

+

<p align="center">

|

| 8 |

+

<br>

|

| 9 |

+

<!-- <img src="mist_logo.png"> -->

|

| 10 |

+

<img src="assets/MIST_V2_LOGO.png">

|

| 11 |

+

<br>

|

| 12 |

+

</p>

|

| 13 |

+

|

| 14 |

+

|

| 15 |

+

[Project page](https://mist-project.github.io/index_en.html)

|

| 16 |

+

[arXiv](https://arxiv.org/abs/2310.04687)

|

| 17 |

+

<!--

|

| 18 |

+

[](https://arxiv.org/abs/2310.04687)

|

| 19 |

+

-->

|

| 20 |

+

<!--

|

| 21 |

+

### [project page](https://mist-project.github.io) | [arxiv](https://arxiv.org/abs/2310.04687) | [document](https://arxiv.org/abs/2310.04687) -->

|

| 22 |

+

|

| 23 |

+

<!-- #region -->

|

| 24 |

+

<!-- <p align="center">

|

| 25 |

+

<img src="effect_show.png">

|

| 26 |

+

</p> -->

|

| 27 |

+

<!-- #endregion -->

|

| 28 |

+

<!--

|

| 29 |

+

> Mist adds watermarks to images, making them unrecognizable and unusable for AI-for-Art models that try to mimic them. -->

|

| 30 |

+

|

| 31 |

+

<!-- #region -->

|

| 32 |

+

<p align="center">

|

| 33 |

+

<img src="assets/user_2.jpg">

|

| 34 |

+

</p>

|

| 35 |

+

<!-- <p align="center">

|

| 36 |

+

<img src="user_case_2.png">

|

| 37 |

+

</p> -->

|

| 38 |

+

<!-- #endregion -->

|

| 39 |

+

|

| 40 |

+

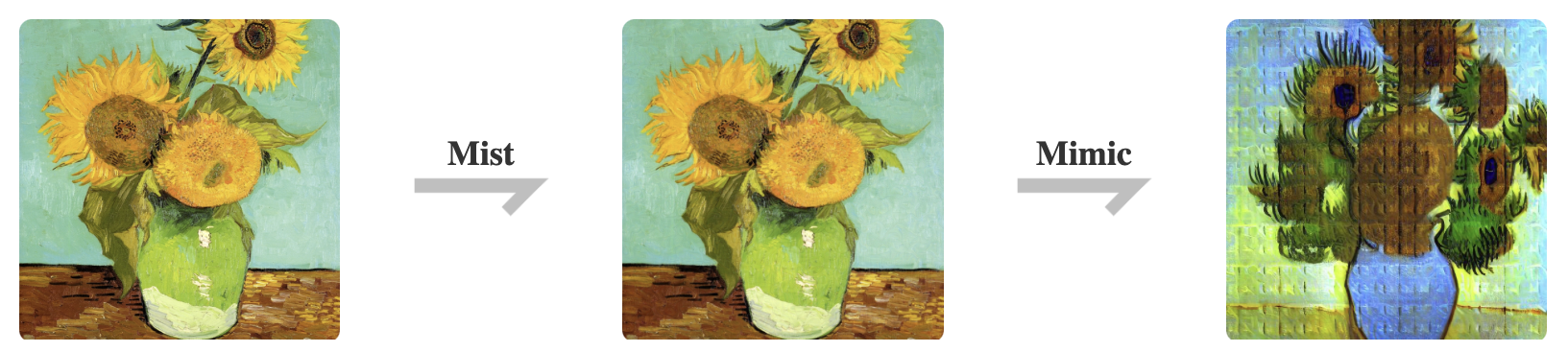

> Mist's effects in user cases. **The first row:** LoRA generation from source images.

|

| 41 |

+

**The second row:** LoRA generation from Mist-treated samples. Mist V2 significantly disrupts the generated output, effectively protecting artists' images. The images used are from anonymous artists. All rights reserved.

|

| 42 |

+

<!-- #region -->

|

| 43 |

+

<!-- <p align="center">

|

| 44 |

+

<img src="robustness.png">

|

| 45 |

+

</p> -->

|

| 46 |

+

<!-- #endregion -->

|

| 47 |

+

|

| 48 |

+

<!-- > Robustness of Mist against image preprocessing. -->

|

| 49 |

+

|

| 50 |

+

<!-- ## News

|

| 51 |

+

|

| 52 |

+

**2022/12/11**: Mist V2 released. -->

|

| 53 |

+

|

| 54 |

+

## Main Features

|

| 55 |

+

- Enhanced protection against AI-for-Art applications like LoRA and SDEdit.

|

| 56 |

+

- Imperceptible noise.

|

| 57 |

+

- 3-5 minutes processing with only 6GB of GPU memory in most cases. CPU processing supported.

|

| 58 |

+

- Resilience against denoising methods.

|

| 59 |

+

|

| 60 |

+

|

| 61 |

+

## About Mist

|

| 62 |

+

Mist is a powerful image preprocessing tool designed to protect the style and content of

|

| 63 |

+

images from being mimicked by state-of-the-art AI-for-Art applications. By adding watermarks to the images, Mist renders them unrecognizable and inimitable for the

|

| 64 |

+

models employed by AI-for-Art applications. Attempts by AI-for-Art applications to mimic these Misted images

|

| 65 |

+

will be ineffective, and the output image of such mimicry will be scrambled and unusable as artwork.

|

| 66 |

+

|

| 67 |

+

|

| 68 |

+

<p align="center">

|

| 69 |

+

<img src="assets/effect_show.png">

|

| 70 |

+

</p>

|

| 71 |

+

|

| 72 |

+

Mist V2 is more effective against a wider range of AI-for-Art applications, particularly LoRA. It achieves robust defense with even more discreet watermarks than [Mist V1](https://github.com/mist-project/mist). Additionally, Mist V2 supports CPU processing and can run on GPUs with as little as 6GB of memory in most cases.

|

| 73 |

+

|

| 74 |

+

|

| 75 |

+

<!-- For more details, refer to our [documentation](https://arxiv.org/abs/2310.04687). -->

|

| 76 |

+

|

| 77 |

+

|

| 78 |

+

|

| 79 |

+

|

| 80 |

+

|

| 81 |

+

|

| 82 |

+

|

| 83 |

+

## Quick Start

|

| 84 |

+

|

| 85 |

+

### Environment

|

| 86 |

+

|

| 87 |

+

**Preliminaries:** To run this repository, please have [Anaconda](https://pytorch.org/) installed on your workstation. The GPU version of Mist requires an NVIDIA GPU with the [Ampere](https://en.wikipedia.org/wiki/Ampere_(microarchitecture)) or a more advanced architecture and more than 6GB of VRAM. You can also try the CPU version

|

| 88 |

+

at a moderate running speed.

|

| 89 |

+

|

| 90 |

+

Clone this repository to your local machine and enter the repository root:

|

| 91 |

+

|

| 92 |

+

```bash

|

| 93 |

+

git clone https://github.com/mist-project/mist-v2.git

|

| 94 |

+

cd mist-v2

|

| 95 |

+

```

|

| 96 |

+

|

| 97 |

+

Then, run the following commands in the root of the repository to install the environment:

|

| 98 |

+

|

| 99 |

+

```bash

|

| 100 |

+

conda create -n mist-v2 python=3.10

|

| 101 |

+

conda activate mist-v2

|

| 102 |

+

pip install -r requirements.txt

|

| 103 |

+

```

|

| 104 |

+

|

| 105 |

+

### Usage

|

| 106 |

+

|

| 107 |

+

Run Mist V2 in the default setup on GPU:

|

| 108 |

+

```bash

|

| 109 |

+

accelerate launch attacks/mist.py --cuda --low_vram_mode --instance_data_dir $INSTANCE_DIR --output_dir $OUTPUT_DIR --class_data_dir $CLASS_DATA_DIR --instance_prompt $PROMPT --class_prompt $CLASS_PROMPT --mixed_precision bf16

|

| 110 |

+

```

|

| 111 |

+

|

| 112 |

+

Run Mist V2 in the default setup on CPU:

|

| 113 |

+

```bash

|

| 114 |

+

accelerate launch attacks/mist.py --instance_data_dir $INSTANCE_DIR --output_dir $OUTPUT_DIR --class_data_dir $CLASS_DATA_DIR --instance_prompt $PROMPT --class_prompt $CLASS_PROMPT --mixed_precision bf16

|

| 115 |

+

```

|

| 116 |

+

|

| 117 |

+

The parameters are explained in the following table:

|

| 118 |

+

|

| 119 |

+

| Parameter | Explanation |

|

| 120 |

+

| --------------- | ------------------------------------------------------------------------------------------ |

|

| 121 |

+

| $INSTANCE_DIR | Directory of input clean images. The goal is to add adversarial noise to them. |

|

| 122 |

+

| $OUTPUT_DIR | Directory for output adversarial examples (misted images). |

|

| 123 |

+

| $CLASS_DATA_DIR | Directory for class data in the prior-preservation training of DreamBooth; must be empty. |

|

| 124 |

+

| $PROMPT | Prompt that describes the input clean images, used to perturb the images. |

|

| 125 |

+

| $CLASS_PROMPT | Prompt used to generate class data, recommended to be similar to $PROMPT. |
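The two commands and the parameter table above can be combined into a small helper. The following is a minimal sketch and not part of the repository: `build_mist_command` is a hypothetical name, and it uses only the flags that appear in the commands above. Building the command as a list keeps a multi-word prompt as a single argument, which an unquoted `$PROMPT` in a shell would not.

```python
import shlex


def build_mist_command(instance_dir, output_dir, class_data_dir,
                       instance_prompt, class_prompt, use_gpu=True):
    """Assemble the argument list for `accelerate launch attacks/mist.py`.

    Hypothetical helper: only flags shown in the README commands are used.
    """
    cmd = ["accelerate", "launch", "attacks/mist.py"]
    if use_gpu:
        # The GPU command in the README adds these two flags; the CPU one omits them.
        cmd += ["--cuda", "--low_vram_mode"]
    cmd += [
        "--instance_data_dir", instance_dir,
        "--output_dir", output_dir,
        "--class_data_dir", class_data_dir,
        "--instance_prompt", instance_prompt,
        "--class_prompt", class_prompt,
        "--mixed_precision", "bf16",
    ]
    return cmd


if __name__ == "__main__":
    example = build_mist_command(
        "data/training",
        "output/",
        "data/class",
        "a photo of a misted person, high quality, masterpiece",
        "a photo of a person, high quality, masterpiece",
    )
    # shlex.join quotes the prompts so the printed line can be pasted into a shell.
    print(shlex.join(example))
```

The returned list can also be handed directly to `subprocess.run(cmd, check=True)` instead of being printed.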

|

| 126 |

+

|

| 127 |

+

Here is an example command to run Mist V2 on GPU:

|

| 128 |

+

|

| 129 |

+

```bash

|

| 130 |

+

accelerate launch attacks/mist.py --cuda --low_vram_mode --instance_data_dir data/training --output_dir output/ --class_data_dir data/class --instance_prompt "a photo of a misted person, high quality, masterpiece" --class_prompt "a photo of a person, high quality, masterpiece" --mixed_precision bf16

|

| 131 |

+

```

|

| 132 |

+

|

| 133 |

+

We also provide a WebUI built with [Gradio](https://www.gradio.app/). To launch the WebUI, run:

|

| 134 |

+

|

| 135 |

+

```bash

|

| 136 |

+

python mist-webui.py

|

| 137 |

+

```

|

| 138 |

+

|

| 139 |

+

### Evaluation

|

| 140 |

+

|

| 141 |

+

We provide a simple pipeline to evaluate the output adversarial examples (only for GPU users).

|

| 142 |

+

Basically, this pipeline trains a LoRA on the adversarial examples and samples images with the LoRA.

|

| 143 |

+

Note that our adversarial examples may induce LoRA to output images with NSFW content

|

| 144 |

+

(for example, chaotic textures). As stated, this is intended to prevent LoRA training on unauthorized image data. To evaluate the effectiveness of our method, we disable the safety checker in the LoRA sampling script. The instructions for running the pipeline follow.

|

| 145 |

+

|

| 146 |

+

First, train a LoRA on the output adversarial examples.

|

| 147 |

+

|

| 148 |

+

```bash

|

| 149 |

+

accelerate launch eval/train_dreambooth_lora_15.py --instance_data_dir=$LORA_INPUT_DIR --output_dir=$LORA_OUTPUT_DIR --class_data_dir=$LORA_CLASS_DIR --instance_prompt $LORA_PROMPT --class_prompt $LORA_CLASS_PROMPT --resolution=512 --train_batch_size=1 --learning_rate=1e-4 --scale_lr --max_train_steps=2000

|

| 150 |

+

```

|

| 151 |

+

|

| 152 |

+

The parameters are explained in the following table:

|

| 153 |

+

|

| 154 |

+

|

| 155 |

+

| Parameter | Explanation |

|

| 156 |

+

| ------------------ | ---------------------------------------------------------------------------------------------------------- |

|

| 157 |

+

| $LORA_INPUT_DIR | Directory of training data (adversarial examples), the same as $OUTPUT_DIR in the previous table. |

|

| 158 |

+

| $LORA_OUTPUT_DIR | Directory to store the trained LoRA. |

|

| 159 |

+

| $LORA_CLASS_DIR | Directory for class data in the prior-preservation training of DreamBooth; must be empty. |

|

| 160 |

+

| $LORA_PROMPT | Prompt that describes the training data, used to train the LoRA. |

|

| 161 |

+

| $LORA_CLASS_PROMPT | Prompt used to generate class data, recommended to be related to $LORA_PROMPT. |
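Before launching the LoRA training, it may help to check the two directory requirements from the table: the input directory should contain the adversarial examples, and the class directory is required to be empty. The following is a minimal sketch under stated assumptions: `check_eval_dirs` is a hypothetical helper that is not part of the repository, and the set of image suffixes is an assumption.

```python
from pathlib import Path

# Assumption: the adversarial examples are stored with one of these suffixes.
IMAGE_SUFFIXES = {".png", ".jpg", ".jpeg"}


def check_eval_dirs(lora_input_dir, lora_class_dir):
    """Return a list of problems found; an empty list means both checks passed."""
    problems = []
    input_dir = Path(lora_input_dir)
    class_dir = Path(lora_class_dir)

    # The training data directory must exist and contain at least one image.
    images = []
    if input_dir.is_dir():
        images = [p for p in input_dir.iterdir()
                  if p.is_file() and p.suffix.lower() in IMAGE_SUFFIXES]
    if not images:
        problems.append(f"no images found in {input_dir}")

    # The class data directory is required to be empty (a missing one also passes).
    if class_dir.is_dir() and any(class_dir.iterdir()):
        problems.append(f"class data directory {class_dir} is not empty")

    return problems


if __name__ == "__main__":
    for problem in check_eval_dirs("output/mist", "data/class"):
        print("warning:", problem)
```

Returning a list of messages instead of raising keeps the helper usable both in scripts and in a notebook cell.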

|

| 162 |

+

|

| 163 |

+

|

| 164 |

+

Next, open `eval/sample_lora_15.ipynb` and run the first block. After that, set the variable `LORA_OUTPUT_DIR` to the `$LORA_OUTPUT_DIR` used when training the LoRA.

|

| 165 |

+

|

| 166 |

+

```Python

|

| 167 |

+

from lora_diffusion import tune_lora_scale, patch_pipe

|

| 168 |

+

torch.manual_seed(time.time())

|

| 169 |

+

|

| 170 |

+

# The directory of LoRA

|

| 171 |

+

LORA_OUTPUT_DIR = [The value of $LORA_OUTPUT_DIR]

|

| 172 |

+

...

|

| 173 |

+

```

|

| 174 |

+

|

| 175 |

+

Finally, run the second block to see the output and evaluate the performance of Mist.

|

| 176 |

+

|

| 177 |

+

|

| 178 |

+

## A Glimpse of the Methodology

|

| 179 |

+

|

| 180 |

+

Mist V2 works by adversarially attacking generative diffusion models. The attack is an optimization of the following objective:

|

| 181 |

+

|

| 182 |

+

$$ \min_{x'} \mathbb{E}_{(z_0', \epsilon, t)} \Vert \epsilon_\theta(z'_t(z'_0,\epsilon),t)-z_0^T\Vert^2_2, \quad \text{s.t. } \Vert x'-x\Vert\leq\zeta$$

|

| 183 |

+

|

| 184 |

+

The notation is explained in the following table.

|

| 185 |

+

|

| 186 |

+

| Variable | Explanation |

|

| 187 |

+

| ----------------- | ---------------------------------------------------------------- |

|

| 188 |

+

| $x$ / $x'$ | The clean image / The adversarial example |

|

| 189 |

+

| $t$ | Time step in the diffusion model. |

|

| 190 |

+

| $z'_0$ | The latent variable of $x'$ in the 0th time step |

|

| 191 |

+

| $\epsilon$ | A standard Gaussian noise |

|

| 192 |

+

| $z_0^T$ | The latent variable of a target image $x^T$ in the 0th time step |

|

| 193 |

+

| $\epsilon_\theta$ | The noise predictor (U-Net) in the diffusion model |

|

| 194 |

+

| $\zeta$ | The budget of adversarial noise |

|

| 195 |

+

|

| 196 |

+

|

| 197 |

+

Intuitively, we find that pushing the output of the U-Net in the diffusion model to the 0th timestep

|

| 198 |

+

latent variable of a target image can effectively confuse the diffusion model. This is the intuition behind the

|

| 199 |

+

aforementioned objective of Mist V2.
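The constrained objective can be illustrated with a toy example. The following is a minimal sketch under loudly stated assumptions, not the implementation in `attacks/mist.py`: a function that doubles each coordinate stands in for the noise predictor $\epsilon_\theta$, the budget is taken as an L-infinity ball, and the optimizer is a signed-gradient step followed by projection. None of these choices are stated in this README, and the expectation over $\epsilon$ and $t$ is omitted.

```python
def sign(value):
    """Return -1, 0, or 1 according to the sign of value."""
    return (value > 0) - (value < 0)


def toy_predictor(latent):
    """Stand-in for the noise predictor: doubles each coordinate (assumption)."""
    return [2.0 * v for v in latent]


def target_loss(latent, target):
    """Squared L2 distance between the predictor output and the target."""
    return sum((p - t) ** 2 for p, t in zip(toy_predictor(latent), target))


def target_loss_grad(latent, target):
    """Analytic gradient of target_loss with respect to latent."""
    return [4.0 * (2.0 * v - t) for v, t in zip(latent, target)]


def perturb_toward_target(clean, target, zeta, step, iters):
    """Minimize target_loss while staying within zeta of clean (L-infinity)."""
    adv = list(clean)
    for _ in range(iters):
        grad = target_loss_grad(adv, target)
        # Signed descent step: move each coordinate against its gradient sign.
        adv = [a - step * sign(g) for a, g in zip(adv, grad)]
        # Projection: clip each coordinate back into [clean - zeta, clean + zeta].
        adv = [min(max(a, c - zeta), c + zeta) for a, c in zip(adv, clean)]
    return adv


if __name__ == "__main__":
    clean = [0.0, 0.0]
    target = [1.0, -1.0]
    adv = perturb_toward_target(clean, target, zeta=0.1, step=0.02, iters=20)
    print("loss before:", target_loss(clean, target))
    print("loss after: ", target_loss(adv, target))
```

The projection step is what enforces the noise budget $\zeta$: however many descent steps are taken, the perturbed input never moves further than $\zeta$ from the clean one in any coordinate.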

|

| 200 |

+

|

| 201 |

+

Our paper is still in progress; in it, we are trying to reveal the mechanism behind our method. In the meantime, you can access [Arxiv]() to view the first draft of our paper.

|

| 202 |

+

|

| 203 |

+

## License

|

| 204 |

+

|

| 205 |

+

This project is licensed under the [GPL-3.0 license](https://github.com/mist-project/mist/blob/main/LICENSE).

|

| 206 |

+

|

| 207 |

+

|

| 208 |

+

## Citation

|

| 209 |

+

If you find our work valuable and utilize it, we kindly request that you cite our paper.

|

| 210 |

+

|

| 211 |

+

```

|

| 212 |

+

@article{zheng2023understanding,

|

| 213 |

+

title={Understanding and Improving Adversarial Attacks on Latent Diffusion Model},

|

| 214 |

+

author={Zheng, Boyang and Liang, Chumeng and Wu, Xiaoyu and Liu, Yan},

|

| 215 |

+

journal={arXiv preprint arXiv:2310.04687},

|

| 216 |

+

year={2023}

|

| 217 |

+

}

|

| 218 |

+

```

|

| 219 |

+

|

| 220 |

+

Our repository also refers to the following papers:

|

| 221 |

+

|

| 222 |

+

```

|

| 223 |

+

@inproceedings{van2023anti,

|

| 224 |

+

title={Anti-DreamBooth: Protecting users from personalized text-to-image synthesis},

|

| 225 |

+

author={Van Le, Thanh and Phung, Hao and Nguyen, Thuan Hoang and Dao, Quan and Tran, Ngoc N and Tran, Anh},

|

| 226 |

+

booktitle={Proceedings of the IEEE/CVF International Conference on Computer Vision},

|

| 227 |

+

pages={2116--2127},

|

| 228 |

+

year={2023}

|

| 229 |

+

}

|

| 230 |

+

```

|

| 231 |

+

|

| 232 |

+

```

|

| 233 |

+

@article{liang2023mist,

|

| 234 |

+

title={Mist: Towards Improved Adversarial Examples for Diffusion Models},

|

| 235 |

+

author={Liang, Chumeng and Wu, Xiaoyu},

|

| 236 |

+

journal={arXiv preprint arXiv:2305.12683},

|

| 237 |

+

year={2023}

|

| 238 |

+

}

|

| 239 |

+

```

|

| 240 |

+

|

| 241 |

+

|

| 242 |

+

|

| 243 |

|

|

|

assets/MIST_V2_LOGO.png

ADDED

|

assets/effect_show.png

ADDED

|

assets/output_image.png

ADDED

|

assets/output_image_box.png

ADDED

|

assets/robustness.png

ADDED

|

Git LFS Details

|

assets/user_2.jpg

ADDED

|

Git LFS Details

|

assets/user_case_1.png

ADDED

|

Git LFS Details

|

assets/user_case_2.png

ADDED

|

Git LFS Details

|

attacks/mist.py

ADDED

|

@@ -0,0 +1,1156 @@

|

| 1 |

+

import argparse

|

| 2 |

+

import copy

|

| 3 |

+

import hashlib

|

| 4 |

+

import itertools

|

| 5 |

+

import logging

|

| 6 |

+

import os

|

| 7 |

+

import sys

|

| 8 |

+

import gc

|

| 9 |

+

from pathlib import Path

|

| 10 |

+

from colorama import Fore, Style, init,Back

|

| 11 |

+

import random, time

|

| 12 |

+

'''some system level settings'''

|

| 13 |

+

init(autoreset=True)

|

| 14 |

+

sys.path.insert(0, sys.path[0]+"/../")

|

| 15 |

+

import lpips

|

| 16 |

+

|

| 17 |

+

import datasets

|

| 18 |

+

import diffusers

|

| 19 |

+

import numpy as np

|

| 20 |

+

import torch

|

| 21 |

+

import torch.nn.functional as F

|

| 22 |

+

import torch.utils.checkpoint

|

| 23 |

+

import transformers

|

| 24 |

+

from accelerate import Accelerator

|

| 25 |

+

from accelerate.logging import get_logger

|

| 26 |

+

from accelerate.utils import set_seed

|

| 27 |

+

from diffusers import AutoencoderKL, DDPMScheduler, DiffusionPipeline, UNet2DConditionModel,DDIMScheduler

|

| 28 |

+

from diffusers.utils.import_utils import is_xformers_available

|

| 29 |

+

from PIL import Image

|

| 30 |

+

from torch.utils.data import Dataset

|

| 31 |

+

from torchvision import transforms

|

| 32 |

+

from tqdm.auto import tqdm

|

| 33 |

+

from transformers import AutoTokenizer, PretrainedConfig

|

| 34 |

+

from torch import autograd

|

| 35 |

+

from typing import Optional, Tuple

|

| 36 |

+

import pynvml

|

| 37 |

+

# from utils import print_tensor

|

| 38 |

+

|

| 39 |

+

from lora_diffusion import (

|

| 40 |

+

extract_lora_ups_down,

|

| 41 |

+

inject_trainable_lora,

|

| 42 |

+

)

|

| 43 |

+

from lora_diffusion.xformers_utils import set_use_memory_efficient_attention_xformers

|

| 44 |

+

from attacks.utils import LatentAttack

|

| 45 |

+

|

| 46 |

+

logger = get_logger(__name__)

|

| 47 |

+

|

| 48 |

+

def parse_args(input_args=None):

|

| 49 |

+

parser = argparse.ArgumentParser(description="Simple example of a training script.")

|

| 50 |

+

parser.add_argument(

|

| 51 |

+

"--cuda",

|

| 52 |

+

action="store_true",

|

| 53 |

+

help="Use gpu for attack",

|

| 54 |

+

)

|

| 55 |

+

parser.add_argument(

|

| 56 |

+

"--pretrained_model_name_or_path",

|

| 57 |

+

"-p",

|

| 58 |

+

type=str,

|

| 59 |

+

default="./stable-diffusion/stable-diffusion-1-5",

|

| 60 |

+

required=False,

|

| 61 |

+

help="Path to pretrained model or model identifier from huggingface.co/models.",

|

| 62 |

+

)

|

| 63 |

+

parser.add_argument(

|

| 64 |

+

"--revision",

|

| 65 |

+

type=str,

|

| 66 |

+

default=None,

|

| 67 |

+

required=False,

|

| 68 |

+

help=(

|

| 69 |

+

"Revision of pretrained model identifier from huggingface.co/models. Trainable model components should be"

|

| 70 |

+

" float32 precision."

|

| 71 |

+

),

|

| 72 |

+

)

|

| 73 |

+

parser.add_argument(

|

| 74 |

+

"--tokenizer_name",

|

| 75 |

+

type=str,

|

| 76 |

+

default=None,

|

| 77 |

+

help="Pretrained tokenizer name or path if not the same as model_name",

|

| 78 |

+

)

|

| 79 |

+

parser.add_argument(

|

| 80 |

+

"--instance_data_dir",

|

| 81 |

+

type=str,

|

| 82 |

+

default="",

|

| 83 |

+

required=False,

|

| 84 |

+

help="A folder containing the images to add adversarial noise",

|

| 85 |

+

)

|

| 86 |

+

parser.add_argument(

|

| 87 |

+

"--class_data_dir",

|

| 88 |

+

type=str,

|

| 89 |

+

default="",

|

| 90 |

+

required=False,

|

| 91 |

+

help="A folder containing the training data of class images.",

|

| 92 |

+

)

|

| 93 |

+

parser.add_argument(

|

| 94 |

+

"--instance_prompt",

|

| 95 |

+

type=str,

|

| 96 |

+

default="a picture",

|

| 97 |

+

required=False,

|

| 98 |

+

help="The prompt with identifier specifying the instance",

|

| 99 |

+

)

|

| 100 |

+

parser.add_argument(

|

| 101 |

+

"--class_prompt",

|

| 102 |

+

type=str,

|

| 103 |

+

default="a picture",

|

| 104 |

+

help="The prompt to specify images in the same class as provided instance images.",

|

| 105 |

+

)

|

| 106 |

+

parser.add_argument(

|

| 107 |

+

"--with_prior_preservation",

|

| 108 |

+

default=True,

|

| 109 |

+

help="Flag to add prior preservation loss.",

|

| 110 |

+

)

|

| 111 |

+

parser.add_argument(

|

| 112 |

+

"--prior_loss_weight",

|

| 113 |

+

type=float,

|

| 114 |

+

default=0.1,

|

| 115 |

+

help="The weight of prior preservation loss.",

|

| 116 |

+

)

|

| 117 |

+

parser.add_argument(

|

| 118 |

+

"--num_class_images",

|

| 119 |

+

type=int,

|

| 120 |

+

default=50,

|

| 121 |

+

help=(

|

| 122 |

+

"Minimal class images for prior preservation loss. If there are not enough images already present in"

|

| 123 |

+

" class_data_dir, additional images will be sampled with class_prompt."

|

| 124 |

+

),

|

| 125 |

+

)

|

| 126 |

+

parser.add_argument(

|

| 127 |

+

"--output_dir",

|

| 128 |

+

type=str,

|

| 129 |

+

default="",

|

| 130 |

+

help="The output directory where the perturbed data is stored",

|

| 131 |

+

)

|

| 132 |

+

parser.add_argument("--seed", type=int, default=None, help="A seed for reproducible training.")

|

| 133 |

+

parser.add_argument(

|

| 134 |

+

"--resolution",

|

| 135 |

+

type=int,

|

| 136 |

+

default=512,

|

| 137 |

+

help=(

|

| 138 |

+

"The resolution for input images, all the images in the train/validation dataset will be resized to this"

|

| 139 |

+

" resolution"

|

| 140 |

+

),

|

| 141 |

+

)

|

| 142 |

+

parser.add_argument(

|

| 143 |

+

"--center_crop",

|

| 144 |

+

default=True,

|

| 145 |

+

help=(

|

| 146 |

+

"Whether to center crop the input images to the resolution. If not set, the images will be randomly"

|

| 147 |

+

" cropped. The images will be resized to the resolution first before cropping."

|

| 148 |

+

),

|

| 149 |

+

)

|

| 150 |

+

parser.add_argument(

|

| 151 |

+

"--train_text_encoder",

|

| 152 |

+

action="store_false",

|

| 153 |

+

help="Whether to train the text encoder. Enabled by default; because the action is store_false, passing this flag disables it. When enabled, the text encoder should be float32 precision.",

|

| 154 |

+

)

|

| 155 |

+

parser.add_argument(

|

| 156 |

+

"--train_batch_size",

|

| 157 |

+

type=int,

|

| 158 |

+

default=1,

|

| 159 |

+

help="Batch size (per device) for the training dataloader.",

|

| 160 |

+

)

|

| 161 |

+

parser.add_argument(

|

| 162 |

+

"--sample_batch_size",

|

| 163 |

+

type=int,

|

| 164 |

+

default=1,

|

| 165 |

+

help="Batch size (per device) for sampling images.",

|

| 166 |

+

)

|

| 167 |

+

parser.add_argument(

|

| 168 |

+

"--max_train_steps",

|

| 169 |

+

type=int,

|

| 170 |

+

default=5,

|

| 171 |

+

help="Total number of training steps to perform.",

|

| 172 |

+

)

|

| 173 |

+

parser.add_argument(

|

| 174 |

+

"--max_f_train_steps",

|

| 175 |

+

type=int,

|

| 176 |

+

default=10,

|

| 177 |

+

help="Total number of sub-steps to train surrogate model.",

|

| 178 |

+

)

|

| 179 |

+

parser.add_argument(

|

| 180 |

+

"--max_adv_train_steps",

|

| 181 |

+

type=int,

|

| 182 |

+

default=30,

|

| 183 |

+

help="Total number of sub-steps to train adversarial noise.",

|

| 184 |

+

)

|

| 185 |

+

parser.add_argument(

|

| 186 |

+

"--gradient_accumulation_steps",

|

| 187 |

+

type=int,

|

| 188 |

+

default=1,

|

| 189 |

+

help="Number of update steps to accumulate before performing a backward/update pass.",

|

| 190 |

+

)

|

| 191 |

+

parser.add_argument(

|

| 192 |

+

"--checkpointing_iterations",

|

| 193 |

+

type=int,

|

| 194 |

+

default=5,

|

| 195 |

+

help=("Save a checkpoint of the training state every X iterations."),

|

| 196 |

+

)

|

| 197 |

+

|

| 198 |

+

parser.add_argument(

|

| 199 |

+

"--logging_dir",

|

| 200 |

+

type=str,

|

| 201 |

+

default="logs",

|

| 202 |

+

help=(

|

| 203 |

+

"[TensorBoard](https://www.tensorflow.org/tensorboard) log directory. Will default to"

|

| 204 |

+

" *output_dir/runs/**CURRENT_DATETIME_HOSTNAME***."

|

| 205 |

+

),

|

| 206 |

+

)

|

| 207 |

+

parser.add_argument(

|

| 208 |

+

"--allow_tf32",

|

| 209 |

+

action="store_true",

|

| 210 |

+

help=(

|

| 211 |

+

"Whether or not to allow TF32 on Ampere GPUs. Can be used to speed up training. For more information, see"

|

| 212 |

+

" https://pytorch.org/docs/stable/notes/cuda.html#tensorfloat-32-tf32-on-ampere-devices"

|

| 213 |

+

),

|

| 214 |

+

)

|

| 215 |

+

parser.add_argument(

|

| 216 |

+

"--report_to",

|

| 217 |

+

type=str,

|

| 218 |

+

default="tensorboard",

|

| 219 |

+

help=(

|

| 220 |

+

'The integration to report the results and logs to. Supported platforms are `"tensorboard"`'

|

| 221 |

+

' (default), `"wandb"` and `"comet_ml"`. Use `"all"` to report to all integrations.'

|

| 222 |

+

),

|

| 223 |

+

)

|

| 224 |

+

parser.add_argument(

|

| 225 |

+

"--mixed_precision",

|

| 226 |

+

type=str,

|

| 227 |

+

default="bf16",

|

| 228 |

+

choices=["no", "fp16", "bf16"],

|

| 229 |

+

help=(

|

| 230 |

+

"Whether to use mixed precision. Choose between fp16 and bf16 (bfloat16). Bf16 requires PyTorch >="

|

| 231 |

+

" 1.10 and an Nvidia Ampere GPU. Defaults to the value of accelerate config of the current system or the"

|

| 232 |

+

" flag passed with the `accelerate.launch` command. Use this argument to override the accelerate config."

|

| 233 |

+

),

|

| 234 |

+

)

|

| 235 |

+

parser.add_argument(

|

| 236 |

+

"--low_vram_mode",

|

| 237 |

+

action="store_false",

|

| 238 |

+

help="Whether or not to use low VRAM mode. Enabled by default; because the action is store_false, passing this flag disables it.",

|

| 239 |

+

)
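Several flags above rely on argparse defaults that are easy to misread. The sketch below is a minimal standalone reproduction, not part of the script: it reuses two argument names from the parser above and assumes nothing else about it. It shows that a `store_false` flag is `True` until it is passed, and that an argument declared with only `default=True` keeps any supplied value as a string.

```python
import argparse


def build_parser():
    """Tiny parser mirroring two declarations from the script above."""
    parser = argparse.ArgumentParser()
    # store_false: the attribute is True unless the flag appears on the command line.
    parser.add_argument("--low_vram_mode", action="store_false")
    # No action and no type: a supplied value is stored as a plain string.
    parser.add_argument("--with_prior_preservation", default=True)
    return parser


parser = build_parser()
defaults = parser.parse_args([])
flagged = parser.parse_args(
    ["--low_vram_mode", "--with_prior_preservation", "False"]
)

print(defaults.low_vram_mode)                 # True
print(flagged.low_vram_mode)                  # False
print(repr(flagged.with_prior_preservation))  # 'False'
print(bool(flagged.with_prior_preservation))  # True, a non-empty string is truthy
```

If a real on/off switch is wanted for such an argument, `action="store_true"` or `argparse.BooleanOptionalAction` (Python 3.9+) are the usual choices; whether the script intends that is not visible from this section.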

|

| 240 |

+

parser.add_argument(

|

| 241 |

+

"--pgd_alpha",

|

| 242 |

+

type=float,

|

| 243 |

+

default=5e-3,

|

| 244 |

+

help="The step size for pgd.",

|

| 245 |

+

)

|

| 246 |

+

parser.add_argument(

|

| 247 |

+

"--pgd_eps",

|

| 248 |

+

type=float,

|

| 249 |

+

default=float(8.0/255.0),

|

| 250 |

+

help="The noise budget for pgd.",

|

| 251 |

+

)
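For orientation, `--pgd_alpha` and `--pgd_eps` are the usual step size and noise budget of projected gradient descent. The sketch below shows one generic L-infinity PGD update in plain Python, using the two default values above. It is an illustration under stated assumptions, not the script's attack loop, which is not shown in this section: `pgd_step` is a hypothetical helper, the gradient is supplied by hand, and the update is written as ascent on some loss.

```python
def pgd_step(x_adv, x_orig, grad, alpha=5e-3, eps=8.0 / 255.0):
    """One signed-gradient step, projected onto the L-inf ball around x_orig.

    x_adv, x_orig and grad are flat lists of floats of equal length.
    (Hypothetical helper for illustration only.)
    """
    out = []
    for xa, xo, g in zip(x_adv, x_orig, grad):
        sign = (g > 0) - (g < 0)           # sign of the gradient: -1, 0 or 1
        stepped = xa + alpha * sign        # move by the step size alpha
        # Projection: keep the perturbation within [-eps, eps] of the original.
        stepped = min(max(stepped, xo - eps), xo + eps)
        out.append(stepped)
    return out


x_orig = [0.0, 0.5]
x_adv = list(x_orig)
grad = [1.0, -2.0]                         # made-up gradient, constant for the demo
for _ in range(10):
    x_adv = pgd_step(x_adv, x_orig, grad)

# Ten steps of 5e-3 would move 0.05, so both entries end up clipped at eps.
max_shift = max(abs(a - o) for a, o in zip(x_adv, x_orig))
print(max_shift <= 8.0 / 255.0 + 1e-12)
```

With the defaults, the budget of 8/255 is reached after seven steps, so `--max_adv_train_steps` values above that mainly refine the direction of the perturbation rather than its size.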

|

| 252 |

+

parser.add_argument(

|

| 253 |

+

"--lpips_bound",

|

| 254 |

+

type=float,

|

| 255 |

+

default=0.1,

|

| 256 |

+

help="The bound on the LPIPS distance between the perturbed and original images.",

|

| 257 |

+

)

|

| 258 |

+

parser.add_argument(

|

| 259 |

+

"--lpips_weight",

|

| 260 |

+

type=float,

|

| 261 |

+

default=0.5,

|

| 262 |

+

help="The weight of the LPIPS loss term.",

|

| 263 |

+

)

|

| 264 |

+

parser.add_argument(

|

| 265 |

+

"--fused_weight",

|

| 266 |

+

type=float,

|

| 267 |

+

default=1e-5,

|

| 268 |

+

help="The decay of alpha and eps when applying pre_attack",

|

| 269 |

+

)

|

| 270 |

+

parser.add_argument(

|

| 271 |

+

"--target_image_path",

|

| 272 |

+

default="data/MIST.png",

|

| 273 |

+

help="target image for attacking",

|

| 274 |

+

)

|

| 275 |

+

|

| 276 |

+

parser.add_argument(

|

| 277 |

+

"--lora_rank",

|

| 278 |

+

type=int,

|

| 279 |

+

default=4,

|

| 280 |

+

help="Rank of LoRA approximation.",

|

| 281 |

+

)

|

| 282 |

+

parser.add_argument(

|

| 283 |

+

"--learning_rate",

|

| 284 |