update readme

- README.md +71 -8

- assets/1.png +0 -0

- assets/2.png +0 -0

- assets/3.png +0 -0

README.md

CHANGED

|

@@ -1054,22 +1054,85 @@ model-index:

|

|

| 1054 |

- type: f1

|

| 1055 |

value: 88.2026184757175

|

| 1056 |

---

|


| 1057 |

|

| 1058 |

-

|


| 1059 |

|

| 1060 |

**[2024-04-22]**

|

| 1061 |

|

| 1062 |

-

piccolo-large-zh-v2 currently ranks first on the C-MTEB leaderboard, about 1.9 points ahead of the previous best BERT model.

|

| 1063 |

-

|

| 1064 |

piccolo-large-zh-v2 currently ranks first on the C-MTEB list, leading the previous BERT model by about 1.9 points.

|

| 1065 |

|

| 1066 |

-

##

|


| 1067 |

|

| 1068 |

-

|


| 1069 |

|

| 1070 |

-

piccolo-large-zh-v2 is a Chinese embedding model developed by the general model group at SenseTime Research. This upgraded version of Piccolo aims to prioritize general downstream fine-tuning methods. We plan to release an updated technical report in the near future, and further technical details will be disclosed during the SenseTime Tech Day on April 23rd: https://www.sensetime.com/cn

|

| 1071 |

|

| 1072 |

## Usage

|

| 1073 |

-

|


| 1074 |

|

| 1075 |

-

|


| 1054 |

- type: f1

|

| 1055 |

value: 88.2026184757175

|

| 1056 |

---

|

| 1057 |

+

[EN](README.md) | [简体中文](README_zh.md)

|

| 1058 |

|

| 1059 |

+

**News**

|

| 1060 |

+

**[2024-05-14]**

|

| 1061 |

+

We have now released our model weights, training code, and tech report. Discussions are welcome.

|

| 1062 |

+

For training code, please refer to our [GitHub repository](https://github.com/hjq133/piccolo-embedding).

|

| 1063 |

+

For training details, please refer to our [tech-report](https://arxiv.org/abs/2405.06932)

|

| 1064 |

|

| 1065 |

**[2024-04-22]**

|

| 1066 |

|


| 1067 |

piccolo-large-zh-v2 currently ranks first on the C-MTEB list, leading the previous BERT model by about 1.9 points.

|

| 1068 |

|

| 1069 |

+

## Piccolo-large-zh-v2

|

| 1070 |

+

|

| 1071 |

+

piccolo-large-zh-v2 is a Chinese embedding model developed by the general model group from SenseTime Research. This upgraded version of Piccolo aims to prioritize general downstream fine-tuning methods. Piccolo2 primarily leverages an efficient multi-task hybrid loss training approach,

|

| 1072 |

+

effectively harnessing textual data and labels from diverse downstream

|

| 1073 |

+

tasks. In addition, Piccolo2 scales up the embedding dimension and uses

|

| 1074 |

+

MRL training to support more flexible vector dimensions.

|

| 1075 |

+

|

| 1076 |

+

## Model Highlights

|

| 1077 |

+

The main feature of Piccolo2 is that it uses a multi-task hybrid loss during training.

|

| 1078 |

+

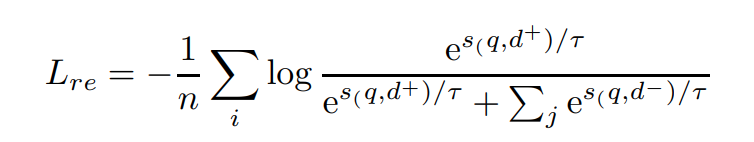

For retrieval/reranking tasks, we use the standard InfoNCE loss with in-batch negatives:

|

| 1079 |

+

<p align='left'>

|

| 1080 |

+

<img src='assets/1.png' width='400' height='80'>

|

| 1081 |

+

</p>
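
As a rough illustration of the loss above, the following NumPy sketch computes InfoNCE with in-batch negatives. It is not taken from the Piccolo2 training code: the function name `info_nce_in_batch`, the temperature of 0.05, and the toy inputs are assumptions made for this example.

```python
import numpy as np

def info_nce_in_batch(queries, positives, temperature=0.05):
    """InfoNCE where each query's negatives are the other rows' positives.

    queries, positives: arrays of shape (batch, dim); row i of `positives`
    is the matching document for row i of `queries`.
    The temperature value is an assumption, not a published setting.
    """
    # L2-normalize so dot products are cosine similarities.
    q = queries / np.linalg.norm(queries, axis=1, keepdims=True)
    p = positives / np.linalg.norm(positives, axis=1, keepdims=True)
    logits = (q @ p.T) / temperature  # shape (batch, batch)
    # Numerically stable log-softmax over each row.
    logits = logits - logits.max(axis=1, keepdims=True)
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    # The matching positive for query i sits on the diagonal.
    return float(-np.mean(np.diag(log_probs)))

# Toy check: perfectly aligned pairs versus deliberately misaligned pairs.
aligned = info_nce_in_batch(np.eye(3), np.eye(3))
misaligned = info_nce_in_batch(np.eye(3), np.roll(np.eye(3), 1, axis=0))
print(aligned, misaligned)
```

The aligned batch should produce a loss close to zero, while the misaligned batch is penalized heavily because every query is closer to another row's positive than to its own.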

|

| 1082 |

+

|

| 1083 |

+

For STS/pair classification tasks, we use the CoSENT loss, which has been shown to work better for data with fine-grained labels (e.g. score values):

|

| 1084 |

+

<p align='left'>

|

| 1085 |

+

<img src='assets/2.png' width='450' height='90'>

|

| 1086 |

+

</p>
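
The ranking idea behind this loss can be sketched in NumPy as follows. This is a minimal sketch of the commonly cited CoSENT formulation, not the Piccolo2 implementation: the function name `cosent_loss`, the scale factor of 20, and the toy inputs are assumptions.

```python
import numpy as np

def cosent_loss(emb_a, emb_b, labels, scale=20.0):
    """CoSENT-style ranking loss over a batch of sentence pairs.

    emb_a, emb_b: arrays of shape (batch, dim), one row per sentence pair.
    labels: similarity scores, higher means more similar.
    The scale value is an assumption, not a published setting.
    """
    labels = np.asarray(labels, dtype=float)
    a = emb_a / np.linalg.norm(emb_a, axis=1, keepdims=True)
    b = emb_b / np.linalg.norm(emb_b, axis=1, keepdims=True)
    cos = np.sum(a * b, axis=1) * scale  # one scaled cosine per pair
    # diff[i, j] = cos[j] - cos[i]
    diff = cos[None, :] - cos[:, None]
    # Keep only (i, j) where pair i is labelled as more similar than pair j;
    # the loss grows when pair j nevertheless has the higher cosine.
    mask = labels[:, None] > labels[None, :]
    terms = diff[mask]
    # log(1 + sum(exp(terms))), computed stably.
    return float(np.logaddexp.reduce(np.concatenate(([0.0], terms))))

emb_a = np.array([[1.0, 0.0], [1.0, 0.0]])
emb_b = np.array([[1.0, 0.0], [0.0, 1.0]])  # pair 0 identical, pair 1 orthogonal
consistent = cosent_loss(emb_a, emb_b, labels=[1.0, 0.0])
inverted = cosent_loss(emb_a, emb_b, labels=[0.0, 1.0])
print(consistent, inverted)
```

Because only the relative order of the labels matters, the same function accepts graded scores such as 0 to 5 as well as binary labels.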

|

| 1087 |

|

| 1088 |

+

For classification/clustering tasks, by treating text and its semantic labels as positive and negative pairs, we convert the dataset into triples and then optimize it with InfoNCE. However, it’s important to

|

| 1089 |

+

stress that in-batch negatives are no longer used due to the fact that

|

| 1090 |

+

they can easily lead to conflicting training targets:

|

| 1091 |

+

<p align='left'>

|

| 1092 |

+

<img src='assets/3.png' width='400' height='80'>

|

| 1093 |

+

</p>
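
A minimal sketch of this variant, assuming each text comes with one positive label embedding and a fixed set of explicit negative label embeddings, might look as follows. The function name `info_nce_explicit_negatives`, the temperature, and the toy inputs are hypothetical. One way in-batch negatives can conflict here: two texts in the same batch may share a label, so one text's positive would be used as the other's negative.

```python
import numpy as np

def info_nce_explicit_negatives(text, pos_label, neg_labels, temperature=0.05):
    """InfoNCE over (text, positive label, negative labels) triples.

    text:       (batch, dim) text embeddings
    pos_label:  (batch, dim) embedding of each text's own label
    neg_labels: (batch, n_neg, dim) embeddings of the other labels
    No in-batch negatives are used; the temperature is an assumption.
    """
    def unit(x):
        return x / np.linalg.norm(x, axis=-1, keepdims=True)

    t, p, n = unit(text), unit(pos_label), unit(neg_labels)
    pos_logit = np.sum(t * p, axis=-1, keepdims=True)  # (batch, 1)
    neg_logits = np.einsum("bd,bnd->bn", t, n)         # (batch, n_neg)
    logits = np.concatenate([pos_logit, neg_logits], axis=1) / temperature
    # Numerically stable log-softmax; the positive is always column 0.
    logits = logits - logits.max(axis=1, keepdims=True)
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return float(-np.mean(log_probs[:, 0]))

text = np.array([[1.0, 0.0]])
right_label = np.array([[1.0, 0.0]])
wrong_labels = np.array([[[0.0, 1.0]]])
print(info_nce_explicit_negatives(text, right_label, wrong_labels))
```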

|

| 1094 |

+

|

| 1095 |

+

## Experiments and Results

|

| 1096 |

+

Piccolo2 primarily focuses on the general downstream fine-tuning paradigm. Our open-source model is initialized from [stella-v3.5](https://huggingface.co/infgrad/stella-mrl-large-zh-v3.5-1792d) and was trained for about 2500 steps on 32 GPUs. For more implementation details, please refer to our [technical report](https://arxiv.org/abs/2405.06932).

|

| 1097 |

+

|

| 1098 |

+

| Model Name | Model Size (GB) | Dimension | Sequence Length | Classification (9) | Clustering (4) | Pair Classification (2) | Reranking (4) | Retrieval (8) | STS (8) | Average (35) |

|

| 1099 |

+

|:----:|:---:|:---:|:---:|:---:|:---:|:---:|:---:|:---:|:---:|:---:|

|

| 1100 |

+

| [**piccolo-large-zh-v2**](https://huggingface.co/sensenova/piccolo-large-zh-v2) | 1.21 | 1792 | 512 | 74.59 | 62.17 | 90.24 | 70 | 74.36 | 63.5 | 70.95 |

|

| 1101 |

+

| [gte-Qwen1.5-7B-instruct](https://huggingface.co/Alibaba-NLP/gte-Qwen1.5-7B-instruct) | 26.45 | 4096 | 32768 | 73.35 | 67.08 | 88.52 | 66.38 | 70.62 | 62.32 | 69.56 |

|

| 1102 |

+

| [acge-text-embedding](https://huggingface.co/aspire/acge_text_embedding) |1.21 | 1792 | 512 | 72.75 | 58.7 | 87.84 | 67.98 | 72.93 | 62.09 | 69.07 |

|

| 1103 |

|


| 1104 |

|

| 1105 |

## Usage

|

| 1106 |

+

The piccolo model can be used directly through the sentence-transformers package:

|

| 1107 |

+

```python

|

| 1108 |

+

# For s2s/s2p datasets, piccolo can be used as follows.

|

| 1109 |

+

from sklearn.preprocessing import normalize

|

| 1110 |

+

from sentence_transformers import SentenceTransformer

|

| 1111 |

+

sentences = ["数据1", "数据2"]

|

| 1112 |

+

matryoshka_dim = 1792  # supported: 256, 512, 768, 1024, 1280, 1536, 1792

|

| 1113 |

+

model = SentenceTransformer('sensenova/piccolo-large-zh-v2')

|

| 1114 |

+

embeddings_1 = model.encode(sentences, normalize_embeddings=False)

|

| 1115 |

+

embeddings_2 = model.encode(sentences, normalize_embeddings=False)

|

| 1116 |

+

embeddings_1 = normalize(embeddings_1[..., :matryoshka_dim], norm="l2", axis=1)

|

| 1117 |

+

embeddings_2 = normalize(embeddings_2[..., :matryoshka_dim], norm="l2", axis=1)

|

| 1118 |

+

similarity = embeddings_1 @ embeddings_2.T

|

| 1119 |

+

```
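
The truncate-then-normalize step used above for MRL dimensions can also be written with NumPy alone. The sketch below is illustrative: `truncate_and_normalize` and `SUPPORTED_DIMS` are hypothetical names, and random vectors stand in for real model output so that the snippet runs without downloading the weights.

```python
import numpy as np

# Dimensions listed as supported in the usage example above.
SUPPORTED_DIMS = (256, 512, 768, 1024, 1280, 1536, 1792)

def truncate_and_normalize(embeddings, dim):
    """Keep the first `dim` components of each vector, then L2-normalize."""
    if dim not in SUPPORTED_DIMS:
        raise ValueError(f"dim must be one of {SUPPORTED_DIMS}, got {dim}")
    truncated = np.asarray(embeddings, dtype=float)[..., :dim]
    norms = np.linalg.norm(truncated, axis=-1, keepdims=True)
    # Guard against division by zero for all-zero vectors.
    return truncated / np.clip(norms, 1e-12, None)

# Random stand-in for model.encode(sentences, normalize_embeddings=False).
rng = np.random.default_rng(0)
fake_embeddings = rng.normal(size=(2, 1792))
small = truncate_and_normalize(fake_embeddings, 256)
similarity = small @ small.T  # cosine similarities at 256 dimensions
print(small.shape, similarity.shape)
```

Normalizing after truncation matters: a prefix of a unit-length vector is generally not unit length, so the dot product would no longer be a cosine similarity.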

|

| 1120 |

+

|

| 1121 |

+

## 🤗 **Model List**

|

| 1122 |

+

| Model|Language|Description|prompt|

|

| 1123 |

+

|:-|:-:|:-:|:--:|

|

| 1124 |

+

| [sensenova/piccolo-large-zh-v2](https://huggingface.co/sensenova/piccolo-large-zh-v2) | Chinese | version2: finetuning with multi-task hybrid loss training | None |

|

| 1125 |

+

| [sensenova/piccolo-large-zh](https://huggingface.co/sensenova/piccolo-large-zh) | Chinese | version1: pretrained on 400 million Chinese text pairs | '查询'/'结果' |

|

| 1126 |

+

| [sensenova/piccolo-base-zh](https://huggingface.co/sensenova/piccolo-base-zh) | Chinese | version1: pretrained on 400 million Chinese text pairs | '查询'/'结果' |

|

| 1127 |

+

|

| 1128 |

|

| 1129 |

+

## Citation

|

| 1130 |

+

If you find our tech report, models, or code helpful, please cite our report or give us a star on GitHub or Hugging Face!

|

| 1131 |

+

```bibtex

|

| 1132 |

+

@misc{2405.06932,

|

| 1133 |

+

Author = {Junqin Huang and Zhongjie Hu and Zihao Jing and Mengya Gao and Yichao Wu},

|

| 1134 |

+

Title = {Piccolo2: General Text Embedding with Multi-task Hybrid Loss Training},

|

| 1135 |

+

Year = {2024},

|

| 1136 |

+

Eprint = {arXiv:2405.06932},

|

| 1137 |

+

}

|

| 1138 |

+

```

|

assets/1.png

ADDED

|

assets/2.png

ADDED

|

assets/3.png

ADDED

|