---
license: other
license_name: tongyi-qianwen
license_link: https://huggingface.co./Qwen/Qwen1.5-72B/blob/main/LICENSE
language:
- th
- en
pipeline_tag: text-generation
---

**Typhoon-1.5-72B-instruct: Thai Large Language Model (Instruct)**

**Typhoon-1.5-72B-instruct** is an *instruct* Thai 🇹🇭 large language model with 72 billion parameters, based on Qwen1.5-72B.

**A newer release, 1.5x 70B, is better suited to application use cases. It is available [here](https://huggingface.co./scb10x/llama-3-typhoon-v1.5x-70b-instruct).**

For the release post, please see our [blog](https://blog.opentyphoon.ai/typhoon-1-5-release-a9364cb8e8d7).

## **Model Description**

- **Model type**: A 72B instruct decoder-only model based on the Qwen1.5 architecture.

- **Requirement**: transformers 4.38.0 or newer.

- **Primary Language(s)**: Thai 🇹🇭 and English 🇬🇧

- **License**: [Qwen License](https://huggingface.co./Qwen/Qwen1.5-72B/raw/main/LICENSE)
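The version requirement above can be checked before loading the model. The following is a minimal sketch, not part of the official release: `release_tuple` and `meets_minimum` are hypothetical helper names, and the comparison looks only at the leading numeric components, ignoring pre-release suffixes.

```python
import re


def release_tuple(version):
    """Return the first three numeric components of a version string, zero-padded."""
    numbers = [int(n) for n in re.findall(r"\d+", version)[:3]]
    return tuple(numbers + [0] * (3 - len(numbers)))


def meets_minimum(installed, minimum="4.38.0"):
    """True if `installed` is at least `minimum` (numeric release parts only)."""
    return release_tuple(installed) >= release_tuple(minimum)


# Example version strings (illustrative values, not read from a real environment).
print(meets_minimum("4.40.1"))  # newer than the requirement
print(meets_minimum("4.37.2"))  # older than the requirement
```

In a real environment the installed string could come from `importlib.metadata.version("transformers")`. For strict handling of pre-releases, a dedicated version parser is the safer choice.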

## **Performance**

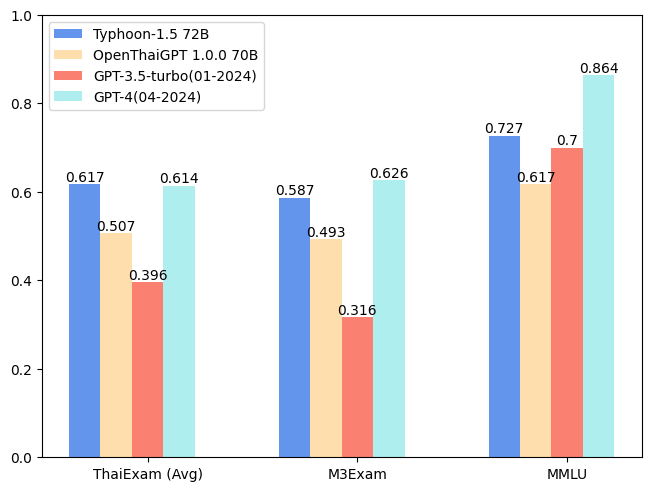

| Model | ONET | IC | TGAT | TPAT-1 | A-Level | Average (ThaiExam) | M3Exam | MMLU |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Typhoon-1.5 72B | 0.562 | **0.716** | **0.778** | 0.5 | 0.528 | **0.6168** | 0.587 | 0.7271 |
| OpenThaiGPT 1.0.0 70B | 0.447 | 0.492 | **0.778** | 0.5 | 0.319 | 0.5072 | 0.493 | 0.6167 |
| GPT-3.5-turbo(01-2024) | 0.358 | 0.279 | 0.678 | 0.345 | 0.318 | 0.3956 | 0.316 | 0.700\*\* |
| GPT-4(04-2024) | **0.589** | 0.594 | 0.756 | **0.517** | **0.616** | 0.6144 | **0.626** | **0.864**\*\* |

\*\* We report the MMLU score from the [GPT-4 Technical Report](https://arxiv.org/abs/2303.08774).
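The Average (ThaiExam) column appears to be the unweighted mean of the five exam columns (ONET, IC, TGAT, TPAT-1, A-Level). The short sketch below recomputes it from the table values as a sanity check. The dictionary and function names are illustrative, and the averaging rule is inferred from the numbers rather than stated by the authors.

```python
# Per-exam scores copied from the table: ONET, IC, TGAT, TPAT-1, A-Level.
thai_exam_scores = {
    "Typhoon-1.5 72B": [0.562, 0.716, 0.778, 0.5, 0.528],
    "OpenThaiGPT 1.0.0 70B": [0.447, 0.492, 0.778, 0.5, 0.319],
    "GPT-3.5-turbo(01-2024)": [0.358, 0.279, 0.678, 0.345, 0.318],
    "GPT-4(04-2024)": [0.589, 0.594, 0.756, 0.517, 0.616],
}


def thai_exam_average(scores):
    """Unweighted mean of the exam scores, rounded to four decimals."""
    return round(sum(scores) / len(scores), 4)


for model_name, scores in thai_exam_scores.items():
    print(f"{model_name}: {thai_exam_average(scores)}")
```

Under this assumption the recomputed means match the reported averages for all four models.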

## Usage Example

```python
from transformers import AutoTokenizer, AutoModelForCausalLM
import torch

model_id = "scb10x/typhoon-v1.5-72b-instruct"

tokenizer = AutoTokenizer.from_pretrained(model_id)
model = AutoModelForCausalLM.from_pretrained(
    model_id,
    torch_dtype=torch.bfloat16,
    device_map="auto",
)  # We do not recommend loading the model using 4-bit and 8-bit BNB as it may produce inaccurate results.

messages = [
    {"role": "system", "content": "You are a helpful assistant."},
    # Thai prompt: "Please give me a grilled chicken recipe."
    {"role": "user", "content": "ขอสูตรไก่ย่าง"},
]

# Render the conversation with the chat template and append the assistant prompt.
input_ids = tokenizer.apply_chat_template(
    messages,
    add_generation_prompt=True,
    return_tensors="pt",
).to(model.device)

outputs = model.generate(
    input_ids,
    max_new_tokens=1024,
    do_sample=True,
    temperature=0.6,
    top_p=0.9,
    repetition_penalty=1.15,
)

# Keep only the newly generated tokens (drop the prompt) before decoding.
response = outputs[0][input_ids.shape[-1]:]
print(tokenizer.decode(response, skip_special_tokens=True))
```
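Because the example loads 72 billion parameters in `bfloat16`, it can help to estimate the weight footprint before choosing hardware. The sketch below is a rough calculation only: it uses the nominal parameter count from this card, counts weights alone (no activations or KV cache), and `weight_memory_gib` is a hypothetical helper name.

```python
def weight_memory_gib(num_params, bytes_per_param=2):
    """Approximate memory for model weights in GiB (bfloat16 uses 2 bytes per parameter)."""
    return num_params * bytes_per_param / 2**30


# Nominal parameter count for a 72B model; the exact count may differ slightly.
nominal_params = 72e9
print(f"~{weight_memory_gib(nominal_params):.0f} GiB for weights in bfloat16")
```

That is roughly 134 GiB for the weights alone, which is why the example relies on `device_map="auto"` to spread the model across the available devices.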

## Chat Template

We use the ChatML chat template.

```jinja
{% for message in messages %}{{'<|im_start|>' + message['role'] + '\n' + message['content']}}{% if (loop.last and add_generation_prompt) or not loop.last %}{{ '<|im_end|>' + '\n'}}{% endif %}{% endfor %}
{% if add_generation_prompt and messages[-1]['role'] != 'assistant' %}{{ '<|im_start|>assistant\n' }}{% endif %}
```
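To make the template's behavior concrete, here is a hand-written Python mirror of its logic. It is an illustrative sketch, not the tokenizer's actual implementation: `render_chatml` is a hypothetical name, and any whitespace between the two template lines is ignored. In practice, `tokenizer.apply_chat_template` should be used.

```python
def render_chatml(messages, add_generation_prompt=True):
    """Render messages as a ChatML prompt string, following the template's branches."""
    parts = []
    last_index = len(messages) - 1
    for index, message in enumerate(messages):
        parts.append("<|im_start|>" + message["role"] + "\n" + message["content"])
        # Every turn is closed except the final one when no generation prompt is requested.
        if index != last_index or add_generation_prompt:
            parts.append("<|im_end|>\n")
    # Open an assistant turn unless the conversation already ends with one.
    if add_generation_prompt and messages and messages[-1]["role"] != "assistant":
        parts.append("<|im_start|>assistant\n")
    return "".join(parts)


example_messages = [
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": "Hello"},
]
print(render_chatml(example_messages))
```

With `add_generation_prompt=False`, the last message is left without a closing `<|im_end|>`, which lets the model continue a partially written turn.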

## **Intended Uses & Limitations**

This is an instruct model that is still under development. It incorporates some guardrails, but it may still produce answers that are inaccurate, biased, or otherwise objectionable in response to user prompts. We recommend that developers assess these risks in the context of their use case.

## **Follow us**

**https://twitter.com/opentyphoon**

## **Support / Ask any question**

**https://discord.gg/CqyBscMFpg**

## **SCB10X AI Team**

- Kunat Pipatanakul, Potsawee Manakul, Sittipong Sripaisarnmongkol, Natapong Nitarach, Pathomporn Chokchainant, Kasima Tharnpipitchai

- If you find Typhoon v1.5 useful for your work, please cite it using:

```
@article{pipatanakul2023typhoon,
    title={Typhoon: Thai Large Language Models},
    author={Kunat Pipatanakul and Phatrasek Jirabovonvisut and Potsawee Manakul and Sittipong Sripaisarnmongkol and Ruangsak Patomwong and Pathomporn Chokchainant and Kasima Tharnpipitchai},
    year={2023},
    journal={arXiv preprint arXiv:2312.13951},
    url={https://arxiv.org/abs/2312.13951}
}
```

## **Contact Us**

- General & Collaboration: **[[email protected]](mailto:[email protected])**, **[[email protected]](mailto:[email protected])**

- Technical: **[[email protected]](mailto:[email protected])**