---
base_model: cmarkea/bloomz-3b-dpo-chat
library_name: peft
license: apache-2.0
datasets:
- cmarkea/table-vqa
language:
- fr
- en
pipeline_tag: table-question-answering
---

## Model Description

**cmarkea/bloomz-3b-dpo-table-qa-latex** is a fine-tuned version of **[cmarkea/bloomz-3b-dpo-chat](https://huggingface.co/cmarkea/bloomz-3b-dpo-chat)**, specialized for table-based question answering (QA). It was trained on the **[table-vqa](https://huggingface.co/datasets/cmarkea/table-vqa)** dataset developed by Crédit Mutuel Arkéa and takes tables as input in their LaTeX source format.

The model supports both French and English and is intended for extracting and interpreting tabular data from documents. It was fine-tuned for 2 days on an A100 40GB GPU and runs in bfloat16 precision to reduce memory and compute requirements.
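As a rough illustration of why bfloat16 helps, the sketch below estimates the memory taken by the model weights alone at two precisions. It assumes about 3 billion parameters (a round figure taken from the "3b" in the model name, not an exact count) and ignores activations, the KV cache, and framework overhead.

```python
# Rough estimate of weight memory for a ~3B-parameter model.
# Assumption: 3e9 parameters (from the "3b" in the model name, not an exact count).
# Only the weights are counted; activations, KV cache and overhead are ignored.

BYTES_PER_PARAM = {
    "float32": 4,
    "bfloat16": 2,
}


def weight_memory_gb(n_params: float, dtype: str) -> float:
    """Return the weight footprint in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * BYTES_PER_PARAM[dtype] / 1e9


N_PARAMS = 3e9  # hypothetical round figure

for dtype in BYTES_PER_PARAM:
    print(f"{dtype}: ~{weight_memory_gb(N_PARAMS, dtype):.0f} GB")
```

Halving the bytes per parameter halves the weight footprint relative to float32, while bfloat16 keeps the same exponent range as float32.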

### Key Features

- **Domain:** Table-based question answering (QA), particularly extracting information from LaTeX-format tables.
- **Language Support:** French and English.
- **Model Type:** Causal (decoder-only) language model for text generation.
- **Precision:** bfloat16, for computational efficiency.
- **Training Duration:** 2 days on an A100 40GB GPU.
- **Fine-Tuning Method:** Full fine-tuning.

The model is suited to applications where tabular data must be queried and analyzed, such as financial reports, academic papers, and technical documentation.

## Usage

Here’s an example of how to use this model for table-based question answering:

```python
import torch
from transformers import pipeline

device = 0 if torch.cuda.is_available() else -1

# Use a raw string so LaTeX commands such as \begin, \times and the row
# terminator \\ reach the model unchanged (a plain string would turn
# \b and \t into escape characters).
table = r'''\begin{tabular}{|c|c|c|}
\hline
Model & MAE-{$TKE$}-low & MAE-{$TKE$}-high\\
\hline
U-FNET & 0.0048 & $1.09 \times 10^{-5}$\\
\hline
\end{tabular}'''
question = "What is the MAE-TKE-high value for the U-FNET model?"
prompt = table + '\n' + question

# Load the fine-tuned table-QA model (not the base chat model) in bfloat16.
model = pipeline(
    "text-generation",
    "cmarkea/bloomz-3b-dpo-table-qa-latex",
    device=device,
    torch_dtype=torch.bfloat16,
)
# Wrap the prompt in </s> ... <s> and return only the generated answer.
result = model(f"</s>{prompt}<s>", max_new_tokens=512, return_full_text=False)
print(result[0]["generated_text"])
```

The model expects tables in LaTeX source format, so convert your tables to LaTeX before querying it.
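If your tables are not already in LaTeX, one option is to generate the `tabular` source yourself. The sketch below uses two hypothetical helpers (`rows_to_latex_tabular` and `build_prompt` are illustrative names, not part of any library) that reproduce the layout of the example table in the usage snippet and wrap it in the same prompt format. Cells are inserted verbatim, so LaTeX special characters must be escaped beforehand.

```python
def rows_to_latex_tabular(header, rows):
    """Render a header row and data rows as a LaTeX tabular environment.

    Hypothetical helper: every column is centred ("c") and separated by
    vertical rules, with \\hline after each row, matching the usage example.
    Cells are inserted verbatim, so escape LaTeX special characters first.
    """
    n_cols = len(header)
    col_spec = "|" + "|".join("c" * n_cols) + "|"
    lines = [f"\\begin{{tabular}}{{{col_spec}}}", "\\hline"]
    for row in [header, *rows]:
        if len(row) != n_cols:
            raise ValueError(f"expected {n_cols} cells, got {len(row)}")
        # Join cells with " & " and end the row with the LaTeX line break.
        lines.append(" & ".join(str(cell) for cell in row) + "\\\\")
        lines.append("\\hline")
    lines.append("\\end{tabular}")
    return "\n".join(lines)


def build_prompt(table_latex, question):
    """Same layout as the usage example: table, newline, question, in </s> ... <s>."""
    return f"</s>{table_latex}\n{question}<s>"


table = rows_to_latex_tabular(
    ["Model", "MAE-{$TKE$}-low", "MAE-{$TKE$}-high"],
    [["U-FNET", "0.0048", r"$1.09 \times 10^{-5}$"]],
)
prompt = build_prompt(table, "What is the MAE-TKE-high value for the U-FNET model?")
print(prompt)
```

With one data row this matches the hand-written table in the usage example; with several rows it draws a horizontal rule under every row, so drop the per-row `\hline` if you prefer a lighter layout.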

|

| 54 |

+

|

| 55 |

+

## Performance

|

| 56 |

+

|

| 57 |

+

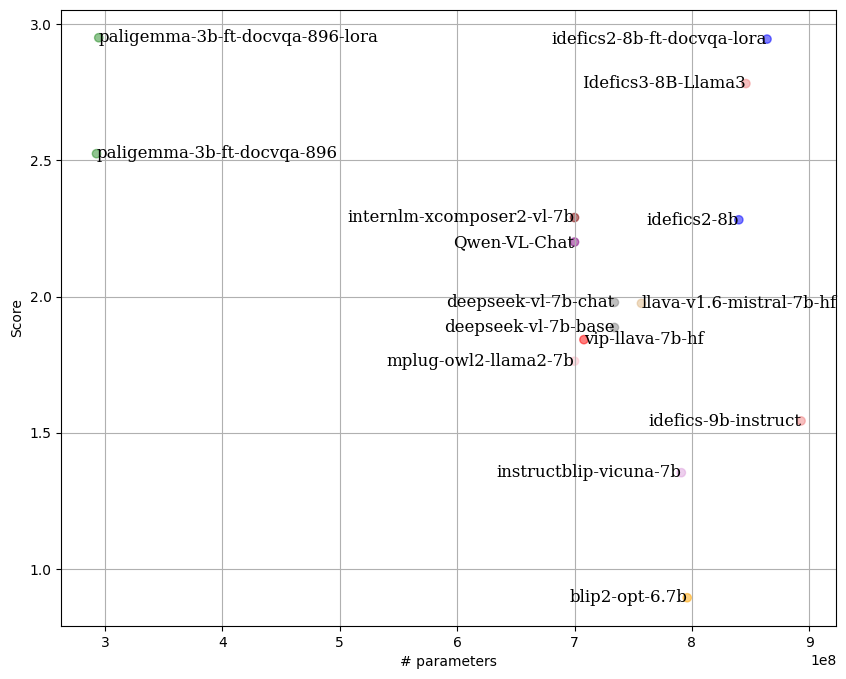

This model was evaluated on 200 question-answer pairs extracted from 100 tables in the **[table-vqa](https://huggingface.co/datasets/cmarkea/table-vqa)** test set. Each table had two question-answer pairs: one in French and one in English.

|

| 58 |

+

|

| 59 |

+

The evaluation used the **[LLM-as-Juries](https://arxiv.org/abs/2404.18796)** method, employing three judge models (GPT-4o, Gemini1.5 Pro, and Claude 3.5-Sonnet). The scoring was adapted to the table QA context, with a scale from 0 to 5 to ensure precision in assessing the model’s performance.

|

| 60 |

+

|

| 61 |

+

Here’s a visualization of the results:

|

| 62 |

+

|

| 63 |

+

|

| 64 |

+

|

| 65 |

+

|

| 66 |

+
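The card does not state how the three judges' scores are combined. As a minimal sketch, assuming each answer's score is the simple average of the judges' 0-to-5 ratings (a hypothetical choice here), per-language and overall scores could be aggregated as follows. The function names and the record layout are illustrative only.

```python
from statistics import mean


def jury_score(judge_scores):
    """Pool one answer's ratings from several judges by simple averaging.

    judge_scores maps a judge name to its rating on the 0-5 scale.
    """
    ratings = list(judge_scores.values())
    if not ratings:
        raise ValueError("no judge ratings")
    for r in ratings:
        if not 0 <= r <= 5:
            raise ValueError(f"rating out of range: {r}")
    return mean(ratings)


def aggregate(records):
    """Average jury scores per language and over all answers.

    records: iterable of (language, {judge_name: rating}) pairs, e.g. one
    French and one English question per table.
    """
    by_lang = {}
    for lang, judge_scores in records:
        by_lang.setdefault(lang, []).append(jury_score(judge_scores))
    summary = {lang: mean(scores) for lang, scores in by_lang.items()}
    summary["all"] = mean([s for scores in by_lang.values() for s in scores])
    return summary
```

Averaging is only one pooling choice; a jury could also use the median or a majority vote, which could change the reported numbers.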

## Citation

```bibtex
@online{AgDePaligemmaTableQALatex,
  AUTHOR = {Tom Agonnoude and Cyrile Delestre},
  URL = {https://huggingface.co/cmarkea/bloomz-3b-dpo-table-qa-latex},
  YEAR = {2024},
  KEYWORDS = {Table understanding, LaTeX, Multilingual, QA},
}
```