End of training

- README.md +3 -2
- all_results.json +22 -0
- eval_results.json +17 -0
- train_results.json +8 -0
- trainer_state.json +0 -0
- training_eval_loss.png +0 -0
- training_loss.png +0 -0
- training_rewards_accuracies.png +0 -0
- training_sft_loss.png +0 -0

README.md

CHANGED

```diff
@@ -2,9 +2,10 @@
 license: gemma
 library_name: peft
 tags:
+- llama-factory
+- lora
 - trl
 - dpo
-- llama-factory
 - generated_from_trainer
 base_model: google/gemma-2b-it
 model-index:
@@ -17,7 +18,7 @@ should probably proofread and complete it, then remove this comment. -->
 
 # Gemma-2B-It-ORPO-SALT
 
-This model is a fine-tuned version of [google/gemma-2b-it](https://huggingface.co/google/gemma-2b-it) on the
+This model is a fine-tuned version of [google/gemma-2b-it](https://huggingface.co/google/gemma-2b-it) on the dpo_mix_en and the bct_non_cot_dpo_1000 datasets.
 It achieves the following results on the evaluation set:
 - Loss: 1.3294
 - Rewards/chosen: -0.1257
```

all_results.json

ADDED

```diff
@@ -0,0 +1,22 @@
+{
+    "epoch": 2.9969690846635686,
+    "eval_logits/chosen": -20.66438865661621,
+    "eval_logits/rejected": -20.695892333984375,
+    "eval_logps/chosen": -1.2573115825653076,
+    "eval_logps/rejected": -1.3996058702468872,
+    "eval_loss": 1.3293771743774414,
+    "eval_odds_ratio_loss": 0.7206552624702454,
+    "eval_rewards/accuracies": 0.5345454812049866,
+    "eval_rewards/chosen": -0.12573117017745972,
+    "eval_rewards/margins": 0.014229409396648407,
+    "eval_rewards/rejected": -0.13996057212352753,
+    "eval_runtime": 82.3315,
+    "eval_samples_per_second": 13.361,
+    "eval_sft_loss": 1.2573115825653076,
+    "eval_steps_per_second": 6.68,
+    "total_flos": 5.618252880760013e+17,
+    "train_loss": 1.3892074464594277,
+    "train_runtime": 8722.5011,
+    "train_samples_per_second": 3.404,
+    "train_steps_per_second": 0.213
+}
```
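The evaluation metrics in all_results.json are linked by a few simple identities. The sketch below checks them, assuming β = 0.1: the diff does not state β, it is inferred here by dividing eval_rewards/chosen by eval_logps/chosen. The relationships tested (reward = β × average log-prob, margin = chosen reward minus rejected reward, sft_loss = -logps/chosen, loss = sft_loss + β × odds_ratio_loss) are consistent with an ORPO objective, but they are an interpretation of the logged numbers, not something the commit documents. Because every relationship is linear, it holds for the logged batch means as well as per example. The tolerance is also an assumption, chosen to absorb float32 rounding.

```python
import json

# Eval metrics copied verbatim from all_results.json in this commit.
ALL_RESULTS = json.loads("""
{
    "eval_logps/chosen": -1.2573115825653076,
    "eval_logps/rejected": -1.3996058702468872,
    "eval_loss": 1.3293771743774414,
    "eval_odds_ratio_loss": 0.7206552624702454,
    "eval_rewards/chosen": -0.12573117017745972,
    "eval_rewards/margins": 0.014229409396648407,
    "eval_rewards/rejected": -0.13996057212352753,
    "eval_sft_loss": 1.2573115825653076
}
""")

BETA = 0.1  # assumption: inferred from rewards/chosen / logps/chosen, not stated in the diff
TOL = 1e-6  # assumption: slack for float32 rounding in the logged values


def check_orpo_metrics(m: dict, beta: float = BETA) -> dict:
    """Return the absolute error of each assumed relationship between metrics."""
    return {
        # reward logged as beta times the average log-prob
        "rewards/chosen": abs(m["eval_rewards/chosen"] - beta * m["eval_logps/chosen"]),
        "rewards/rejected": abs(m["eval_rewards/rejected"] - beta * m["eval_logps/rejected"]),
        # margin is chosen reward minus rejected reward
        "margins": abs(
            m["eval_rewards/margins"]
            - (m["eval_rewards/chosen"] - m["eval_rewards/rejected"])
        ),
        # SFT term is the negative average log-prob of the chosen response
        "sft_loss": abs(m["eval_sft_loss"] + m["eval_logps/chosen"]),
        # total loss = SFT term + beta * odds-ratio term
        "loss": abs(
            m["eval_loss"] - (m["eval_sft_loss"] + beta * m["eval_odds_ratio_loss"])
        ),
    }


if __name__ == "__main__":
    for name, err in check_orpo_metrics(ALL_RESULTS).items():
        print(f"{name}: abs error {err:.2e} (ok={err < TOL})")
```

A different β breaks the last identity noticeably, which is why 0.1 is the plausible value rather than an arbitrary one.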

eval_results.json

ADDED

```diff
@@ -0,0 +1,17 @@
+{
+    "epoch": 2.9969690846635686,
+    "eval_logits/chosen": -20.66438865661621,
+    "eval_logits/rejected": -20.695892333984375,
+    "eval_logps/chosen": -1.2573115825653076,
+    "eval_logps/rejected": -1.3996058702468872,
+    "eval_loss": 1.3293771743774414,
+    "eval_odds_ratio_loss": 0.7206552624702454,
+    "eval_rewards/accuracies": 0.5345454812049866,
+    "eval_rewards/chosen": -0.12573117017745972,
+    "eval_rewards/margins": 0.014229409396648407,
+    "eval_rewards/rejected": -0.13996057212352753,
+    "eval_runtime": 82.3315,
+    "eval_samples_per_second": 13.361,
+    "eval_sft_loss": 1.2573115825653076,
+    "eval_steps_per_second": 6.68
+}
```
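eval_results.json logs an `eval_odds_ratio_loss` next to the SFT term. In ORPO, the odds-ratio term for one preference pair is -log σ(log odds(y_w) - log odds(y_l)), where odds(y) = P(y) / (1 - P(y)). A minimal per-pair sketch in plain Python, assuming (as in the ORPO paper) that P(y) is the length-normalized likelihood exp(mean token log-prob), and reusing the inferred β = 0.1, which the diff does not state. Because the logged value is a mean over pairs of this nonlinear function, plugging the averaged eval_logps into it will not reproduce 0.7207 exactly.

```python
import math


def log_odds(avg_logp: float) -> float:
    """log(p / (1 - p)) for p = exp(avg_logp); requires avg_logp < 0."""
    # log1p(-exp(x)) computes log(1 - p) without cancellation for small p
    return avg_logp - math.log1p(-math.exp(avg_logp))


def log_sigmoid(x: float) -> float:
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def orpo_pair_loss(chosen_logp: float, rejected_logp: float, beta: float = 0.1):
    """Return (total, sft, odds_ratio) for one pair of average log-probs.

    beta = 0.1 is an assumption inferred from the logged metrics.
    """
    sft = -chosen_logp  # negative log-likelihood of the chosen response
    odds_ratio = -log_sigmoid(log_odds(chosen_logp) - log_odds(rejected_logp))
    return sft + beta * odds_ratio, sft, odds_ratio
```

When the chosen and rejected responses are equally likely, the odds-ratio term equals log 2; it falls below that as the model prefers the chosen response.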

train_results.json

ADDED

```diff
@@ -0,0 +1,8 @@
+{
+    "epoch": 2.9969690846635686,
+    "total_flos": 5.618252880760013e+17,
+    "train_loss": 1.3892074464594277,
+    "train_runtime": 8722.5011,
+    "train_samples_per_second": 3.404,
+    "train_steps_per_second": 0.213
+}
```
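The throughput fields in train_results.json can be cross-checked against the runtime. A rough sketch: the rates are rounded to three decimals, so the derived totals (about 29.7k samples and about 1.86k optimizer steps in roughly 2.4 hours, or about 16 samples per step) are approximations, not values recorded in the diff. Reading samples per step as the effective batch size is an assumption about how the trainer counted samples.

```python
# Values copied from train_results.json in this commit.
TRAIN = {
    "epoch": 2.9969690846635686,
    "train_runtime": 8722.5011,
    "train_samples_per_second": 3.404,
    "train_steps_per_second": 0.213,
}


def derived_totals(r: dict) -> dict:
    """Back out approximate totals from the rounded throughput rates."""
    samples = r["train_runtime"] * r["train_samples_per_second"]
    steps = r["train_runtime"] * r["train_steps_per_second"]
    return {
        "hours": r["train_runtime"] / 3600,
        "approx_samples": samples,
        "approx_steps": steps,
        # assumption: samples per optimizer step approximates the effective batch size
        "approx_samples_per_step": samples / steps,
    }


if __name__ == "__main__":
    for name, value in derived_totals(TRAIN).items():
        print(f"{name}: {value:,.2f}")
```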

trainer_state.json

ADDED

The diff for this file is too large to render.
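trainer_state.json is not rendered here, but the Transformers `Trainer` stores its periodic logs in the `log_history` list of that file, and training curves like the PNGs in this commit are likely drawn from it. A minimal sketch that pulls (step, value) pairs for one metric key; the key names in the usage note (`loss`, `eval_loss`) are assumptions about what this run logged, and the helper names are hypothetical.

```python
import json
from pathlib import Path


def metric_series(state: dict, key: str) -> list[tuple[int, float]]:
    """Return (step, value) pairs for every log_history entry containing `key`."""
    return [
        (entry["step"], entry[key])
        for entry in state.get("log_history", [])
        if key in entry
    ]


def load_state(path: str = "trainer_state.json") -> dict:
    """Read a Trainer state file from disk."""
    return json.loads(Path(path).read_text())
```

For example, `metric_series(load_state(), "loss")` would give the training-loss curve and `metric_series(load_state(), "eval_loss")` the evaluation curve, if those keys are present.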

|

|

|

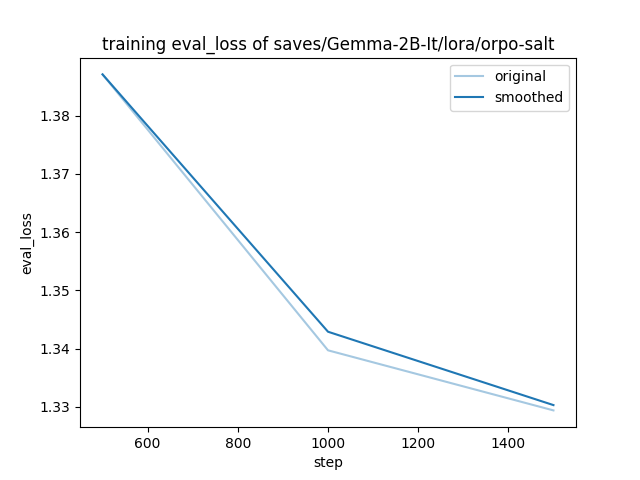

training_eval_loss.png

ADDED

|

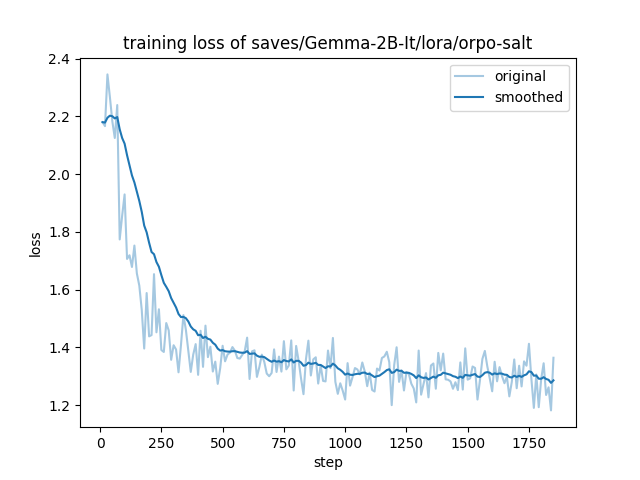

training_loss.png

ADDED

|

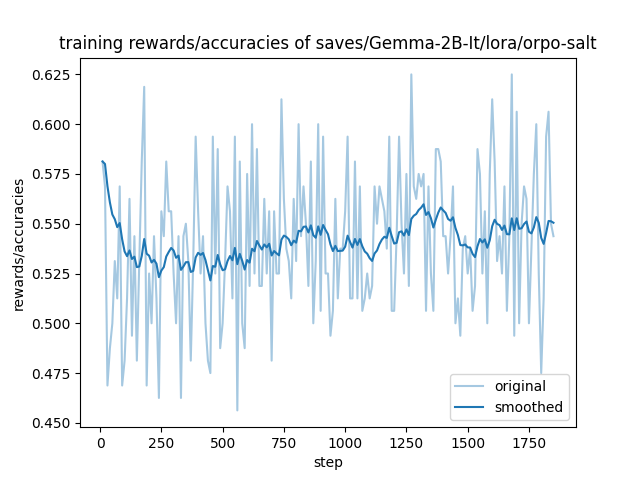

training_rewards_accuracies.png

ADDED

|

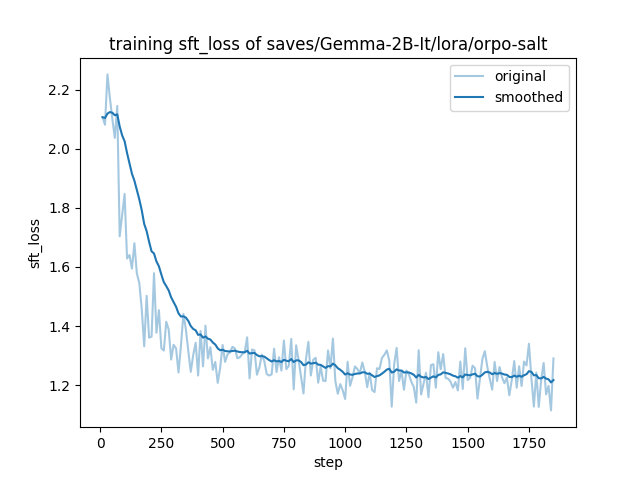

training_sft_loss.png

ADDED

|