Upload README.md

Browse files

README.md

CHANGED

|

@@ -297,10 +297,10 @@ And thank you again to a16z for their generous grant.

|

|

| 297 |

# Original model card: OpenOrca's Mistral 7B OpenOrca

|

| 298 |

|

| 299 |

|

| 300 |

-

<p><h1>🐋

|

| 301 |

|

| 302 |

|

| 303 |

-

|

| 305 |

|

| 306 |

|

|

@@ -313,9 +313,14 @@ We use [OpenChat](https://huggingface.co/openchat) packing, trained with [Axolot

|

|

| 313 |

This release is trained on a curated filtered subset of most of our GPT-4 augmented data.

|

| 314 |

It is the same subset of our data as was used in our [OpenOrcaxOpenChat-Preview2-13B model](https://huggingface.co/Open-Orca/OpenOrcaxOpenChat-Preview2-13B).

|

| 315 |

|

| 316 |

-

HF Leaderboard evals place this model as #2 for all models smaller than 30B at release time, outperforming all but one 13B model

|

| 317 |

|

| 318 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 319 |

|

| 320 |

Want to visualize our full (pre-filtering) dataset? Check out our [Nomic Atlas Map](https://atlas.nomic.ai/map/c1b88b47-2d9b-47e0-9002-b80766792582/2560fd25-52fe-42f1-a58f-ff5eccc890d2).

|

| 321 |

|

|

@@ -328,31 +333,77 @@ We will also give sneak-peak announcements on our Discord, which you can find he

|

|

| 328 |

|

| 329 |

https://AlignmentLab.ai

|

| 330 |

|

| 331 |

-

or

|

| 332 |

|

| 333 |

https://discord.gg/5y8STgB3P3

|

| 334 |

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 335 |

# Prompt Template

|

| 336 |

|

| 337 |

We used [OpenAI's Chat Markup Language (ChatML)](https://github.com/openai/openai-python/blob/main/chatml.md) format, with `<|im_start|>` and `<|im_end|>` tokens added to support this.

|

| 338 |

|

| 339 |

## Example Prompt Exchange

|

| 340 |

|

| 341 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 342 |

|

| 343 |

# Evaluation

|

| 344 |

|

| 345 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 346 |

|

| 347 |

-

TBD

|

| 348 |

|

| 349 |

-

##

|

| 350 |

|

| 351 |

-

|

| 352 |

|

| 353 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 354 |

|

| 355 |

-

TBD

|

| 356 |

|

| 357 |

# Dataset

|

| 358 |

|

|

@@ -364,9 +415,18 @@ We used a curated, filtered selection of most of the GPT-4 augmented data from o

|

|

| 364 |

We trained with 8x A6000 GPUs for 62 hours, completing 4 epochs of full fine tuning on our dataset in one training run.

|

| 365 |

Commodity cost was ~$400.

|

| 366 |

|

|

|

|

| 367 |

# Citation

|

| 368 |

|

| 369 |

```bibtex

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 370 |

@misc{mukherjee2023orca,

|

| 371 |

title={Orca: Progressive Learning from Complex Explanation Traces of GPT-4},

|

| 372 |

author={Subhabrata Mukherjee and Arindam Mitra and Ganesh Jawahar and Sahaj Agarwal and Hamid Palangi and Ahmed Awadallah},

|

|

|

|

| 297 |

# Original model card: OpenOrca's Mistral 7B OpenOrca

|

| 298 |

|

| 299 |

|

| 300 |

+

<p><h1>🐋 Mistral-7B-OpenOrca 🐋</h1></p>

|

| 301 |

|

| 302 |

|

| 303 |

+

|

| 304 |

[<img src="https://raw.githubusercontent.com/OpenAccess-AI-Collective/axolotl/main/image/axolotl-badge-web.png" alt="Built with Axolotl" width="200" height="32"/>](https://github.com/OpenAccess-AI-Collective/axolotl)

|

| 305 |

|

| 306 |

|

|

|

|

| 313 |

This release is trained on a curated filtered subset of most of our GPT-4 augmented data.

|

| 314 |

It is the same subset of our data as was used in our [OpenOrcaxOpenChat-Preview2-13B model](https://huggingface.co/Open-Orca/OpenOrcaxOpenChat-Preview2-13B).

|

| 315 |

|

| 316 |

+

**HF Leaderboard evals place this model as #2 for all models smaller than 30B at release time, outperforming all but one 13B model.**

|

| 317 |

|

| 318 |

+

This release provides a first: a fully open model with class-breaking performance, capable of running fully accelerated on even moderate consumer GPUs.

|

| 319 |

+

Our thanks to the Mistral team for leading the way here.

|

| 320 |

+

|

| 321 |

+

We affectionately codename this model: "*MistralOrca*"

|

| 322 |

+

|

| 323 |

+

If you'd like to try the model now, we have it running on fast GPUs unquantized: https://huggingface.co/spaces/Open-Orca/Mistral-7B-OpenOrca

|

| 324 |

|

| 325 |

Want to visualize our full (pre-filtering) dataset? Check out our [Nomic Atlas Map](https://atlas.nomic.ai/map/c1b88b47-2d9b-47e0-9002-b80766792582/2560fd25-52fe-42f1-a58f-ff5eccc890d2).

|

| 326 |

|

|

|

|

| 333 |

|

| 334 |

https://AlignmentLab.ai

|

| 335 |

|

| 336 |

+

or check the OpenAccess AI Collective Discord for more information about Axolotl trainer here:

|

| 337 |

|

| 338 |

https://discord.gg/5y8STgB3P3

|

| 339 |

|

| 340 |

+

|

| 341 |

+

# Quantized Models

|

| 342 |

+

|

| 343 |

+

Quantized versions of this model are generously made available by [TheBloke](https://huggingface.co/TheBloke).

|

| 344 |

+

|

| 345 |

+

- AWQ: https://huggingface.co/TheBloke/Mistral-7B-OpenOrca-AWQ

|

| 346 |

+

- GPTQ: https://huggingface.co/TheBloke/Mistral-7B-OpenOrca-GPTQ

|

| 347 |

+

- GGUF: https://huggingface.co/TheBloke/Mistral-7B-OpenOrca-GGUF

|

| 348 |

+

|

| 349 |

+

|

| 350 |

# Prompt Template

|

| 351 |

|

| 352 |

We used [OpenAI's Chat Markup Language (ChatML)](https://github.com/openai/openai-python/blob/main/chatml.md) format, with `<|im_start|>` and `<|im_end|>` tokens added to support this.

|

| 353 |

|

| 354 |

## Example Prompt Exchange

|

| 355 |

|

| 356 |

+

```

|

| 357 |

+

<|im_start|>system

|

| 358 |

+

You are MistralOrca, a large language model trained by Alignment Lab AI. Write out your reasoning step-by-step to be sure you get the right answers!

|

| 359 |

+

<|im_end|>

|

| 360 |

+

<|im_start|>user

|

| 361 |

+

How are you?<|im_end|>

|

| 362 |

+

<|im_start|>assistant

|

| 363 |

+

I am doing well!<|im_end|>

|

| 364 |

+

<|im_start|>user

|

| 365 |

+

Please tell me about how mistral winds have attracted super-orcas.<|im_end|>

|

| 366 |

+

```

|

| 367 |

+

|

| 368 |

|

| 369 |

# Evaluation

|

| 370 |

|

| 371 |

+

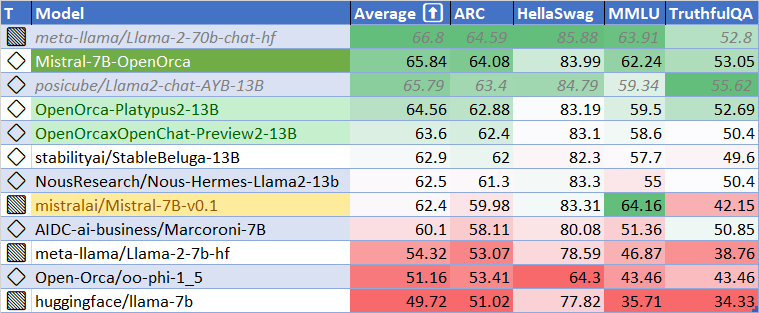

## HuggingFace Leaderboard Performance

|

| 372 |

+

|

| 373 |

+

We have evaluated using the methodology and tools for the HuggingFace Leaderboard, and find that we have dramatically improved upon the base model.

|

| 374 |

+

We find **105%** of the base model's performance on HF Leaderboard evals, averaging **65.33**.

|

| 375 |

+

|

| 376 |

+

At release time, this beats all 7B models, and all but one 13B.

|

| 377 |

+

|

| 378 |

+

|

| 379 |

+

|

| 380 |

+

|

| 381 |

+

| Metric | Value |

|

| 382 |

+

|-----------------------|-------|

|

| 383 |

+

| MMLU (5-shot) | 61.73 |

|

| 384 |

+

| ARC (25-shot) | 63.57 |

|

| 385 |

+

| HellaSwag (10-shot) | 83.79 |

|

| 386 |

+

| TruthfulQA (0-shot) | 52.24 |

|

| 387 |

+

| Avg. | 65.33 |

|

| 388 |

+

|

| 389 |

+

We use [Language Model Evaluation Harness](https://github.com/EleutherAI/lm-evaluation-harness) to run the benchmark tests above, using the same version as the HuggingFace LLM Leaderboard.

|

| 390 |

|

|

|

|

| 391 |

|

| 392 |

+

## AGIEval Performance

|

| 393 |

|

| 394 |

+

We compare our results to the base Mistral-7B model (using LM Evaluation Harness).

|

| 395 |

|

| 396 |

+

We find **129%** of the base model's performance on AGI Eval, averaging **0.397**.

|

| 397 |

+

As well, we significantly improve upon the official `mistralai/Mistral-7B-Instruct-v0.1` finetuning, achieving **119%** of their performance.

|

| 398 |

+

|

| 399 |

+

|

| 400 |

+

|

| 401 |

+

## BigBench-Hard Performance

|

| 402 |

+

|

| 403 |

+

We find **119%** of the base model's performance on BigBench-Hard, averaging **0.416**.

|

| 404 |

+

|

| 405 |

+

|

| 406 |

|

|

|

|

| 407 |

|

| 408 |

# Dataset

|

| 409 |

|

|

|

|

| 415 |

We trained with 8x A6000 GPUs for 62 hours, completing 4 epochs of full fine tuning on our dataset in one training run.

|

| 416 |

Commodity cost was ~$400.

|

| 417 |

|

| 418 |

+

|

| 419 |

# Citation

|

| 420 |

|

| 421 |

```bibtex

|

| 422 |

+

@software{lian2023mistralorca1

|

| 423 |

+

title = {MistralOrca: Mistral-7B Model Instruct-tuned on Filtered OpenOrcaV1 GPT-4 Dataset},

|

| 424 |

+

author = {Wing Lian and Bleys Goodson and Guan Wang and Eugene Pentland and Austin Cook and Chanvichet Vong and "Teknium"},

|

| 425 |

+

year = {2023},

|

| 426 |

+

publisher = {HuggingFace},

|

| 427 |

+

journal = {HuggingFace repository},

|

| 428 |

+

howpublished = {\url{https://huggingface.co/Open-Orca/Mistral-7B-OpenOrca},

|

| 429 |

+

}

|

| 430 |

@misc{mukherjee2023orca,

|

| 431 |

title={Orca: Progressive Learning from Complex Explanation Traces of GPT-4},

|

| 432 |

author={Subhabrata Mukherjee and Arindam Mitra and Ganesh Jawahar and Sahaj Agarwal and Hamid Palangi and Ahmed Awadallah},

|